Botond Barta

HuAMR: A Hungarian AMR Parser and Dataset

Feb 27, 2025

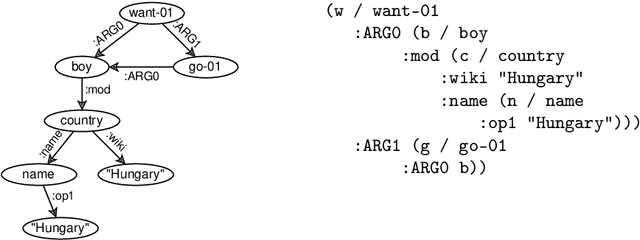

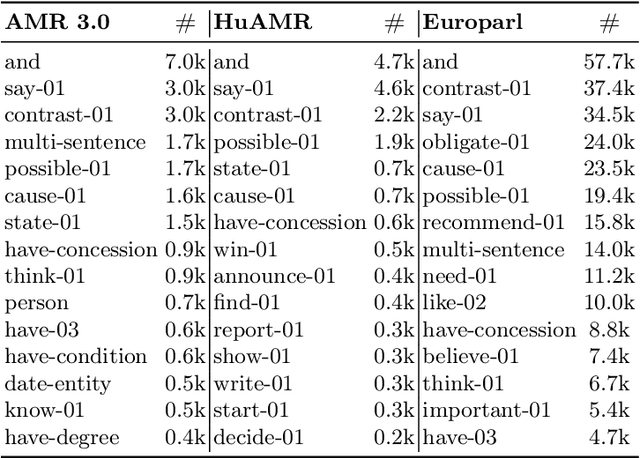

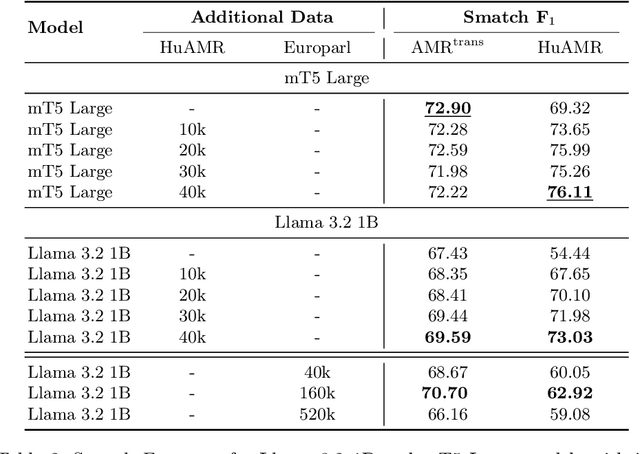

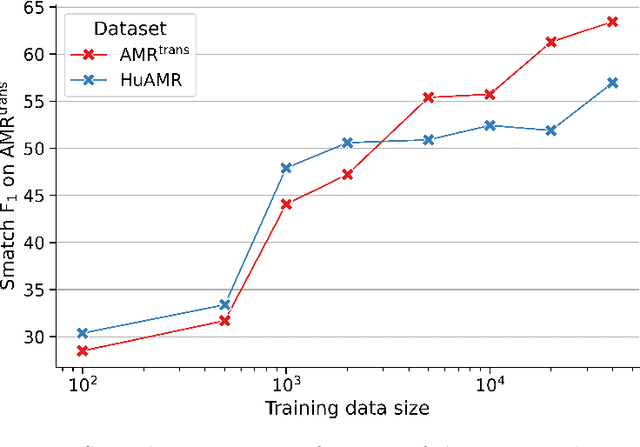

Abstract: We present HuAMR, the first Abstract Meaning Representation (AMR) dataset and a suite of large language model-based AMR parsers for Hungarian, targeting the scarcity of semantic resources for non-English languages. To create HuAMR, we employed Llama-3.1-70B to automatically generate silver-standard AMR annotations, which we then refined manually to ensure quality. Building on this dataset, we investigate how different model architectures (mT5 Large and Llama-3.2-1B) and fine-tuning strategies affect AMR parsing performance. While incorporating silver-standard AMRs from Llama-3.1-70B into the training data of smaller models does not consistently boost overall scores, our results show that these techniques effectively enhance parsing accuracy on Hungarian news data (the domain of HuAMR). We evaluate our parsers using Smatch scores and confirm the potential of HuAMR and our parsers for advancing semantic parsing research.

From News to Summaries: Building a Hungarian Corpus for Extractive and Abstractive Summarization

Apr 12, 2024

Abstract: Training summarization models requires substantial amounts of training data. However, for less-resourced languages like Hungarian, openly available models and datasets are notably scarce. To address this gap, our paper introduces HunSum-2, an open-source Hungarian corpus suitable for training abstractive and extractive summarization models. The dataset is assembled from segments of the Common Crawl corpus, which undergo thorough cleaning, preprocessing and deduplication. In addition to abstractive summarization, we generate sentence-level labels for extractive summarization using sentence similarity. We train baseline models for both extractive and abstractive summarization using the collected dataset. To demonstrate the effectiveness of the trained models, we perform both quantitative and qualitative evaluation. Our dataset, models and code are publicly available, encouraging replication, further research, and real-world applications across various domains.
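To make the idea of similarity-based extractive labels concrete, here is a minimal sketch. It assumes a simple token-overlap (Jaccard) similarity as a stand-in; the abstract does not say which similarity measure HunSum-2 uses, and the function names `jaccard` and `extractive_labels` are hypothetical. Each summary sentence marks its most similar article sentence as a positive example.

```python
import re


def tokenize(sentence: str) -> set[str]:
    """Lowercase the sentence and return its set of word tokens."""
    return set(re.findall(r"\w+", sentence.lower()))


def jaccard(a: str, b: str) -> float:
    """Token-overlap similarity (an assumed stand-in for the real measure)."""
    ta, tb = tokenize(a), tokenize(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def extractive_labels(article_sentences: list[str],
                      summary_sentences: list[str]) -> list[int]:
    """Return one 0/1 label per article sentence.

    For every summary sentence, the article sentence with the highest
    similarity is labeled 1, provided the similarity is non-zero.
    """
    labels = [0] * len(article_sentences)
    if not article_sentences:
        return labels
    for summ in summary_sentences:
        scores = [jaccard(summ, art) for art in article_sentences]
        best = max(range(len(scores)), key=scores.__getitem__)
        if scores[best] > 0:
            labels[best] = 1
    return labels


# Toy data, invented for illustration only.
article = [
    "The city council approved the new budget on Monday.",
    "Local weather was sunny throughout the week.",
    "The budget includes more funding for public transport.",
]
summary = ["Council approved a budget with more public transport funding."]
labels = extractive_labels(article, summary)
print(labels)
```

In practice an embedding-based similarity would likely replace `jaccard`; the labeling loop would stay the same.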

TreeSwap: Data Augmentation for Machine Translation via Dependency Subtree Swapping

Nov 04, 2023

Abstract: Data augmentation methods for neural machine translation are particularly useful when only a limited amount of training data is available, which is often the case when dealing with low-resource languages. We introduce a novel augmentation method, which generates new sentences by swapping objects and subjects across bisentences. This is performed simultaneously based on the dependency parse trees of the source and target sentences. We name this method TreeSwap. Our results show that TreeSwap achieves consistent improvements over baseline models in 4 language pairs in both directions on resource-constrained datasets. We also explore domain-specific corpora, but find that our method does not make significant improvements on law, medical and IT data. We report the scores of similar augmentation methods and find that TreeSwap performs comparably. We also analyze the generated sentences qualitatively and find that the augmentation produces a correct translation in most cases. Our code is available on GitHub.
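The swapping step can be illustrated with a small sketch. This is not the authors' implementation: it assumes the object subtree of each sentence has already been located and is given as a hand-written token span, whereas the method described above derives the subtrees from dependency parses. The names `Side`, `swap_spans` and `augment` are hypothetical, and the English-German sentences are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Side:
    """One side (source or target) of a bisentence."""
    tokens: list[str]
    span: tuple[int, int]  # half-open [start, end) of the subtree to swap


def swap_spans(a: Side, b: Side) -> tuple[list[str], list[str]]:
    """Exchange the marked spans of two sentences in the same language."""
    (a0, a1), (b0, b1) = a.span, b.span
    new_a = a.tokens[:a0] + b.tokens[b0:b1] + a.tokens[a1:]
    new_b = b.tokens[:b0] + a.tokens[a0:a1] + b.tokens[b1:]
    return new_a, new_b


def augment(pair1: tuple[Side, Side], pair2: tuple[Side, Side]):
    """Swap the spans on the source and target sides simultaneously,
    so each augmented source stays aligned with its augmented target."""
    src1, src2 = swap_spans(pair1[0], pair2[0])
    tgt1, tgt2 = swap_spans(pair1[1], pair2[1])
    return (src1, tgt1), (src2, tgt2)


# Object spans are specified by hand here (an assumption of this sketch).
pair1 = (Side("I read a book".split(), (2, 4)),
         Side("Ich lese ein Buch".split(), (2, 4)))
pair2 = (Side("She bought the red car".split(), (2, 5)),
         Side("Sie kaufte das rote Auto".split(), (2, 5)))

aug1, aug2 = augment(pair1, pair2)
print(" ".join(aug1[0]), "|", " ".join(aug1[1]))
print(" ".join(aug2[0]), "|", " ".join(aug2[1]))
```

Swapping both sides in lockstep is what keeps the new pairs usable as translation examples; a real pipeline would also need filtering for cases where the swapped subtrees do not correspond.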

Data Augmentation for Machine Translation via Dependency Subtree Swapping

Jul 13, 2023

Abstract: We present a generic framework for data augmentation via dependency subtree swapping that is applicable to machine translation. We extract corresponding subtrees from the dependency parse trees of the source and target sentences and swap these across bisentences to create augmented samples. We perform thorough filtering based on graph-based similarities of the dependency trees and additional heuristics to ensure that extracted subtrees correspond to the same meaning. We conduct resource-constrained experiments on 4 language pairs in both directions using the IWSLT text translation datasets and the Hunglish2 corpus. The results demonstrate consistent improvements in BLEU score over our baseline models in 3 out of 4 language pairs. Our code is available on GitHub.

HunSum-1: an Abstractive Summarization Dataset for Hungarian

Feb 01, 2023

Abstract: We introduce HunSum-1: a dataset for Hungarian abstractive summarization, consisting of 1.14M news articles. The dataset is built by collecting, cleaning and deduplicating data from 9 major Hungarian news sites through CommonCrawl. Using this dataset, we build abstractive summarizer models based on huBERT and mT5. We demonstrate the value of the created dataset by performing a quantitative and qualitative analysis on the models' results. The HunSum-1 dataset, all models used in our experiments and our code are available open source.

A Three Step Training Approach with Data Augmentation for Morphological Inflection

Sep 14, 2021

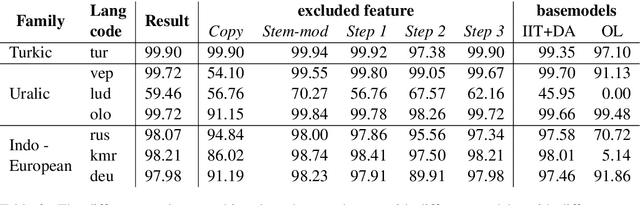

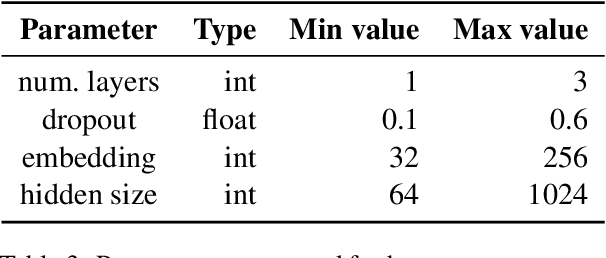

Abstract: We present the BME submission for the SIGMORPHON 2021 Task 0 Part 1, Generalization Across Typologically Diverse Languages shared task. We use an LSTM encoder-decoder model with three-step training that is first trained on all languages, then fine-tuned on each language family and finally fine-tuned on individual languages. We use a different type of data augmentation technique in the first two steps. Our system outperformed the only other submission. Although it remains worse than the Transformer baseline released by the organizers, our model is simpler and our data augmentation techniques are easily applicable to new languages. We perform ablation studies and show that the augmentation techniques and the three training steps often help but sometimes have a negative effect.
