Benedikt Kreis

Constrained Object Placement Using Reinforcement Learning

Apr 16, 2024

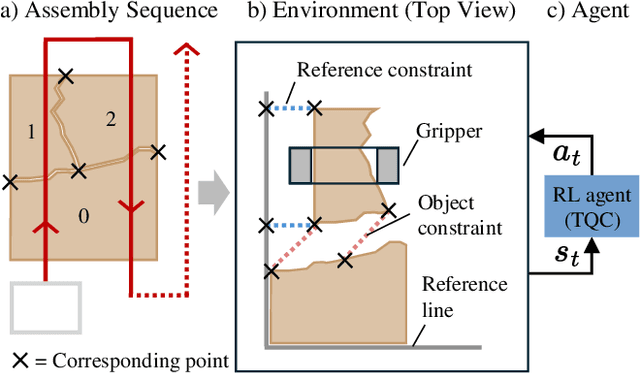

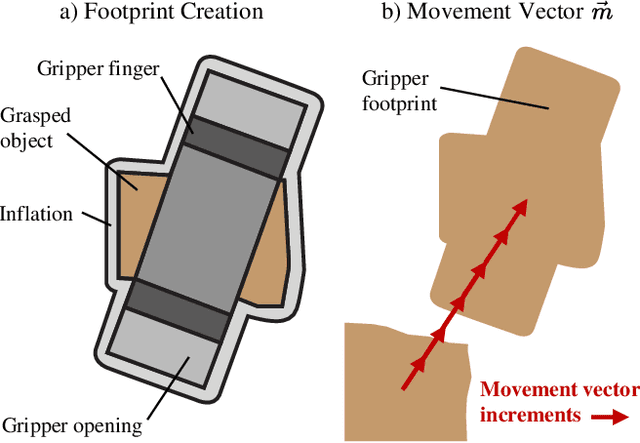

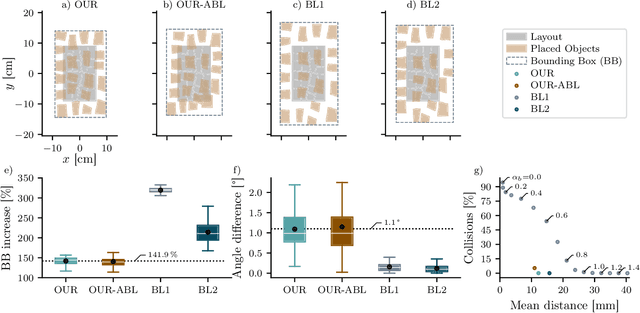

Abstract: Close and precise placement of irregularly shaped objects requires a skilled robotic system. Particularly challenging is the manipulation of objects that have sensitive top surfaces and a fixed set of neighbors. To avoid damaging the surface, they have to be grasped from the side, and during placement, their neighbor relations have to be maintained. In this work, we train a reinforcement learning agent that generates smooth end-effector motions to place objects as close as possible next to each other. During the placement, our agent considers neighbor constraints defined in a given layout of the objects while trying to avoid collisions. Our approach learns to place compact object assemblies without the need for predefined spacing between objects as required by traditional methods. We thoroughly evaluated our approach using a two-finger gripper mounted to a robotic arm with six degrees of freedom. The results show that our agent outperforms two baseline approaches in terms of object assembly compactness, thereby reducing the needed space to place the objects according to the given neighbor constraints. On average, our approach reduces the distances between all placed objects by at least 60%, with fewer collisions at the same compactness compared to both baselines.

Reactive Correction of Object Placement Errors for Robotic Arrangement Tasks

Feb 15, 2023

Abstract: When arranging objects with robotic arms, the quality of the end result strongly depends on the achievable placement accuracy. However, even the most advanced robotic systems are prone to positioning errors that can occur at different steps of the manipulation process. Ignoring such errors can lead to the partial or complete failure of the arrangement. In this paper, we present a novel approach to autonomously detect and correct misplaced objects by pushing them with a robotic arm. We thoroughly tested our approach both in simulation and on real hardware using a Robotiq two-finger gripper mounted on a UR5 robotic arm. In our evaluation, we demonstrate the successful compensation for different errors injected during the manipulation of regularly shaped objects. Consequently, we achieve a highly reliable object placement accuracy in the millimeter range.

Learning Depth Vision-Based Personalized Robot Navigation From Dynamic Demonstrations in Virtual Reality

Oct 04, 2022

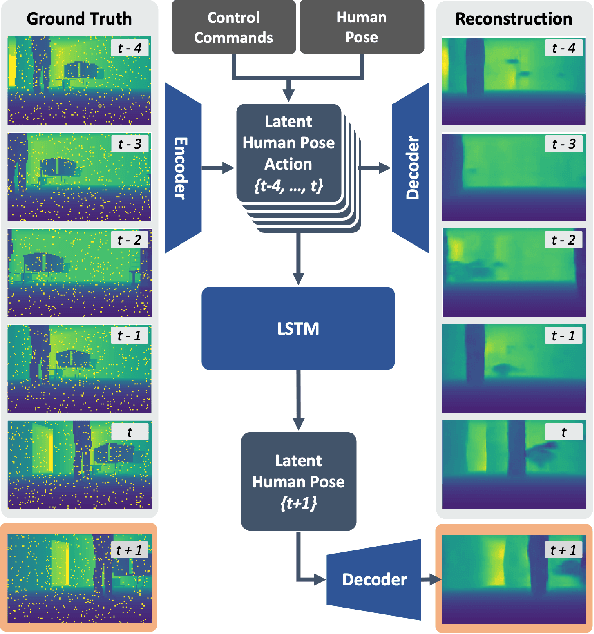

Abstract: For the best human-robot interaction experience, the robot's navigation policy should take into account the personal preferences of the user. In this paper, we present a learning framework complemented by a perception pipeline to train a depth vision-based, personalized navigation controller from user demonstrations. Our refined virtual reality interface enables the demonstration of robot navigation trajectories while the user is in motion, for dynamic interaction scenarios. In a detailed analysis, we evaluate different configurations of the perception pipeline. As the experiments demonstrate, our new pipeline compresses the perceived depth images to a latent state representation and, thus, enables efficient reasoning about the robot's dynamic environment during learning. We discuss the robot's navigation performance in various virtual scenes by employing a variational autoencoder in combination with a motion predictor and demonstrate the first personalized robot navigation controller that solely relies on depth images.
