Arnaldo Mayer

Automatic Breast Lesion Classification by Joint Neural Analysis of Mammography and Ultrasound

Sep 23, 2020

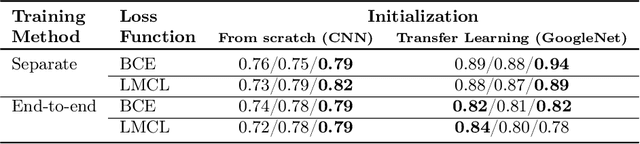

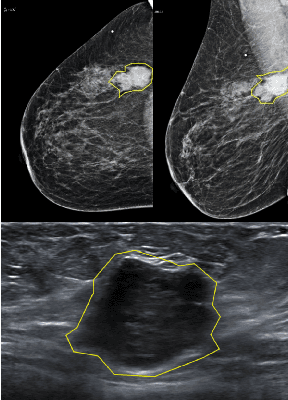

Abstract: Mammography and ultrasound are extensively used by radiologists as complementary modalities to achieve better performance in breast cancer diagnosis. However, existing computer-aided diagnosis (CAD) systems for the breast are generally based on a single modality. In this work, we propose a deep-learning-based method for classifying breast cancer lesions from their respective mammography and ultrasound images. We present various approaches and show a consistent improvement in performance when utilizing both modalities. The proposed approach is based on a GoogLeNet architecture, fine-tuned for our data in two training steps. First, a distinct neural network is trained separately for each modality, generating high-level features. Then, the aggregated features originating from each modality are used to train a multimodal network that provides the final classification. In quantitative experiments, the proposed approach achieves an AUC of 0.94, outperforming state-of-the-art models trained on a single modality. Moreover, it performs similarly to an average radiologist, surpassing two out of four radiologists participating in a reader study. The promising results suggest that the proposed method may become a valuable decision support tool for breast radiologists.
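The two-step scheme described above (per-modality feature extractors, then a multimodal classifier trained on the aggregated features) can be illustrated with a minimal Python sketch. It assumes the aggregation is plain concatenation and replaces the multimodal network with a single logistic layer. The function names, feature values and weights are hypothetical; this is not the authors' implementation.

```python
import math
from typing import List, Sequence


def fuse_features(mammo_feats: Sequence[float], us_feats: Sequence[float]) -> List[float]:
    """Concatenate per-modality feature vectors into one multimodal vector (assumed aggregation)."""
    return list(mammo_feats) + list(us_feats)


def _sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fused_malignancy_score(fused: Sequence[float], weights: Sequence[float], bias: float) -> float:
    """Hypothetical logistic head standing in for the trained multimodal network."""
    if len(fused) != len(weights):
        raise ValueError("weights must match the fused feature length")
    z = bias + sum(w * x for w, x in zip(weights, fused))
    return _sigmoid(z)


# Hypothetical high-level features from each single-modality branch.
mammo = [0.2, 0.7, 0.1]
us = [0.9, 0.4]
fused = fuse_features(mammo, us)  # 5-dimensional multimodal vector
score = fused_malignancy_score(fused, weights=[0.5, -0.3, 0.8, 1.2, -0.1], bias=-0.2)
```

Concatenation keeps each modality's features intact and leaves it to the classifier to learn how to weigh them against each other; in the described approach, a trained multimodal network would replace the hand-set weights used here.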

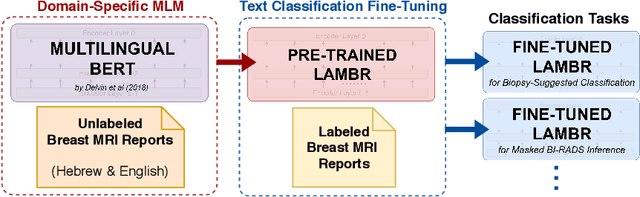

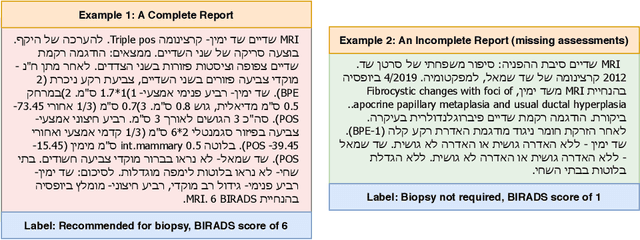

Labeling of Multilingual Breast MRI Reports

Jul 06, 2020

Abstract: Medical reports are an essential medium for recording a patient's condition throughout a clinical trial. They contain valuable information that can be extracted to generate the large labeled datasets needed to develop clinical tools. However, most medical reports are stored in an unstructured format, and a trained human annotator (typically a doctor) must manually assess and label each case, making the procedure expensive and time-consuming. In this work, we present a framework for developing a multilingual breast MRI report classifier using a custom-built language representation called LAMBR. Our proposed method overcomes practical challenges faced in clinical settings, and we demonstrate improved performance in extracting labels from medical reports compared with conventional approaches.

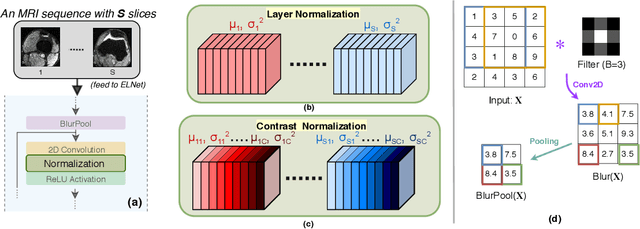

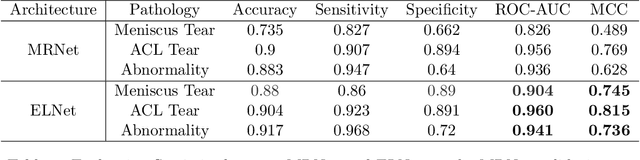

Knee Injury Detection using MRI with Efficiently-Layered Network (ELNet)

May 06, 2020

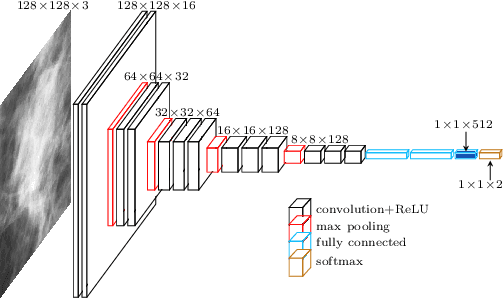

Abstract: Magnetic Resonance Imaging (MRI) is a widely accepted imaging technique for knee injury analysis. Its ability to capture knee structure in three dimensions makes it the ideal tool for radiologists to locate potential tears in the knee. To better cope with the ever-growing workload of musculoskeletal (MSK) radiologists, automated tools for patient triage are becoming a real need, reducing delays in the reading of pathological cases. In this work, we present the Efficiently-Layered Network (ELNet), a convolutional neural network (CNN) architecture optimized for the task of initial knee MRI diagnosis for triage. Unlike past approaches, we train ELNet from scratch rather than using transfer learning. The proposed method is validated quantitatively and qualitatively, and compares favorably against the state-of-the-art MRNet while using a single imaging stack (axial or coronal) as input. Additionally, we demonstrate our model's capability to locate tears in the knee despite the absence of localization information during training. Lastly, the proposed model is extremely lightweight (< 1 MB) and therefore easy to train and deploy in real clinical settings.
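The "< 1 MB" figure can be put in perspective with simple arithmetic: with weights stored as 32-bit floats (4 bytes each), a model stays under 1 MB (10^6 bytes) only if it has fewer than 250,000 parameters. The sketch below counts the parameters of a stack of square 2-D convolutions; the layer widths are hypothetical and chosen only to illustrate the calculation. They are not ELNet's actual configuration.

```python
from typing import List, Tuple


def conv2d_params(in_ch: int, out_ch: int, kernel: int, bias: bool = True) -> int:
    """Learnable weights in a square 2-D convolution: k*k*C_in*C_out (+ C_out biases)."""
    return kernel * kernel * in_ch * out_ch + (out_ch if bias else 0)


def model_size_mb(n_params: int, bytes_per_param: int = 4) -> float:
    """Approximate size in megabytes (10**6 bytes), assuming float32 weights by default."""
    return n_params * bytes_per_param / 1e6


# Hypothetical (in_channels, out_channels, kernel_size) per layer; NOT ELNet's real layout.
layers: List[Tuple[int, int, int]] = [(1, 16, 3), (16, 32, 3), (32, 64, 3), (64, 64, 3)]
total = sum(conv2d_params(i, o, k) for i, o, k in layers)
print(total, round(model_size_mb(total), 3))  # → 60224 0.241
```

Because the parameter count of a convolution grows with the product of input and output channels, keeping channel widths small is the main lever for a model of this size.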

Automatic detection and diagnosis of sacroiliitis in CT scans as incidental findings

Aug 14, 2019

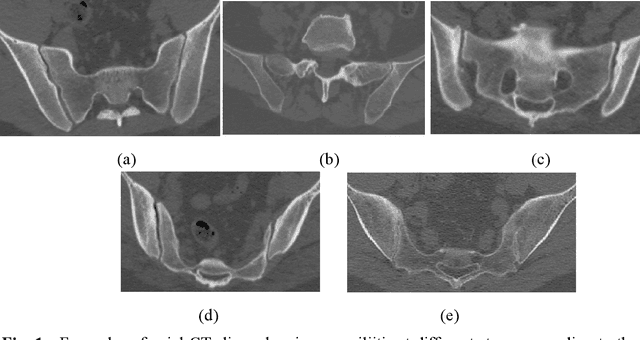

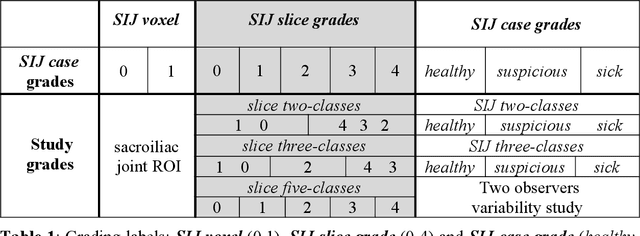

Abstract: Early diagnosis of sacroiliitis may lead to preventive treatment, which can significantly improve the patient's quality of life in the long run. Oftentimes, a CT scan of the lower back or abdomen is acquired for suspected back pain. However, since the differences between a healthy and an inflamed sacroiliac joint in the early stages are subtle, the condition may be missed. We have developed a new automatic algorithm for the diagnosis and grading of sacroiliitis in CT scans as an incidental finding, for patients who underwent CT scanning as part of their lower back pain workup. The method is based on supervised machine learning and deep learning techniques. The input is a CT scan that includes the patient's pelvis. The output is a diagnosis for each sacroiliac joint. The algorithm consists of four steps: 1) computation of an initial region of interest (ROI) that includes the pelvic joints region using heuristics and a U-Net classifier; 2) refinement of the ROI to detect both sacroiliac joints using a four-tree random forest; 3) individual sacroiliitis grading of each sacroiliac joint in each CT slice with a custom slice CNN classifier; and 4) sacroiliitis diagnosis and grading by combining the individual slice grades using a random forest. Experimental results on 484 sacroiliac joints yield a binary and a 3-class case classification accuracy of 91.9% and 86%, a sensitivity of 95% and 82%, and an area under the curve (AUC) of 0.97 and 0.57, respectively. Automatic computer-based analysis of CT scans has the potential to be a useful method for the diagnosis and grading of sacroiliitis as an incidental finding.
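Step 4 has to turn a variable number of per-slice grades into a fixed-length input for the case-level random forest. One possible way to do this is sketched below in Python. The 0/1/2 slice-grade scale, the function name and the particular summary features (fraction of slices at each grade, maximum grade, longest run of consecutive abnormal slices) are illustrative assumptions, not the features used in the paper.

```python
from collections import Counter
from typing import List, Sequence

GRADES = (0, 1, 2)  # hypothetical slice-grade scale: 0 = normal, 1-2 = increasing severity


def case_features(slice_grades: Sequence[int]) -> List[float]:
    """Summarize one sacroiliac joint's per-slice grades as a fixed-length vector.

    Output: [fraction at grade 0, fraction at grade 1, fraction at grade 2,
             maximum grade, longest run of consecutive slices graded >= 1].
    """
    if not slice_grades:
        raise ValueError("need at least one slice")
    if any(g not in GRADES for g in slice_grades):
        raise ValueError(f"grades must be one of {GRADES}")
    counts = Counter(slice_grades)
    n = len(slice_grades)
    fractions = [counts[g] / n for g in GRADES]
    # Longest contiguous block of abnormal slices (grade >= 1).
    longest = run = 0
    for g in slice_grades:
        run = run + 1 if g >= 1 else 0
        longest = max(longest, run)
    return fractions + [float(max(slice_grades)), float(longest)]


features = case_features([0, 0, 1, 2, 2, 1, 0, 1])
print(features)  # → [0.375, 0.375, 0.25, 2.0, 4.0]
```

Every joint maps to the same five numbers regardless of how many slices it spans, which is what a tree ensemble such as a random forest needs as input.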
