Arjun Manoharan

Dynamic probabilistic logic models for effective abstractions in RL

Oct 15, 2021

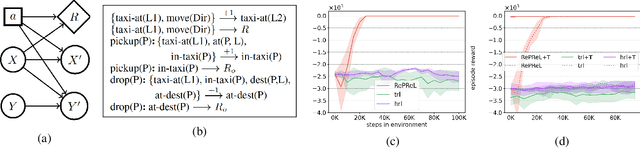

Abstract:State abstraction enables sample-efficient learning and better task transfer in complex reinforcement learning environments. Recently, we proposed RePReL (Kokel et al. 2021), a hierarchical framework that leverages a relational planner to provide useful state abstractions for learning. We present a brief overview of this framework and the use of a dynamic probabilistic logic model to design these state abstractions. Our experiments show that RePReL not only achieves better performance and efficient learning on the task at hand but also demonstrates better generalization to unseen tasks.

Option Encoder: A Framework for Discovering a Policy Basis in Reinforcement Learning

Sep 09, 2019

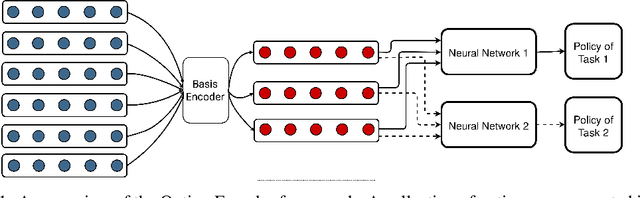

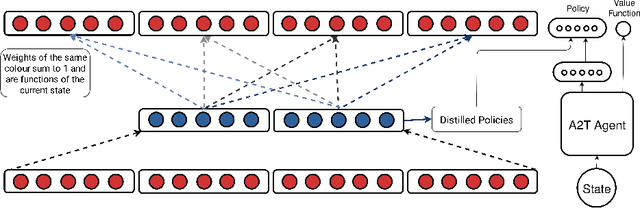

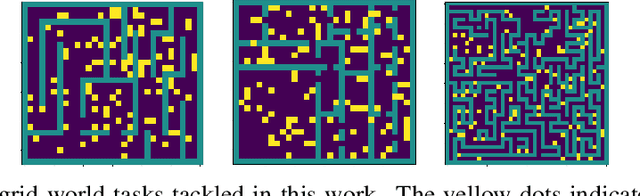

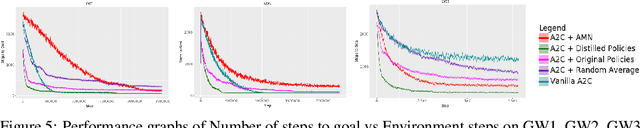

Abstract:Option discovery and skill acquisition frameworks are integral to the functioning of a Hierarchically organized Reinforcement learning agent. However, such techniques often yield a large number of options or skills, which can potentially be represented succinctly by filtering out any redundant information. Such a reduction can reduce the required computation while also improving the performance on a target task. In order to compress an array of option policies, we attempt to find a policy basis that accurately captures the set of all options. In this work, we propose Option Encoder, an auto-encoder based framework with intelligently constrained weights, that helps discover a collection of basis policies. The policy basis can be used as a proxy for the original set of skills in a suitable hierarchically organized framework. We demonstrate the efficacy of our method on a collection of grid-worlds and on the high-dimensional Fetch-Reach robotic manipulation task by evaluating the obtained policy basis on a set of downstream tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge