Ari Juels

Props for Machine-Learning Security

Oct 27, 2024

Abstract:We propose protected pipelines or props for short, a new approach for authenticated, privacy-preserving access to deep-web data for machine learning (ML). By permitting secure use of vast sources of deep-web data, props address the systemic bottleneck of limited high-quality training data in ML development. Props also enable privacy-preserving and trustworthy forms of inference, allowing for safe use of sensitive data in ML applications. Props are practically realizable today by leveraging privacy-preserving oracle systems initially developed for blockchain applications.

Stealing Machine Learning Models via Prediction APIs

Oct 03, 2016

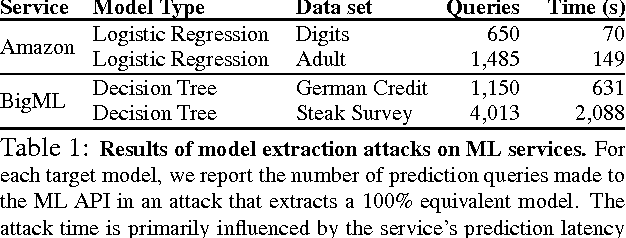

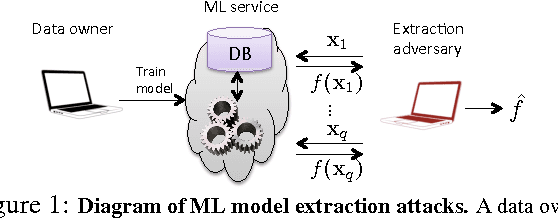

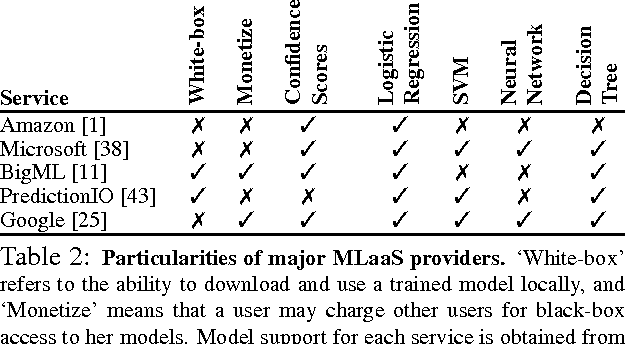

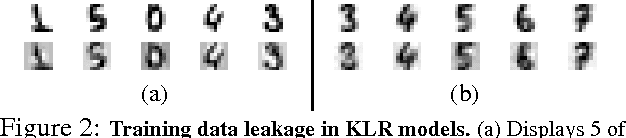

Abstract:Machine learning (ML) models may be deemed confidential due to their sensitive training data, commercial value, or use in security applications. Increasingly often, confidential ML models are being deployed with publicly accessible query interfaces. ML-as-a-service ("predictive analytics") systems are an example: Some allow users to train models on potentially sensitive data and charge others for access on a pay-per-query basis. The tension between model confidentiality and public access motivates our investigation of model extraction attacks. In such attacks, an adversary with black-box access, but no prior knowledge of an ML model's parameters or training data, aims to duplicate the functionality of (i.e., "steal") the model. Unlike in classical learning theory settings, ML-as-a-service offerings may accept partial feature vectors as inputs and include confidence values with predictions. Given these practices, we show simple, efficient attacks that extract target ML models with near-perfect fidelity for popular model classes including logistic regression, neural networks, and decision trees. We demonstrate these attacks against the online services of BigML and Amazon Machine Learning. We further show that the natural countermeasure of omitting confidence values from model outputs still admits potentially harmful model extraction attacks. Our results highlight the need for careful ML model deployment and new model extraction countermeasures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge