Aoxiang Ning

DFDNet: Dynamic Frequency-Guided De-Flare Network

Jul 23, 2025

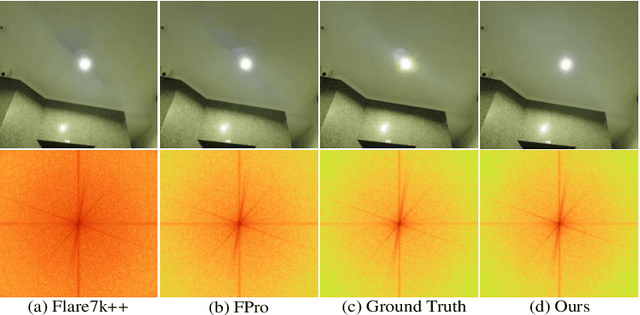

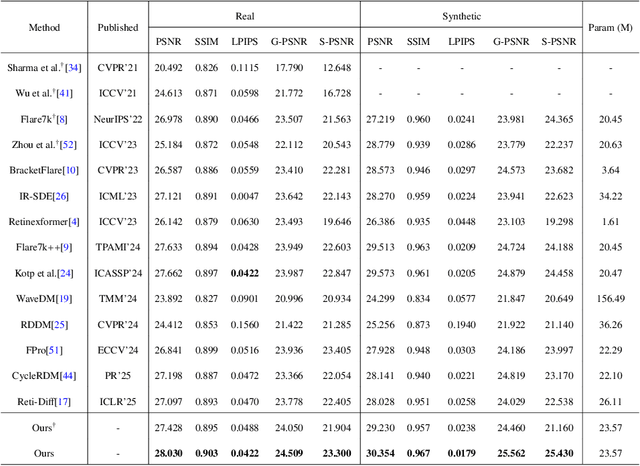

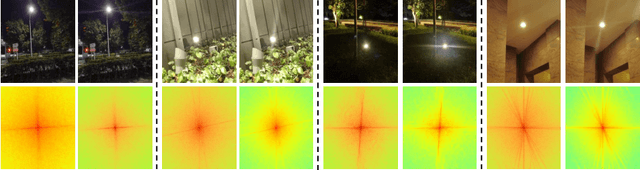

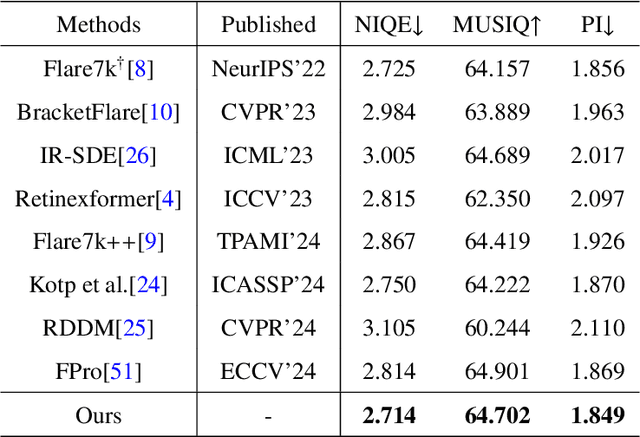

Abstract:Strong light sources in nighttime photography frequently produce flares in images, significantly degrading visual quality and impacting the performance of downstream tasks. While some progress has been made, existing methods continue to struggle with removing large-scale flare artifacts and repairing structural damage in regions near the light source. We observe that these challenging flare artifacts exhibit more significant discrepancies from the reference images in the frequency domain compared to the spatial domain. Therefore, this paper presents a novel dynamic frequency-guided deflare network (DFDNet) that decouples content information from flare artifacts in the frequency domain, effectively removing large-scale flare artifacts. Specifically, DFDNet consists mainly of a global dynamic frequency-domain guidance (GDFG) module and a local detail guidance module (LDGM). The GDFG module guides the network to perceive the frequency characteristics of flare artifacts by dynamically optimizing global frequency domain features, effectively separating flare information from content information. Additionally, we design an LDGM via a contrastive learning strategy that aligns the local features of the light source with the reference image, reduces local detail damage from flare removal, and improves fine-grained image restoration. The experimental results demonstrate that the proposed method outperforms existing state-of-the-art methods in terms of performance. The code is available at \href{https://github.com/AXNing/DFDNet}{https://github.com/AXNing/DFDNet}.

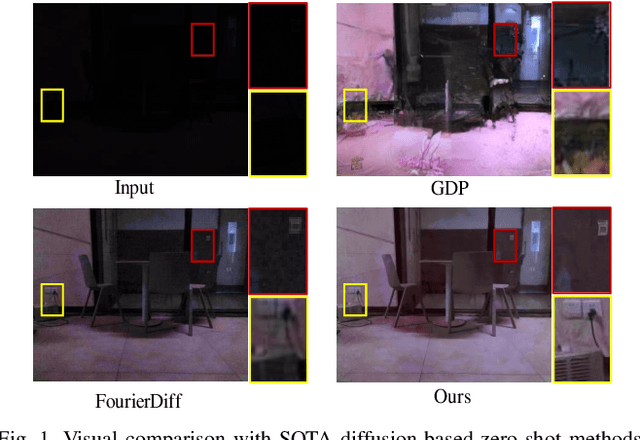

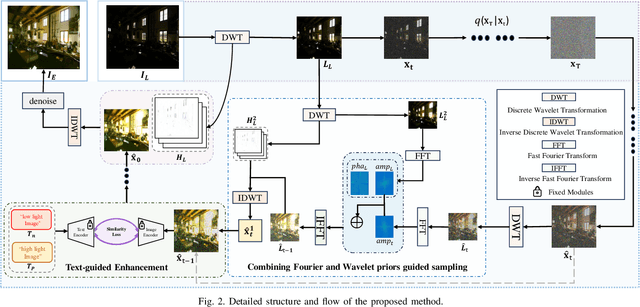

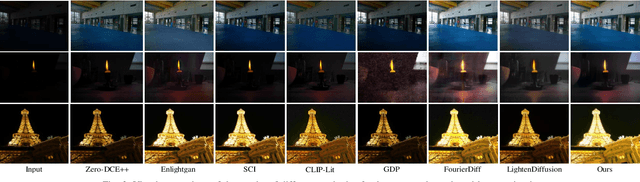

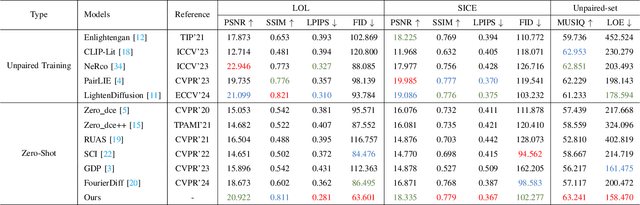

Zero-Shot Low-Light Image Enhancement via Joint Frequency Domain Priors Guided Diffusion

Nov 21, 2024

Abstract:Due to the singularity of real-world paired datasets and the complexity of low-light environments, this leads to supervised methods lacking a degree of scene generalisation. Meanwhile, limited by poor lighting and content guidance, existing zero-shot methods cannot handle unknown severe degradation well. To address this problem, we will propose a new zero-shot low-light enhancement method to compensate for the lack of light and structural information in the diffusion sampling process by effectively combining the wavelet and Fourier frequency domains to construct rich a priori information. The key to the inspiration comes from the similarity between the wavelet and Fourier frequency domains: both light and structure information are closely related to specific frequency domain regions, respectively. Therefore, by transferring the diffusion process to the wavelet low-frequency domain and combining the wavelet and Fourier frequency domains by continuously decomposing them in the inverse process, the constructed rich illumination prior is utilised to guide the image generation enhancement process. Sufficient experiments show that the framework is robust and effective in various scenarios. The code will be available at: \href{https://github.com/hejh8/Joint-Wavelet-and-Fourier-priors-guided-diffusion}{https://github.com/hejh8/Joint-Wavelet-and-Fourier-priors-guided-diffusion}.

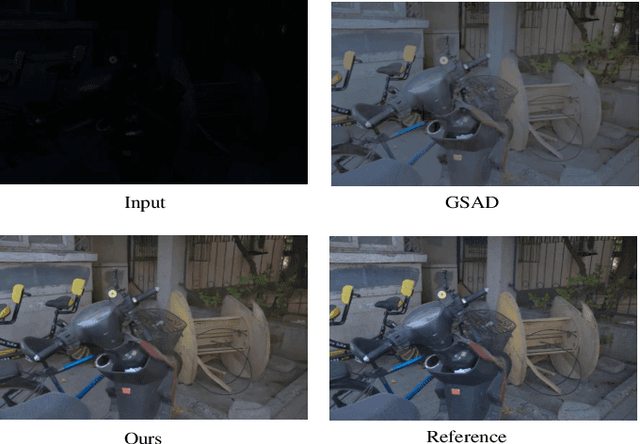

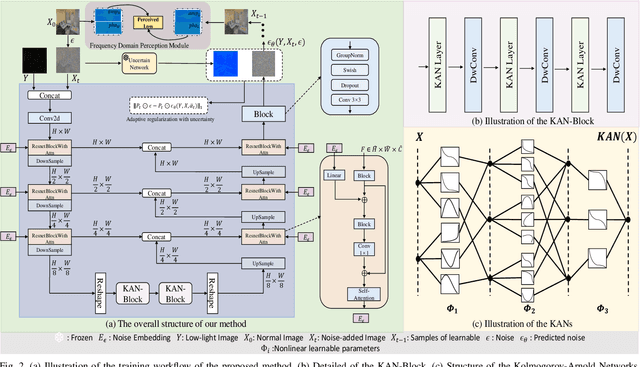

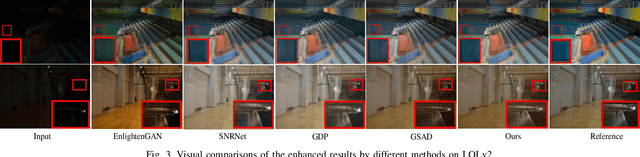

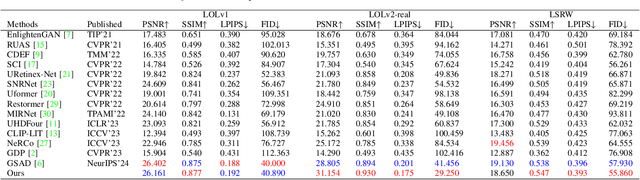

KAN See In the Dark

Sep 05, 2024

Abstract:Existing low-light image enhancement methods are difficult to fit the complex nonlinear relationship between normal and low-light images due to uneven illumination and noise effects. The recently proposed Kolmogorov-Arnold networks (KANs) feature spline-based convolutional layers and learnable activation functions, which can effectively capture nonlinear dependencies. In this paper, we design a KAN-Block based on KANs and innovatively apply it to low-light image enhancement. This method effectively alleviates the limitations of current methods constrained by linear network structures and lack of interpretability, further demonstrating the potential of KANs in low-level vision tasks. Given the poor perception of current low-light image enhancement methods and the stochastic nature of the inverse diffusion process, we further introduce frequency-domain perception for visually oriented enhancement. Extensive experiments demonstrate the competitive performance of our method on benchmark datasets. The code will be available at: https://github.com/AXNing/KSID}{https://github.com/AXNing/KSID.

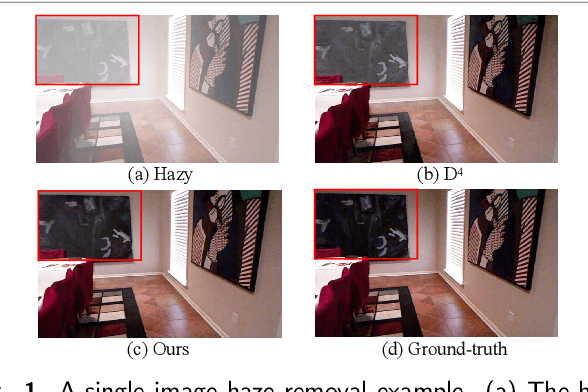

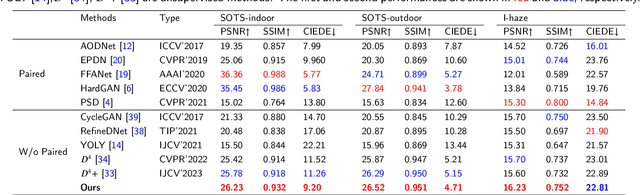

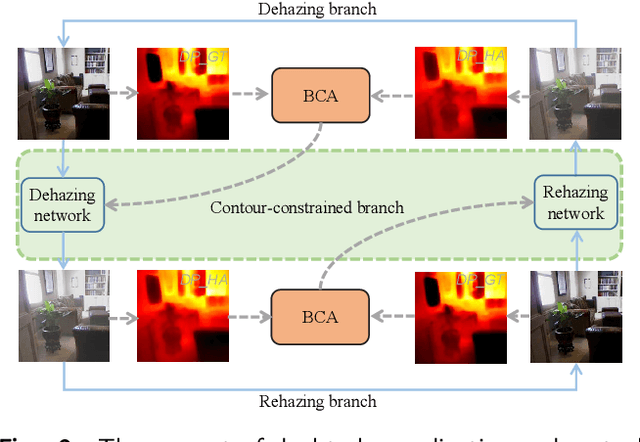

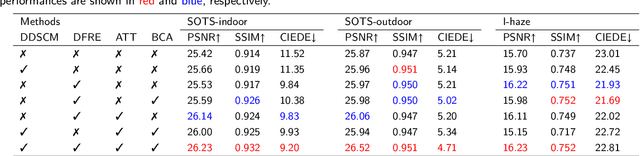

Addressing Domain Discrepancy: A Dual-branch Collaborative Model to Unsupervised Dehazing

Jul 14, 2024

Abstract:Although synthetic data can alleviate acquisition challenges in image dehazing tasks, it also introduces the problem of domain bias when dealing with small-scale data. This paper proposes a novel dual-branch collaborative unpaired dehazing model (DCM-dehaze) to address this issue. The proposed method consists of two collaborative branches: dehazing and contour constraints. Specifically, we design a dual depthwise separable convolutional module (DDSCM) to enhance the information expressiveness of deeper features and the correlation to shallow features. In addition, we construct a bidirectional contour function to optimize the edge features of the image to enhance the clarity and fidelity of the image details. Furthermore, we present feature enhancers via a residual dense architecture to eliminate redundant features of the dehazing process and further alleviate the domain deviation problem. Extensive experiments on benchmark datasets show that our method reaches the state-of-the-art. This project code will be available at \url{https://github.com/Fan-pixel/DCM-dehaze.

Artistic-style text detector and a new Movie-Poster dataset

Jun 24, 2024Abstract:Although current text detection algorithms demonstrate effectiveness in general scenarios, their performance declines when confronted with artistic-style text featuring complex structures. This paper proposes a method that utilizes Criss-Cross Attention and residual dense block to address the incomplete and misdiagnosis of artistic-style text detection by current algorithms. Specifically, our method mainly consists of a feature extraction backbone, a feature enhancement network, a multi-scale feature fusion module, and a boundary discrimination module. The feature enhancement network significantly enhances the model's perceptual capabilities in complex environments by fusing horizontal and vertical contextual information, allowing it to capture detailed features overlooked in artistic-style text. We incorporate residual dense block into the Feature Pyramid Network to suppress the effect of background noise during feature fusion. Aiming to omit the complex post-processing, we explore a boundary discrimination module that guides the correct generation of boundary proposals. Furthermore, given that movie poster titles often use stylized art fonts, we collected a Movie-Poster dataset to address the scarcity of artistic-style text data. Extensive experiments demonstrate that our proposed method performs superiorly on the Movie-Poster dataset and produces excellent results on multiple benchmark datasets. The code and the Movie-Poster dataset will be available at: https://github.com/biedaxiaohua/Artistic-style-text-detection

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge