Anthony Cimorelli

Self-Supervised Learning of Gait-Based Biomarkers

Jul 30, 2023

Abstract: Markerless motion capture (MMC) is revolutionizing gait analysis in clinical settings by making it more accessible, raising the question of how to extract the most clinically meaningful information from gait data. In multiple fields ranging from image processing to natural language processing, self-supervised learning (SSL) from large amounts of unannotated data produces very effective representations for downstream tasks. However, there has been only limited use of SSL to learn effective representations of gait and movement, and it has not been applied to gait analysis with MMC. One SSL objective that has not been applied to gait is contrastive learning, which finds representations that place similar samples closer together in the learned space. If the learned similarity metric captures clinically meaningful differences, this could produce a useful representation for many downstream clinical tasks. Contrastive learning can also be combined with causal masking to predict future timesteps, which is an appealing SSL objective given the dynamical nature of gait. We applied these techniques to gait analyses performed with MMC in a rehabilitation hospital from a diverse clinical population. We find that contrastive learning on unannotated gait data learns a representation that captures clinically meaningful information. We probe this learned representation using the framework of biomarkers and show it holds promise as both a diagnostic and a response biomarker: it can accurately classify diagnosis from gait and is responsive to inpatient therapy, respectively. We ultimately hope these learned representations will enable predictive and prognostic gait-based biomarkers that can facilitate precision rehabilitation through greater use of MMC to quantify movement in rehabilitation.

* Accepted to Ambient Intelligence for Healthcare workshop at MICCAI 2023
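
The contrastive objective described in the abstract above can be illustrated with a small sketch. The abstract does not say which contrastive loss was used, so this example assumes an InfoNCE-style loss, a common choice, and is not the authors' implementation. The function names, the cosine similarity measure, and the `temperature` value are all illustrative assumptions. Each anchor embedding is pulled toward its paired positive and pushed away from the other positives in the batch.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def info_nce_loss(anchors, positives, temperature=0.1):
    """Mean InfoNCE loss over a batch (assumed objective, for illustration).

    anchors[i] and positives[i] are embeddings of two "similar" samples;
    positives[j] for j != i serve as negatives for anchors[i].
    """
    total = 0.0
    for i, anchor in enumerate(anchors):
        logits = [cosine_similarity(anchor, p) / temperature for p in positives]
        # Numerically stable log-sum-exp over all candidates.
        max_logit = max(logits)
        log_sum_exp = max_logit + math.log(
            sum(math.exp(value - max_logit) for value in logits)
        )
        # Cross-entropy with the matching pair as the correct "class".
        total += log_sum_exp - logits[i]
    return total / len(anchors)


# Toy 2-D embeddings: matched pairs give a much lower loss than swapped pairs.
aligned = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = info_nce_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
```

In this toy case `aligned` is close to zero while `swapped` is close to `1 / temperature`, which is the behavior one would want from a loss that places similar samples closer together in the learned space.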

Markerless Motion Capture and Biomechanical Analysis Pipeline

Mar 19, 2023

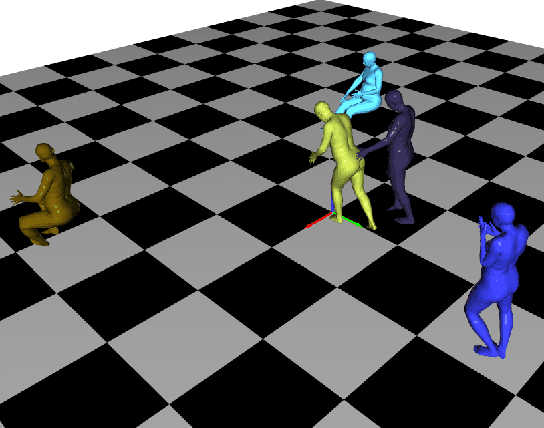

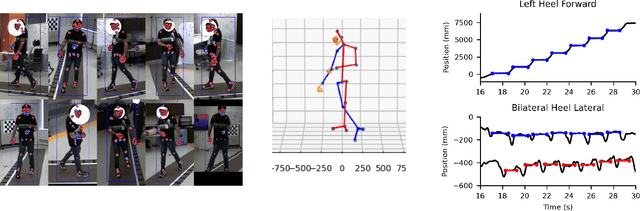

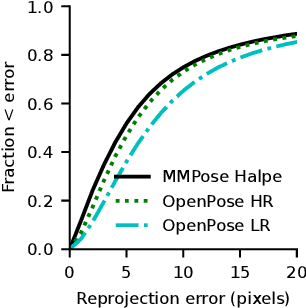

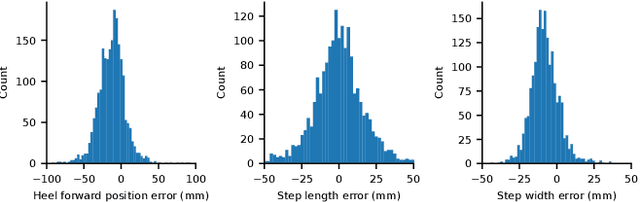

Abstract: Markerless motion capture using computer vision and human pose estimation (HPE) has the potential to expand access to precise movement analysis. This could greatly benefit rehabilitation by enabling more accurate tracking of outcomes and providing more sensitive tools for research. There are numerous steps between obtaining videos and extracting accurate biomechanical results, and limited research to guide many critical design decisions in these pipelines. In this work, we analyze several of these steps, including the algorithm used to detect keypoints and the keypoint set, the approach to reconstructing trajectories for biomechanical inverse kinematics (IK), and the optimization of the IK process. Several features we find important are: 1) using a recent algorithm trained on many datasets that produces a dense set of biomechanically-motivated keypoints, 2) using an implicit representation to reconstruct smooth, anatomically constrained marker trajectories for IK, 3) iteratively optimizing the biomechanical model to match the dense markers, and 4) appropriate regularization of the IK process. Our pipeline makes it easy to obtain accurate biomechanical estimates of movement in a rehabilitation hospital.

Improved Trajectory Reconstruction for Markerless Pose Estimation

Mar 08, 2023

Abstract: Markerless pose estimation allows reconstructing human movement from multiple synchronized and calibrated views, and has the potential to make movement analysis easy and quick, including gait analysis. This could enable much more frequent and quantitative characterization of gait impairments, allowing better monitoring of outcomes and responses to interventions. However, the impact of different keypoint detectors and reconstruction algorithms on markerless pose estimation accuracy has not been thoroughly evaluated. We tested these algorithmic choices on data acquired from a multicamera system from a heterogeneous sample of 25 individuals seen in a rehabilitation hospital. We found that using a top-down keypoint detector and reconstructing trajectories with an implicit function enabled accurate, smooth and anatomically plausible trajectories, with noise in the step width estimates, compared to a GaitRite walkway, of only 8 mm.

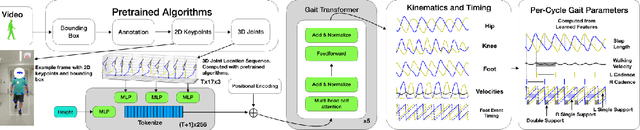

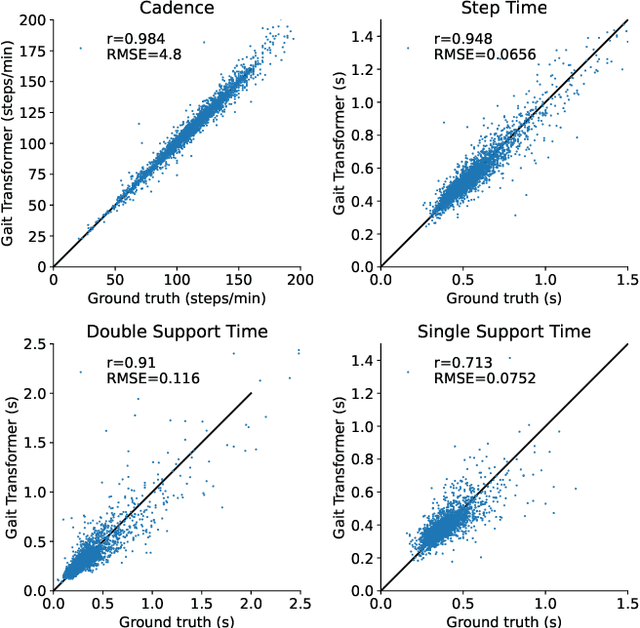

Transforming Gait: Video-Based Spatiotemporal Gait Analysis

Mar 17, 2022

Abstract: Human pose estimation from monocular video is a rapidly advancing field that offers great promise to human movement science and rehabilitation. This potential is tempered by the smaller body of work ensuring the outputs are clinically meaningful and properly calibrated. Gait analysis, typically performed in a dedicated lab, produces precise measurements including kinematics and step timing. Using over 7000 monocular videos from an instrumented gait analysis lab, we trained a neural network to map 3D joint trajectories and the height of individuals onto interpretable biomechanical outputs including gait cycle timing, sagittal plane joint kinematics, and spatiotemporal trajectories. This task-specific layer produces accurate estimates of the timing of foot contact and foot off events. After parsing the kinematic outputs into individual gait cycles, it also enables accurate cycle-by-cycle estimates of cadence, step time, double and single support time, walking speed and step length.
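
To make the step from gait events to spatiotemporal parameters concrete, here is a minimal sketch of how step time and cadence could be derived from foot contact times. It is not the paper's method: the function names are hypothetical, the event times are made-up illustrative values, and it assumes contact times are already available in seconds for each foot. A step is counted from one foot's contact to the next contact of the opposite foot.

```python
def step_times(left_contacts, right_contacts):
    """Durations in seconds between consecutive contacts of opposite feet."""
    events = sorted(
        [(t, "L") for t in left_contacts] + [(t, "R") for t in right_contacts]
    )
    durations = []
    for (t0, side0), (t1, side1) in zip(events, events[1:]):
        # Skip same-side pairs, which indicate a missed contralateral event.
        if side0 != side1:
            durations.append(t1 - t0)
    return durations


def cadence_steps_per_minute(durations):
    """Cadence computed from the mean step time."""
    mean_step_time = sum(durations) / len(durations)
    return 60.0 / mean_step_time


# Illustrative (not measured) foot contact times in seconds.
left = [0.0, 1.0, 2.0]
right = [0.5, 1.5]
durations = step_times(left, right)            # [0.5, 0.5, 0.5, 0.5]
cadence = cadence_steps_per_minute(durations)  # 120.0
```

Walking speed and step length would additionally need foot positions at each contact, which this sketch leaves out.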
