Annika Bremhorst

DogFLW: Dog Facial Landmarks in the Wild Dataset

May 19, 2024

Abstract: Affective computing for animals is a rapidly expanding research area that is going deeper than automated movement tracking to address animal internal states, like pain and emotions. Facial expressions can serve to communicate information about these states in mammals. However, unlike human-related studies, there is a significant shortage of datasets that would enable the automated analysis of animal facial expressions. Inspired by the recently introduced Cat Facial Landmarks in the Wild dataset, presenting cat faces annotated with 48 facial anatomy-based landmarks, in this paper, we develop an analogous dataset containing 3,274 annotated images of dogs. Our dataset is based on a scheme of 46 facial anatomy-based landmarks. The DogFLW dataset is available from the corresponding author upon a reasonable request.

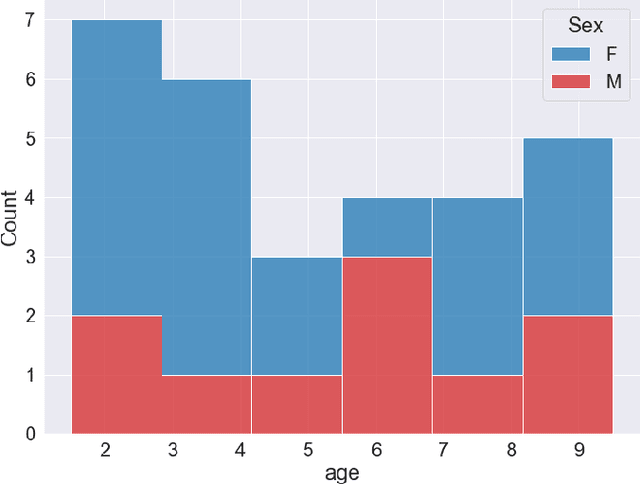

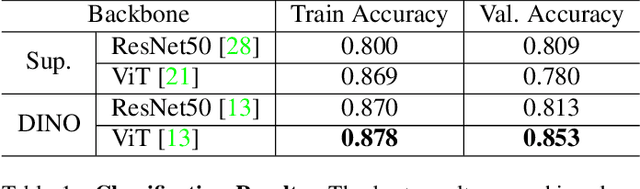

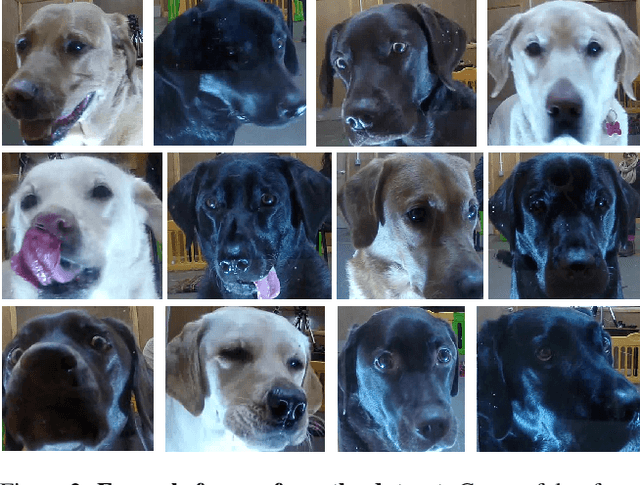

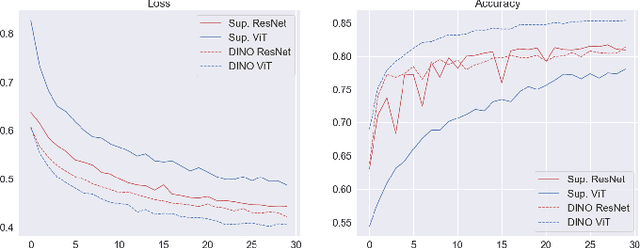

Deep Learning Models for Automated Classification of Dog Emotional States from Facial Expressions

Jun 11, 2022

Abstract: Similarly to humans, facial expressions in animals are closely linked with emotional states. However, in contrast to the human domain, automated recognition of emotional states from facial expressions in animals is underexplored, mainly due to difficulties in data collection and establishment of ground truth concerning emotional states of non-verbal users. We apply recent deep learning techniques to classify (positive) anticipation and (negative) frustration of dogs on a dataset collected in a controlled experimental setting. We explore the suitability of different backbones (e.g. ResNet, ViT) under different supervisions to this task, and find that features of a self-supervised pretrained ViT (DINO-ViT) are superior to the other alternatives. To the best of our knowledge, this work is the first to address the task of automatic classification of canine emotions on data acquired in a controlled experiment.
