Anna Brugger

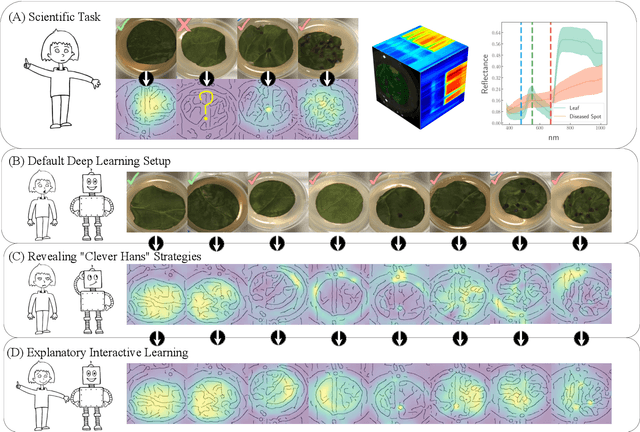

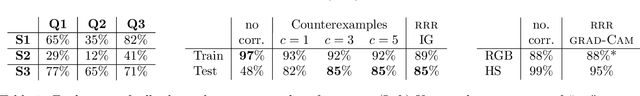

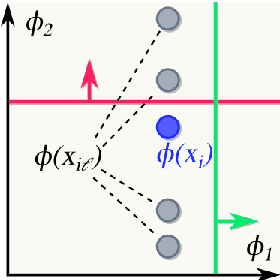

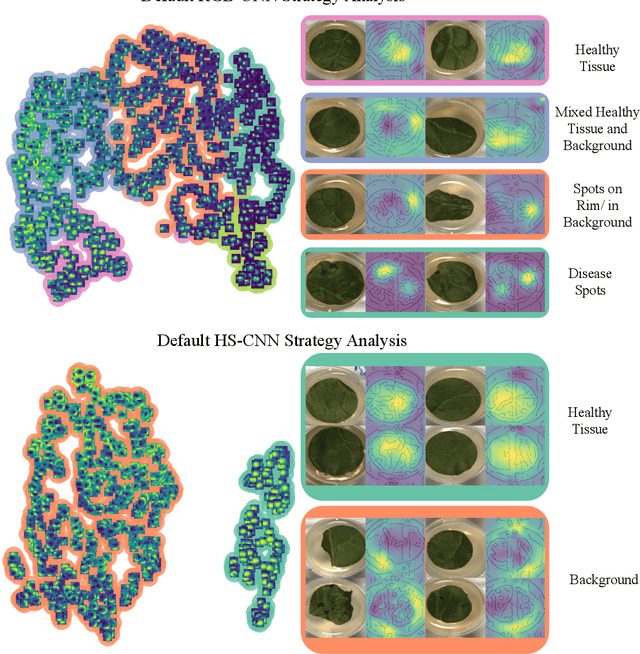

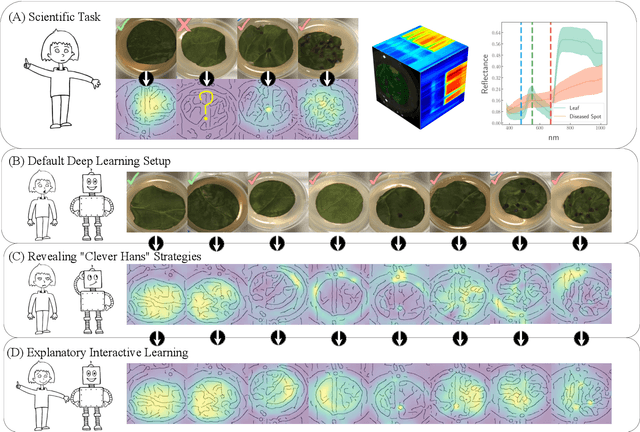

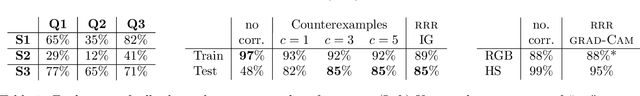

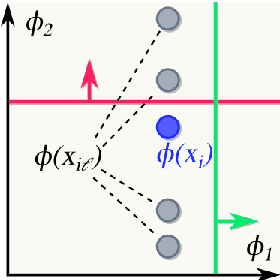

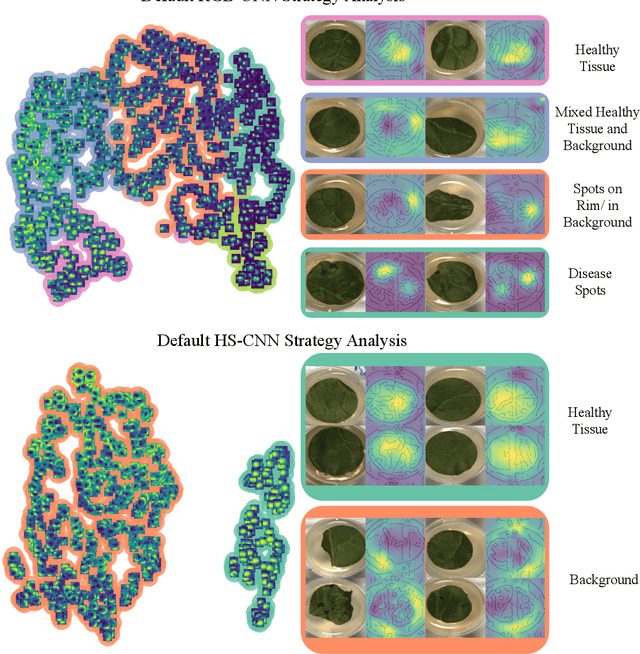

Right for the Wrong Scientific Reasons: Revising Deep Networks by Interacting with their Explanations

Jan 31, 2020

Authors: Patrick Schramowski, Wolfgang Stammer, Stefano Teso, Anna Brugger, Xiaoting Shao, Hans-Georg Luigs, Anne-Katrin Mahlein, Kristian Kersting

Abstract: Deep neural networks have shown excellent performance in many real-world applications. Unfortunately, they may show "Clever Hans"-like behavior, making use of confounding factors within datasets, to achieve high performance. In this work we introduce the novel learning setting of "explanatory interactive learning" (XIL) and illustrate its benefits on a plant phenotyping research task. XIL adds the scientist to the training loop so that she interactively revises the original model by providing feedback on its explanations. Our experimental results demonstrate that XIL can help avoid Clever Hans moments in machine learning and encourages (or discourages, if appropriate) trust in the underlying model.

* arXiv admin note: text overlap with arXiv:1805.08578
