Anil Kokaram

LiteVPNet: A Lightweight Network for Video Encoding Control in Quality-Critical Applications

Oct 14, 2025

Abstract: In the last decade, video workflows in the cinema production ecosystem have presented new use cases for video streaming technology. These new workflows, e.g. On-set Virtual Production, demand precise quality control and energy efficiency. Existing approaches to transcoding often fall short of these requirements, either because they lack quality control or because of their computational overhead. To fill this gap, we present a lightweight neural network (LiteVPNet) that accurately predicts the Quantisation Parameters for NVENC AV1 encoders needed to achieve a specified VMAF score. We use low-complexity features, including bitstream characteristics, video complexity measures, and CLIP-based semantic embeddings. Our results demonstrate that LiteVPNet achieves mean VMAF errors below 1.2 points across a wide range of quality targets. Notably, LiteVPNet achieves VMAF errors within 2 points for over 87% of our test corpus, cf. approximately 61% with state-of-the-art methods. LiteVPNet's performance across various quality regions highlights its applicability for enhancing high-value content transport and streaming, enabling more energy-efficient, high-quality media experiences.
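
The two headline accuracy figures (mean VMAF error, and the share of clips within 2 points of the target) can be computed as below. This is a minimal sketch, assuming "within 2 points" means an absolute error of at most 2 VMAF points; `vmaf_error_summary` is a hypothetical helper, not part of LiteVPNet.

```python
from statistics import mean


def vmaf_error_summary(target_vmaf, achieved_vmaf, tolerance=2.0):
    """Return (mean absolute VMAF error, fraction of clips within `tolerance`).

    target_vmaf:   VMAF score requested for each clip.
    achieved_vmaf: VMAF measured after encoding each clip at the predicted QP.
    Assumption: "within 2 points" is read as |error| <= tolerance.
    """
    if len(target_vmaf) != len(achieved_vmaf) or not target_vmaf:
        raise ValueError("expected two non-empty sequences of equal length")
    errors = [abs(t - a) for t, a in zip(target_vmaf, achieved_vmaf)]
    within = sum(1 for e in errors if e <= tolerance) / len(errors)
    return mean(errors), within


# Toy values for illustration only, not results from the paper.
mae, share = vmaf_error_summary([90, 80, 70, 95], [89.5, 82.5, 70.8, 93.0])
print(f"mean error {mae:.2f}, within 2 points: {share:.0%}")
```

Reporting both numbers matters: a low mean error can hide a tail of clips that miss the target badly, which the "within 2 points" share exposes.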

An Empirical Study of Reducing AV1 Decoder Complexity and Energy Consumption via Encoder Parameter Tuning

Oct 14, 2025

Abstract: The widespread adoption of advanced video codecs such as AV1 is often hindered by their high decoding complexity, which poses a challenge for battery-constrained devices. While encoders can be configured to produce decoder-friendly bitstreams, estimating the decoding complexity and energy overhead for a given video is non-trivial. In this study, we systematically analyse the impact of disabling various coding tools and adjusting coding parameters in two AV1 encoders, libaom-av1 and SVT-AV1. Using system-level energy measurement tools such as RAPL (Running Average Power Limit) and Intel SoC Watch (integrated with the VTune profiler), we quantify the resulting trade-offs between decoding complexity, energy consumption, and compression efficiency when decoding a bitstream. Our results demonstrate that specific encoder configurations can substantially reduce decoding complexity with minimal perceptual quality degradation. For libaom-av1, disabling CDEF, an in-loop filter, reduces decoding cycles by 10% on average. For SVT-AV1, the built-in fast-decode=2 preset achieves a more substantial 24% reduction in decoding cycles. These findings provide strategies for content providers to lower the energy footprint of AV1 video streaming.
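
A "mean reduction in decoding cycles" can be aggregated in more than one way, and the choice changes the number. The sketch below, assuming cycle counts have already been collected per video (e.g. from a profiler), contrasts the mean of per-video reductions with a pooled reduction over the total. Which definition the study uses is not stated here, and both helper names are hypothetical.

```python
def mean_relative_reduction(baseline_cycles, tuned_cycles):
    """Mean of per-video percentage reductions in decoding cycles.

    Each video contributes equally, regardless of its absolute cycle count.
    """
    if len(baseline_cycles) != len(tuned_cycles) or not baseline_cycles:
        raise ValueError("expected two non-empty sequences of equal length")
    reductions = [100.0 * (b - t) / b for b, t in zip(baseline_cycles, tuned_cycles)]
    return sum(reductions) / len(reductions)


def pooled_reduction(baseline_cycles, tuned_cycles):
    """Percentage reduction of the total cycle count over the whole test set.

    Long or complex videos dominate this figure.
    """
    total_base = sum(baseline_cycles)
    return 100.0 * (total_base - sum(tuned_cycles)) / total_base
```

For two videos whose cycle counts drop from 100 to 90 and from 200 to 150, the per-video mean is 17.5% while the pooled figure is 20%, so the aggregation rule should always be reported alongside the percentage.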

An Efficient Quality Metric for Video Frame Interpolation Based on Motion-Field Divergence

Oct 01, 2025

Abstract: Video frame interpolation is a fundamental tool for temporal video enhancement, but existing quality metrics struggle to evaluate the perceptual impact of interpolation artefacts effectively. Metrics like PSNR, SSIM and LPIPS ignore temporal coherence. State-of-the-art quality metrics tailored to video frame interpolation, like FloLPIPS, have been developed but suffer from computational inefficiency that limits their practical application. We present $\text{PSNR}_{\text{DIV}}$, a novel full-reference quality metric that enhances PSNR through motion divergence weighting, a technique adapted from archival film restoration, where it was developed to detect temporal inconsistencies. Our approach highlights singularities in motion fields, which are then used to weight image errors. Evaluation on the BVI-VFI dataset (180 sequences across multiple frame rates, resolutions and interpolation methods) shows that $\text{PSNR}_{\text{DIV}}$ achieves statistically significant improvements: +0.09 Pearson Linear Correlation Coefficient over FloLPIPS, while being 2.5$\times$ faster and using 4$\times$ less memory. Performance remains consistent across all content categories and is robust to the motion estimator used. The efficiency and accuracy of $\text{PSNR}_{\text{DIV}}$ enable fast quality evaluation and practical use as a loss function for training neural networks for video frame interpolation tasks. An implementation of our metric is available at www.github.com/conalld/psnr-div.
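
The idea of weighting image errors by motion-field divergence can be sketched as follows. This is an illustrative approximation, not the published $\text{PSNR}_{\text{DIV}}$ formula (the linked repository holds the actual implementation): the weight map `1 + alpha * |div|` and the parameter `alpha` are assumptions, and the motion field `(u, v)` is taken as given from any motion estimator.

```python
import numpy as np


def motion_divergence(u, v):
    """Divergence du/dx + dv/dy of a dense motion field.

    u, v: horizontal and vertical motion components (rows = y, columns = x).
    """
    u = np.asarray(u, dtype=np.float64)
    v = np.asarray(v, dtype=np.float64)
    return np.gradient(u, axis=1) + np.gradient(v, axis=0)


def psnr_div_sketch(reference, interpolated, u, v, alpha=1.0, peak=255.0):
    """Divergence-weighted PSNR (illustrative weighting, not the published formula).

    Pixels where the motion field diverges or converges (|div| large) get a
    larger weight, so interpolation errors there cost more.
    """
    ref = np.asarray(reference, dtype=np.float64)
    out = np.asarray(interpolated, dtype=np.float64)
    weights = 1.0 + alpha * np.abs(motion_divergence(u, v))  # assumed weight map
    weighted_mse = np.sum(weights * (ref - out) ** 2) / np.sum(weights)
    if weighted_mse == 0:
        return float("inf")
    return float(10.0 * np.log10(peak ** 2 / weighted_mse))
```

With a zero motion field every weight is 1 and the sketch reduces to ordinary PSNR, which is a convenient sanity check.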

A new dataset and comparison for multi-camera frame synthesis

Aug 12, 2025

Abstract: Many methods exist for frame synthesis in image sequences; they can be broadly categorised into frame interpolation and view synthesis techniques. Fundamentally, both frame interpolation and view synthesis tackle the same task: interpolating a frame given surrounding frames in time or space. However, most frame interpolation datasets focus on temporal aspects, with single cameras moving through time and space, while view synthesis datasets are typically biased toward stereoscopic depth estimation use cases. This makes direct comparison between view synthesis and frame interpolation methods challenging. In this paper, we develop a novel multi-camera dataset using a custom-built dense linear camera array to enable fair comparison between these approaches. We evaluate classical and deep learning frame interpolators against a view synthesis method (3D Gaussian Splatting) for the task of view in-betweening. Our results reveal that deep learning methods do not significantly outperform classical methods on real image data, with 3D Gaussian Splatting actually underperforming frame interpolators by as much as 3.5 dB PSNR. However, in synthetic scenes the situation reverses: 3D Gaussian Splatting outperforms frame interpolation algorithms by almost 5 dB PSNR at a 95% confidence level.
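
A claim such as "almost 5 dB PSNR at a 95% confidence level" rests on an interval estimate of a paired difference. The sketch below computes the mean per-scene PSNR difference between two methods with an approximate 95% interval. It assumes a normal approximation (z = 1.96) rather than whichever test the authors used; a Student-t quantile would be more appropriate when only a few scenes are available. `paired_diff_ci95` is a hypothetical helper.

```python
import math
from statistics import mean, stdev


def paired_diff_ci95(psnr_a, psnr_b):
    """Mean PSNR difference (a - b) over paired scenes, with an approximate 95% CI.

    Normal approximation: half-width = 1.96 * sample std / sqrt(n).
    """
    if len(psnr_a) != len(psnr_b) or len(psnr_a) < 2:
        raise ValueError("need at least two paired measurements")
    diffs = [a - b for a, b in zip(psnr_a, psnr_b)]
    m = mean(diffs)
    half_width = 1.96 * stdev(diffs) / math.sqrt(len(diffs))
    return m, (m - half_width, m + half_width)
```

Pairing by scene removes scene-to-scene difficulty from the comparison, which is why the interval is built on differences rather than on the two methods' PSNR values separately.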

Efficient motion-based metrics for video frame interpolation

Aug 12, 2025

Abstract: Video frame interpolation (VFI) generates intermediate frames between consecutive frames of a video sequence. Although advanced frame interpolation algorithms have received increased attention in recent years, assessing the perceptual quality of interpolated content remains an ongoing area of research. In this paper, we investigate simple ways to process motion fields for use as video quality metrics for evaluating frame interpolation algorithms. We evaluate these quality metrics using the BVI-VFI dataset, which contains perceptual scores measured for interpolated sequences. From our investigation, we propose a motion metric based on measuring the divergence of motion fields. This metric correlates reasonably well with these perceptual scores (PLCC = 0.51) and is more computationally efficient (2.7× speedup) than FloLPIPS (a well-known motion-based metric). We then use our proposed metrics to evaluate a range of state-of-the-art frame interpolation methods and find that our metrics tend to favour more perceptually pleasing interpolated frames that may not score highly in terms of PSNR or SSIM.
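
PLCC, the figure of merit quoted above, is the Pearson correlation between metric outputs and perceptual scores. A minimal plain-Python sketch follows; note that quality-assessment studies often fit a nonlinear (e.g. logistic) mapping before computing PLCC, which this sketch omits.

```python
import math


def pearson_plcc(metric_scores, subjective_scores):
    """Pearson linear correlation between metric outputs and perceptual scores."""
    n = len(metric_scores)
    if n != len(subjective_scores) or n < 2:
        raise ValueError("need at least two paired scores")
    mx = sum(metric_scores) / n
    my = sum(subjective_scores) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(metric_scores, subjective_scores))
    sx = math.sqrt(sum((x - mx) ** 2 for x in metric_scores))
    sy = math.sqrt(sum((y - my) ** 2 for y in subjective_scores))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / (sx * sy)
```

A value of 1 or -1 means a perfectly linear relationship, so the sign depends on whether the metric rises or falls with perceived quality; the magnitude is what a figure such as 0.51 summarises.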

Demystifying the use of Compression in Virtual Production

Nov 01, 2024

Abstract: Virtual Production (VP) technologies have continued to improve the flexibility of on-set filming and enhance the live concert experience. The core technology of VP relies on high-resolution, high-brightness LED panels to play back/render video content. There are a number of technical challenges to effective deployment, e.g. image tile synchronisation across the panels, cross-panel colour balancing, and compensating for colour fluctuations due to changes in camera angle. Given this complexity and the potential for quality degradation, the industry prefers "pristine" or losslessly compressed source material for displays, which requires significant storage and bandwidth. Modern lossy compression standards like AV1 or H.265 could maintain the same quality at significantly lower bitrates and resource demands. There is as yet no agreed methodology for assessing the impact of these standards on quality when the VP scene is recorded in-camera. We present a methodology to assess this impact by comparing lossless and lossy compressed footage displayed through VP screens and recorded in-camera. We assess the quality impact of HAP/NotchLC/Daniel2 and AV1/HEVC/H.264 compression at bitrates from 2 Mb/s to 2000 Mb/s with various GOP sizes. Several perceptual quality metrics are then used to automatically evaluate in-camera picture quality, referencing the original uncompressed source content through the LED wall. Our results show that we can achieve the same quality with hybrid codecs as with intermediate encoders, with bitrate and storage requirements orders of magnitude lower.
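
To put the 2 Mb/s to 2000 Mb/s range in perspective, the raw data rate of uncompressed video and the storage implied by a given bitrate follow from simple arithmetic. The default resolution, frame rate, bit depth and chroma format below are illustrative assumptions, not the paper's test conditions, and both helper names are hypothetical.

```python
# Average samples per pixel for common chroma subsampling schemes.
SAMPLES_PER_PIXEL = {"4:4:4": 3.0, "4:2:2": 2.0, "4:2:0": 1.5}


def uncompressed_rate_mbps(width, height, fps, bit_depth=10, chroma="4:2:2"):
    """Raw video data rate in Mb/s (10^6 bits per second), ignoring headers."""
    bits_per_second = width * height * SAMPLES_PER_PIXEL[chroma] * bit_depth * fps
    return bits_per_second / 1e6


def storage_gb(bitrate_mbps, duration_s):
    """Storage in GB (10^9 bytes) for a stream at a constant bitrate."""
    return bitrate_mbps * 1e6 * duration_s / 8 / 1e9
```

For UHD (3840×2160) at 25 fps, 10-bit 4:2:2, `uncompressed_rate_mbps` gives about 4147 Mb/s, roughly twice the top of the tested bitrate range, while one minute at 2000 Mb/s already occupies 15 GB.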

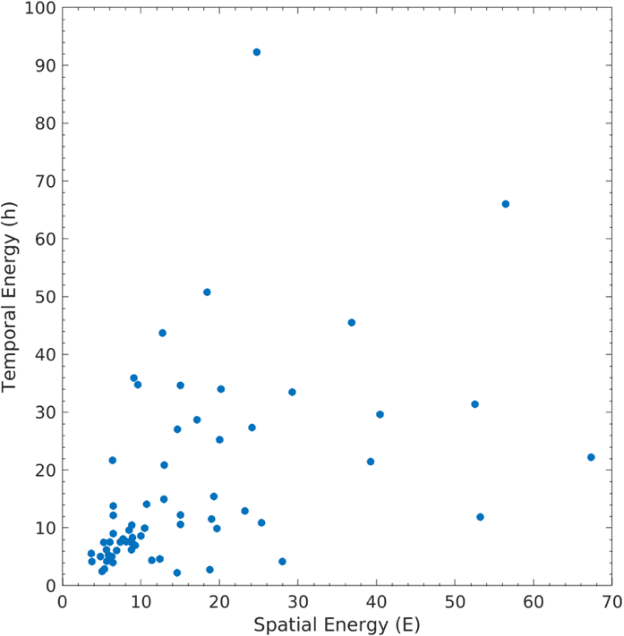

Predicting total time to compress a video corpus using online inference systems

Oct 23, 2024

Abstract: Predicting the computational cost of compressing/transcoding clips in a video corpus is important for resource management of cloud services and VOD (Video On Demand) providers. Currently, customers of cloud video services are unaware of the cost of transcoding their files until the task is completed. Previous work concentrated on predicting per-clip compression time, and thus estimating the cost of video compression. In this work, we propose new Machine Learning (ML) systems that predict cost for the entire corpus instead. This is a more appropriate goal, since users are interested not in per-clip cost but in the cost for the whole corpus. We evaluate our systems with respect to two video codecs (x264, x265) and a novel high-quality video corpus. We find that aggregate time prediction for a video corpus is more than two times more accurate than using per-clip predictions. Furthermore, we present an online inference framework in which we update the ML models as files are processed. Accounting for video compute overhead and choosing an appropriate ML predictor for each fraction of the corpus completed yields a prediction error of less than 5%. This is approximately two times better than previous work, which proposed generalised predictors.
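
Two ideas from this abstract can be illustrated in a few lines: errors in individual clip predictions partly cancel when summed over a corpus, and an online estimate can replace predictions with measured times as clips finish. The sketch below is a minimal illustration under those assumptions; the helper names are hypothetical and no ML model is included.

```python
def per_clip_vs_aggregate_error(predicted, actual):
    """Mean per-clip relative error vs relative error of the corpus total."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("expected two non-empty sequences of equal length")
    per_clip = sum(abs(p - a) / a for p, a in zip(predicted, actual)) / len(actual)
    aggregate = abs(sum(predicted) - sum(actual)) / sum(actual)
    return per_clip, aggregate


def predicted_total_time(completed_times, remaining_predictions):
    """Online corpus estimate: measured time of finished clips plus
    predicted time of clips not yet processed."""
    return sum(completed_times) + sum(remaining_predictions)


def relative_error(estimate, actual):
    return abs(estimate - actual) / actual
```

For example, predictions of 12 s and 8 s for two clips that each take 10 s are 20% off individually, yet the corpus total of 20 s is exact. As more clips complete, a larger share of `predicted_total_time` comes from measurements, so its error can only come from the remaining fraction.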

A Sharpness Based Loss Function for Removing Out-of-Focus Blur

Aug 12, 2024

Abstract: The success of modern Deep Neural Network (DNN) approaches can be attributed to the use of complex optimization criteria beyond standard losses such as mean absolute error (MAE) or mean squared error (MSE). In this work, we propose a novel method that uses a no-reference sharpness metric Q, introduced by Zhu and Milanfar, for removing out-of-focus blur from images. We also introduce a novel dataset of real-world out-of-focus images for assessing restoration models. Our fine-tuned method produces images with a 7.5% increase in perceptual quality (LPIPS) compared to a standard model trained only on MAE. Furthermore, we observe a 6.7% increase in Q (reflecting sharper restorations) and a 7.25% increase in PSNR over most state-of-the-art (SOTA) methods.
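
The Zhu and Milanfar metric Q is built from the singular values $s_1 \ge s_2$ of local gradient matrices: each anisotropic patch scores $s_1 (s_1 - s_2)/(s_1 + s_2)$, and Q averages these scores. The NumPy sketch below simplifies patch selection to a fixed coherence threshold (an assumption; the original derives its threshold statistically) and shows one hypothetical way to combine Q with MAE. A real training loss would need a differentiable implementation in an autodiff framework, and the weighting `lam` is illustrative.

```python
import numpy as np


def q_metric_sketch(image, patch=8, coherence_threshold=0.3):
    """Simplified Zhu-Milanfar style sharpness score Q (higher = sharper).

    For each non-overlapping patch, the gradient matrix G (pixels x 2) is
    decomposed by SVD; patches whose coherence exceeds the (assumed, fixed)
    threshold contribute s1 * coherence, and Q is their mean.
    """
    img = np.asarray(image, dtype=np.float64)
    gy, gx = np.gradient(img)  # derivatives along rows (y) and columns (x)
    h, w = img.shape
    scores = []
    for r in range(0, h - patch + 1, patch):
        for c in range(0, w - patch + 1, patch):
            g = np.stack([gx[r:r + patch, c:c + patch].ravel(),
                          gy[r:r + patch, c:c + patch].ravel()], axis=1)
            s1, s2 = np.linalg.svd(g, compute_uv=False)  # s1 >= s2
            if s1 + s2 == 0:
                continue  # flat patch: no gradient information
            coherence = (s1 - s2) / (s1 + s2)
            if coherence > coherence_threshold:
                scores.append(s1 * coherence)
    return float(np.mean(scores)) if scores else 0.0


def sharpness_regularised_loss(output, target, lam=0.01):
    """Hypothetical objective: MAE minus a weighted sharpness reward."""
    out = np.asarray(output, dtype=np.float64)
    tgt = np.asarray(target, dtype=np.float64)
    return float(np.mean(np.abs(out - tgt))) - lam * q_metric_sketch(out)
```

Subtracting Q rewards restorations with strong, coherent edges, which MAE alone tends to smooth away; `lam` controls how far sharpness may trade against pixel fidelity.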

A Dictionary Based Approach for Removing Out-of-Focus Blur

Jun 17, 2024

Abstract: The field of image deblurring has seen tremendous progress with the rise of deep learning models. These models, albeit effective, are computationally expensive and energy-consuming. Dictionary-based learning approaches have shown promising results in image denoising and Single Image Super-Resolution. We propose an extension of the Rapid and Accurate Image Super-Resolution (RAISR) algorithm introduced by Isidoro, Romano and Milanfar for the task of out-of-focus blur removal. We define a sharpness quality measure which aligns well with the perceptual quality of an image. A metric-based blending strategy inspired by asset allocation management is also proposed. Our method demonstrates an average increase of approximately 13% (PSNR) and 10% (SSIM) compared to popular deblurring methods. Furthermore, our blending scheme curtails ringing artefacts after restoration.
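
RAISR assigns each patch to a bucket by hashing its local gradient structure (angle, strength, coherence) and then applies a filter learned per bucket by least squares. The sketch below shows that hashing and a per-bucket least-squares fit in NumPy. The bin counts and thresholds are placeholder choices, the structure tensor is unweighted (RAISR weights it), and neither the proposed sharpness measure nor the asset-allocation blending is modelled.

```python
import numpy as np


def raisr_hash(patch, n_angle=24, strength_edges=(10.0, 40.0),
               coherence_edges=(0.25, 0.5)):
    """Return (angle_bin, strength_bin, coherence_bin) for one image patch.

    Bin counts and thresholds are placeholders; the structure tensor is
    unweighted here for brevity.
    """
    p = np.asarray(patch, dtype=np.float64)
    gy, gx = np.gradient(p)
    gx, gy = gx.ravel(), gy.ravel()
    tensor = np.array([[gx @ gx, gx @ gy],
                       [gx @ gy, gy @ gy]])
    evals, evecs = np.linalg.eigh(tensor)      # eigenvalues in ascending order
    lam1, lam2 = max(evals[1], 0.0), max(evals[0], 0.0)
    v1 = evecs[:, 1]                            # dominant gradient direction
    angle = np.arctan2(v1[1], v1[0]) % np.pi    # orientation is modulo pi
    angle_bin = min(int(angle / np.pi * n_angle), n_angle - 1)
    s1, s2 = np.sqrt(lam1), np.sqrt(lam2)
    coherence = (s1 - s2) / (s1 + s2) if s1 + s2 > 0 else 0.0
    strength_bin = int(np.searchsorted(strength_edges, s1))
    coherence_bin = int(np.searchsorted(coherence_edges, coherence))
    return angle_bin, strength_bin, coherence_bin


def learn_bucket_filter(blurred_patches, sharp_pixels):
    """Least-squares filter mapping flattened blurred patches to sharp pixels."""
    a = np.asarray(blurred_patches, dtype=np.float64).reshape(len(blurred_patches), -1)
    b = np.asarray(sharp_pixels, dtype=np.float64)
    h, *_ = np.linalg.lstsq(a, b, rcond=None)
    return h
```

During training, each (blurred patch, sharp pixel) pair would be routed to the bucket given by `raisr_hash`, and `learn_bucket_filter` fitted once per bucket; at inference, a pixel is restored by a single dot product with its bucket's filter, which is what keeps this family of methods cheap.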

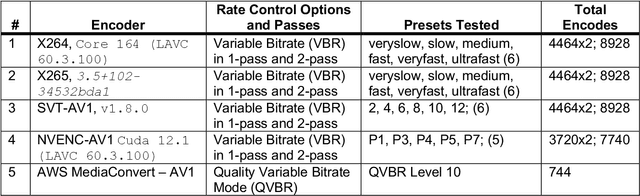

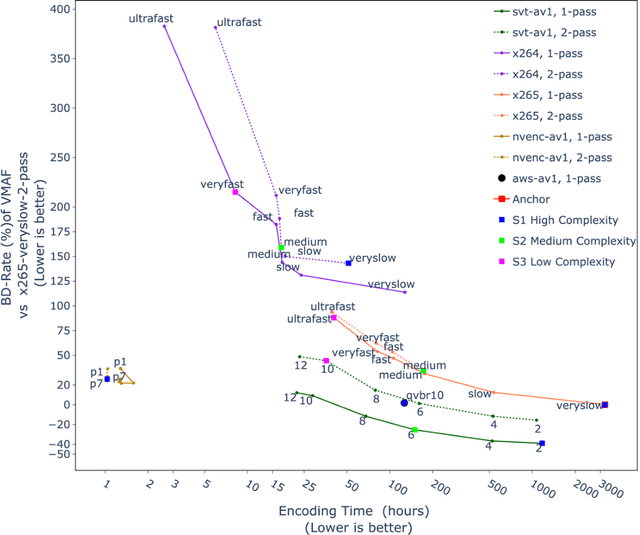

Unravelling the Power of Single-Pass Look-Ahead in Modern Codecs for Optimized Transcoding Deployment

Apr 08, 2024

Abstract: Modern video encoders have evolved into sophisticated pieces of software in which various coding tools interact with each other. In the past, single-pass encoding was not considered for Video-On-Demand (VOD) use cases. In this work, we evaluate production-ready encoders for H.264 (x264), H.265 (HEVC) and AV1 (SVT-AV1), along with direct comparisons to the latest AV1 encoder inside NVIDIA GPUs (40 series) and AWS MediaConvert's AV1 implementation. Our experimental results demonstrate that single-pass encoding inside modern encoder implementations can deliver very good quality at a reasonable compute cost. The results are presented as three different scenarios targeting High, Medium, and Low complexity, accounting for quality, bitrate and compute load. Finally, a set of recommendations is presented to help end-users decide which encoder/preset combination is best suited to their use case.
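
The three complexity scenarios amount to trading quality against bitrate and compute. One hedged way to turn per-configuration measurements into a recommendation is to discard dominated encoder/preset configurations and then pick the best survivor within a compute budget. The `EncodeResult` fields, the budget rule and the tie-break on bitrate are assumptions for illustration, not the paper's recommendation procedure.

```python
from typing import NamedTuple


class EncodeResult(NamedTuple):
    name: str            # e.g. an encoder/preset label (hypothetical)
    quality: float       # e.g. mean VMAF; higher is better
    bitrate_kbps: float  # lower is better
    compute_s: float     # encode time; lower is better


def dominates(a, b):
    """True if `a` is no worse than `b` on every axis and better on at least one."""
    no_worse = (a.quality >= b.quality and a.bitrate_kbps <= b.bitrate_kbps
                and a.compute_s <= b.compute_s)
    better = (a.quality > b.quality or a.bitrate_kbps < b.bitrate_kbps
              or a.compute_s < b.compute_s)
    return no_worse and better


def pareto_front(results):
    """Configurations not dominated by any other configuration."""
    return [r for r in results if not any(dominates(o, r) for o in results)]


def best_within_budget(results, max_compute_s):
    """Highest-quality non-dominated configuration that fits the compute budget."""
    feasible = [r for r in pareto_front(results) if r.compute_s <= max_compute_s]
    return max(feasible, key=lambda r: (r.quality, -r.bitrate_kbps), default=None)
```

Filtering to the Pareto front first guarantees that a recommended configuration is never beaten on all three axes at once; the budget then encodes which complexity scenario (High, Medium or Low) the user is in.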
