An Fu

Aeroengine performance prediction using a physical-embedded data-driven method

Jun 29, 2024Abstract:Accurate and efficient prediction of aeroengine performance is of paramount importance for engine design, maintenance, and optimization endeavours. However, existing methodologies often struggle to strike an optimal balance among predictive accuracy, computational efficiency, modelling complexity, and data dependency. To address these challenges, we propose a strategy that synergistically combines domain knowledge from both the aeroengine and neural network realms to enable real-time prediction of engine performance parameters. Leveraging aeroengine domain knowledge, we judiciously design the network structure and regulate the internal information flow. Concurrently, drawing upon neural network domain expertise, we devise four distinct feature fusion methods and introduce an innovative loss function formulation. To rigorously evaluate the effectiveness and robustness of our proposed strategy, we conduct comprehensive validation across two distinct datasets. The empirical results demonstrate :(1) the evident advantages of our tailored loss function; (2) our model's ability to maintain equal or superior performance with a reduced parameter count; (3) our model's reduced data dependency compared to generalized neural network architectures; (4)Our model is more interpretable than traditional black box machine learning methods.

PanGu-Coder2: Boosting Large Language Models for Code with Ranking Feedback

Jul 27, 2023

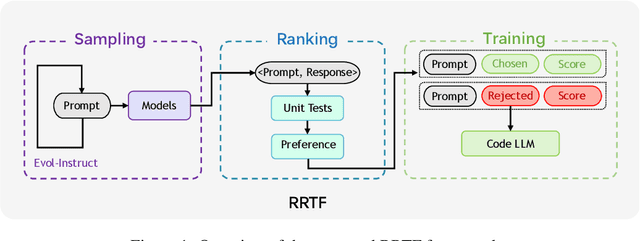

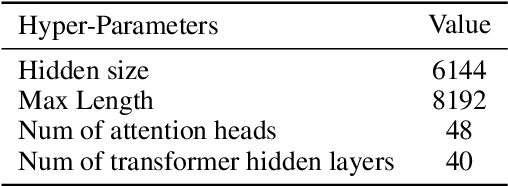

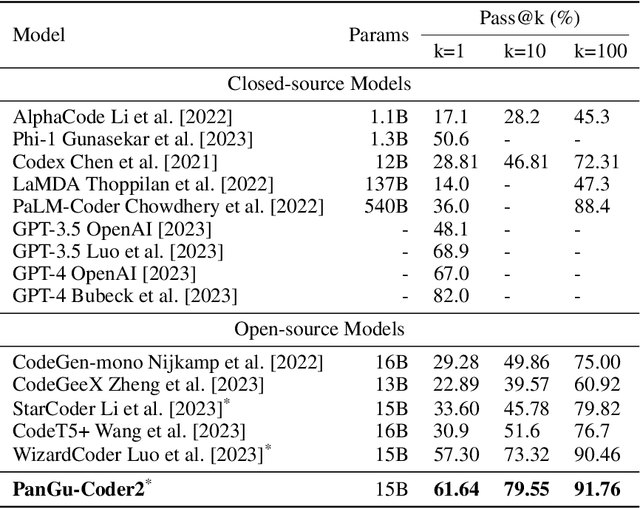

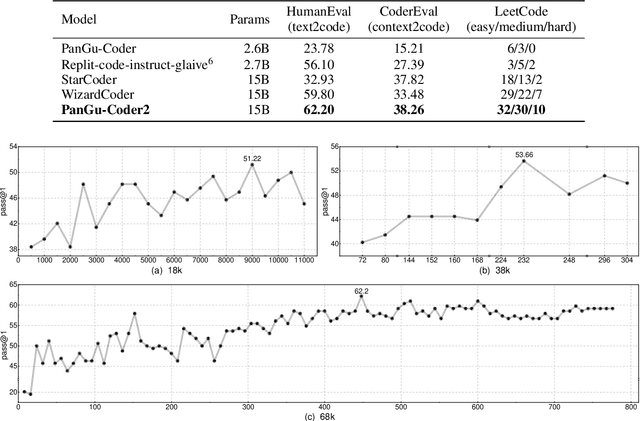

Abstract:Large Language Models for Code (Code LLM) are flourishing. New and powerful models are released on a weekly basis, demonstrating remarkable performance on the code generation task. Various approaches have been proposed to boost the code generation performance of pre-trained Code LLMs, such as supervised fine-tuning, instruction tuning, reinforcement learning, etc. In this paper, we propose a novel RRTF (Rank Responses to align Test&Teacher Feedback) framework, which can effectively and efficiently boost pre-trained large language models for code generation. Under this framework, we present PanGu-Coder2, which achieves 62.20% pass@1 on the OpenAI HumanEval benchmark. Furthermore, through an extensive evaluation on CoderEval and LeetCode benchmarks, we show that PanGu-Coder2 consistently outperforms all previous Code LLMs.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge