AmirArsalan Rajabi

Fair Bilevel Neural Network (FairBiNN): On Balancing fairness and accuracy via Stackelberg Equilibrium

Oct 21, 2024

Abstract: The persistent challenge of bias in machine learning models necessitates robust solutions to ensure parity and equal treatment across diverse groups, particularly in classification tasks. Current methods for mitigating bias often result in information loss and an inadequate balance between accuracy and fairness. To address this, we propose a novel methodology grounded in bilevel optimization principles. Our deep learning-based approach concurrently optimizes for both accuracy and fairness objectives and, under certain assumptions, provably achieves Pareto-optimal solutions while mitigating bias in the trained model. Theoretical analysis indicates that the upper bound on the loss incurred by this method is less than or equal to the loss of the Lagrangian approach, which involves adding a regularization term to the loss function. We demonstrate the efficacy of our model primarily on tabular datasets such as UCI Adult and Heritage Health. When benchmarked against state-of-the-art fairness methods, our model exhibits superior performance, advancing fairness-aware machine learning solutions and bridging the accuracy-fairness gap. The implementation of FairBiNN is available at https://github.com/yazdanimehdi/FairBiNN.
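For orientation, the contrast the abstract draws between the Lagrangian approach and a bilevel formulation can be sketched in generic notation. This is only an illustrative sketch, not the paper's exact formulation: the symbols $\theta_a$, $\theta_f$, $\lambda$, $\mathcal{L}_{\mathrm{acc}}$ and $\mathcal{L}_{\mathrm{fair}}$, and the choice of the accuracy objective as the leader, are assumptions introduced here.

```latex
% Lagrangian (regularization) approach: one set of parameters \theta,
% with the fairness loss added as a penalty weighted by an assumed \lambda \ge 0.
\min_{\theta} \; \mathcal{L}_{\mathrm{acc}}(\theta) + \lambda \, \mathcal{L}_{\mathrm{fair}}(\theta)

% Generic bilevel (Stackelberg) problem: the leader optimizes its objective
% while anticipating the follower's best response.
\min_{\theta_a} \; \mathcal{L}_{\mathrm{acc}}\bigl(\theta_a, \theta_f^{*}(\theta_a)\bigr)
\quad \text{s.t.} \quad
\theta_f^{*}(\theta_a) \in \arg\min_{\theta_f} \; \mathcal{L}_{\mathrm{fair}}(\theta_a, \theta_f)
```

In the first form a single weight trades the two losses off inside one objective; in the second, each objective has its own parameters and the solution is an equilibrium between them.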

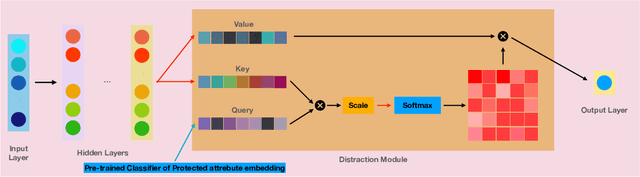

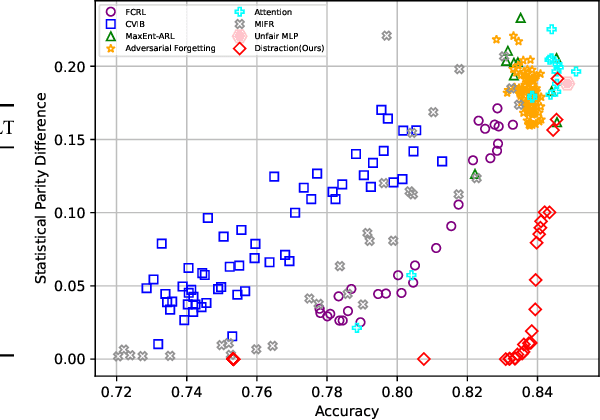

Distraction is All You Need for Fairness

Mar 15, 2022

Abstract: With the recent growth of artificial intelligence models and their expanding role in automated decision making, ensuring that these models are not biased is of vital importance. Abundant evidence suggests that these models can contain or even amplify bias that is present in the data on which they are trained or inherent to their objective functions and learning algorithms. In this paper, we propose a novel classification algorithm that improves fairness while maintaining the accuracy of the predictions. Utilizing the embedding layer of a classifier pre-trained on the protected attributes, the network uses an attention layer to distract the classification from depending on the protected attribute in its predictions. We compare our model with six state-of-the-art methodologies proposed in the fairness literature and show that it is superior to those methods in minimizing bias while maintaining accuracy.
