Alina Ermilova

Revealing Interconnections between Diseases: from Statistical Methods to Large Language Models

Oct 06, 2025

Abstract: Identifying disease interconnections through manual analysis of large-scale clinical data is labor-intensive, subjective, and prone to expert disagreement. While machine learning (ML) shows promise, three critical challenges remain: (1) selecting optimal methods from the vast ML landscape, (2) determining whether real-world clinical data (e.g., electronic health records, EHRs) or structured disease descriptions yield more reliable insights, (3) the lack of "ground truth," as some disease interconnections remain unexplored in medicine. Large language models (LLMs) demonstrate broad utility, yet they often lack specialized medical knowledge. To address these gaps, we conduct a systematic evaluation of seven approaches for uncovering disease relationships based on two data sources: (i) sequences of ICD-10 codes from MIMIC-IV EHRs and (ii) the full set of ICD-10 codes, both with and without textual descriptions. Our framework integrates the following: (i) a statistical co-occurrence analysis and a masked language modeling (MLM) approach using real clinical data; (ii) domain-specific BERT variants (Med-BERT and BioClinicalBERT); (iii) a general-purpose BERT and document retrieval; and (iv) four LLMs (Mistral, DeepSeek, Qwen, and YandexGPT). Our graph-based comparison of the obtained interconnection matrices shows that the LLM-based approach produces interconnections with the lowest diversity of ICD code connections to different diseases compared to other methods, including text-based and domain-based approaches. This suggests an important implication: LLMs have limited potential for discovering new interconnections. In the absence of ground truth databases for medical interconnections between ICD codes, our results constitute a valuable medical disease ontology that can serve as a foundational resource for future clinical research and artificial intelligence applications in healthcare.
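As a rough illustration of what a statistical co-occurrence analysis over ICD-10 code sequences might look like, the sketch below scores each code pair by lift (observed joint frequency divided by the frequency expected under independence). The abstract does not specify which statistic the authors use, so the lift measure, the function name `cooccurrence_lift`, and the toy records are all assumptions made for this example.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_lift(patient_codes):
    """Return {(a, b): lift} for code pairs that co-occur in at least one record.

    Lift is an assumed choice of statistic, not necessarily the paper's.
    """
    n = len(patient_codes)
    single = Counter()  # number of records containing each code
    pair = Counter()    # number of records containing each unordered pair
    for codes in patient_codes:
        unique = sorted(set(codes))  # ignore repeats within one record
        single.update(unique)
        pair.update(combinations(unique, 2))
    return {
        (a, b): (count / n) / ((single[a] / n) * (single[b] / n))
        for (a, b), count in pair.items()
    }


# Hypothetical toy records; real input would be per-patient ICD-10 sequences.
records = [
    ["E11", "I10", "N18"],
    ["E11", "I10"],
    ["J45"],
    ["I10", "N18"],
]
lift = cooccurrence_lift(records)
for codes, value in sorted(lift.items()):
    print(codes, round(value, 3))
```

A lift above 1 means the pair appears together more often than independence would predict. Arranging these scores in a square matrix gives one possible form of an interconnection matrix that could be compared across methods.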

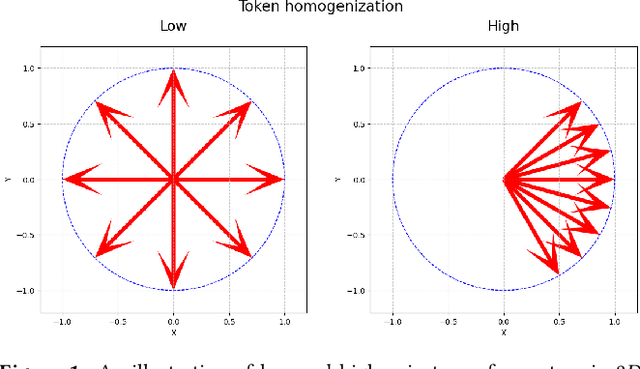

Token Homogenization under Positional Bias

Aug 23, 2025

Abstract: This paper investigates token homogenization, the convergence of token representations toward uniformity across transformer layers, and its relationship to positional bias in large language models. We empirically examine whether homogenization occurs and how positional bias amplifies this effect. Through layer-wise similarity analysis and controlled experiments, we demonstrate that tokens systematically lose distinctiveness during processing, particularly when biased toward extremal positions. Our findings confirm both the existence of homogenization and its dependence on positional attention mechanisms.
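One simple way to picture a layer-wise similarity analysis is to track the mean pairwise cosine similarity between token vectors at each layer: if the value climbs toward 1, the tokens are becoming more alike. The sketch below is only an assumed proxy for homogenization; the abstract does not name the similarity measure, and the function names and toy vectors are hypothetical.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def mean_pairwise_cosine(tokens):
    """Average cosine similarity over all unordered token pairs in one layer."""
    pairs = list(combinations(tokens, 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


# Hypothetical hidden states: three layers, three tokens, two dimensions each.
# In practice these would come from a transformer's per-layer outputs.
layers = [
    [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]],
    [[1.0, 0.2], [0.8, 1.0], [0.5, 0.5]],
    [[1.0, 0.9], [0.9, 1.0], [1.0, 1.0]],
]
scores = [mean_pairwise_cosine(layer) for layer in layers]
for index, score in enumerate(scores):
    print(f"layer {index}: mean pairwise cosine = {score:.3f}")
```

In this toy data the score rises with depth, which is the pattern homogenization would produce. Testing the link to positional bias would require comparing such curves across controlled input conditions, which this sketch does not attempt.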

Hallucination Detection in LLMs via Topological Divergence on Attention Graphs

Apr 14, 2025

Abstract: Hallucination, i.e., generating factually incorrect content, remains a critical challenge for large language models (LLMs). We introduce TOHA, a TOpology-based HAllucination detector in the RAG setting, which leverages a topological divergence metric to quantify the structural properties of graphs induced by attention matrices. Examining the topological divergence between prompt and response subgraphs reveals consistent patterns: higher divergence values in specific attention heads correlate with hallucinated outputs, independent of the dataset. Extensive experiments, including evaluation on question answering and data-to-text tasks, show that our approach achieves state-of-the-art or competitive results on several benchmarks, two of which were annotated by us and are being publicly released to facilitate further research. Beyond its strong in-domain performance, TOHA maintains remarkable domain transferability across multiple open-source LLMs. Our findings suggest that analyzing the topological structure of attention matrices can serve as an efficient and robust indicator of factual reliability in LLMs.

Hiding Backdoors within Event Sequence Data via Poisoning Attacks

Aug 20, 2023

Abstract: The financial industry relies on deep learning models for making important decisions. This adoption brings new dangers, as deep black-box models are known to be vulnerable to adversarial attacks. In computer vision, one can shape the output during inference by performing an adversarial attack called poisoning, which introduces a backdoor into the model during training. For sequences of a customer's financial transactions, inserting a backdoor is harder, as models operate over a more complex discrete space of sequences and systematic security checks take place. We provide a method to introduce concealed backdoors, creating vulnerabilities without altering the model's functionality on uncontaminated data. To achieve this, we replace a clean model with a poisoned one that is aware of the backdoor and utilizes this knowledge. Our hardest-to-uncover attacks include either an additional supervised step that detects poisoned data and is activated at test time, or well-hidden modifications of model weights. The experimental study provides insights into how these effects vary across different datasets, architectures, and model components. Alternative methods and baselines, such as distillation-type regularization, are also explored but found to be less efficient. Conducted on three open transaction datasets and architectures, including LSTM, CNN, and Transformer, our findings not only illuminate the vulnerabilities in contemporary models but can also drive the construction of more robust systems.
