Alexandre S. Simões

InstructRobot: A Model-Free Framework for Mapping Natural Language Instructions into Robot Motion

Feb 18, 2025Abstract:The ability to communicate with robots using natural language is a significant step forward in human-robot interaction. However, accurately translating verbal commands into physical actions is promising, but still presents challenges. Current approaches require large datasets to train the models and are limited to robots with a maximum of 6 degrees of freedom. To address these issues, we propose a framework called InstructRobot that maps natural language instructions into robot motion without requiring the construction of large datasets or prior knowledge of the robot's kinematics model. InstructRobot employs a reinforcement learning algorithm that enables joint learning of language representations and inverse kinematics model, simplifying the entire learning process. The proposed framework is validated using a complex robot with 26 revolute joints in object manipulation tasks, demonstrating its robustness and adaptability in realistic environments. The framework can be applied to any task or domain where datasets are scarce and difficult to create, making it an intuitive and accessible solution to the challenges of training robots using linguistic communication. Open source code for the InstructRobot framework and experiments can be accessed at https://github.com/icleveston/InstructRobot.

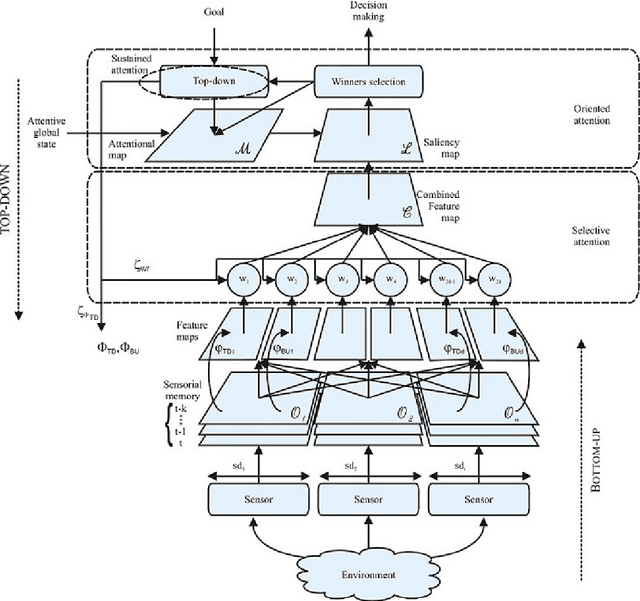

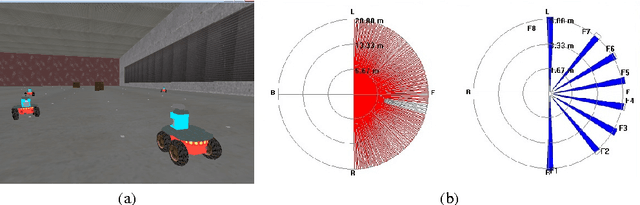

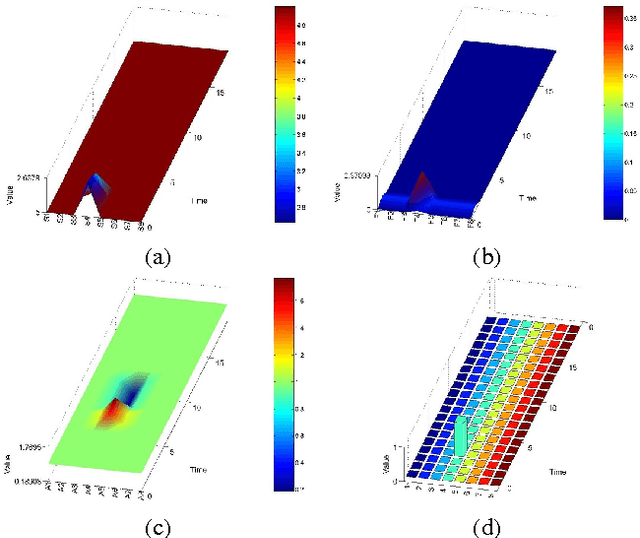

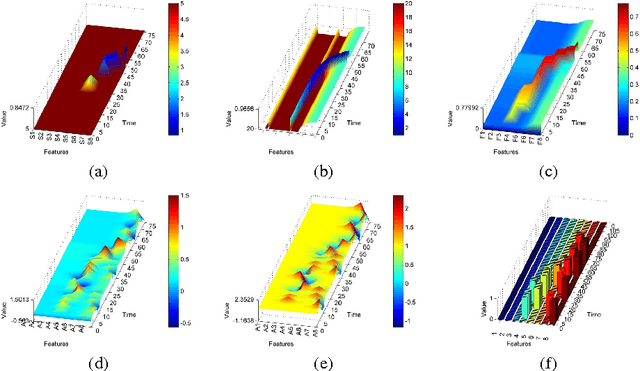

Top-down and Bottom-up Feature Combination for Multi-sensor Attentive Robots

Jul 22, 2013

Abstract:The information available to robots in real tasks is widely distributed both in time and space, requiring the agent to search for relevant data. In humans, that face the same problem when sounds, images and smells are presented to their sensors in a daily scene, a natural system is applied: Attention. As vision plays an important role in our routine, most research regarding attention has involved this sensorial system and the same has been replicated to the robotics field. However,most of the robotics tasks nowadays do not rely only in visual data, that are still costly. To allow the use of attentive concepts with other robotics sensors that are usually used in tasks such as navigation, self-localization, searching and mapping, a generic attentional model has been previously proposed. In this work, feature mapping functions were designed to build feature maps to this attentive model from data from range scanner and sonar sensors. Experiments were performed in a high fidelity simulated robotics environment and results have demonstrated the capability of the model on dealing with both salient stimuli and goal-driven attention over multiple features extracted from multiple sensors.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge