Alexander Friedrich

SCoT: Sense Clustering over Time: a tool for the analysis of lexical change

Mar 18, 2022

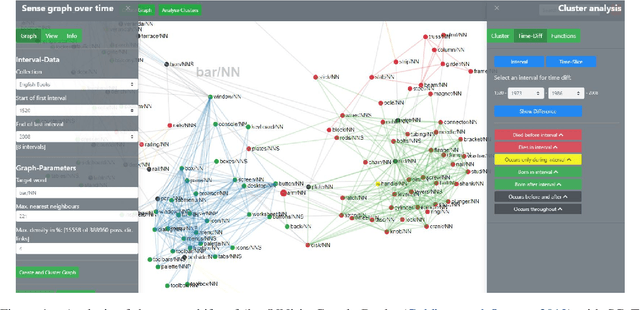

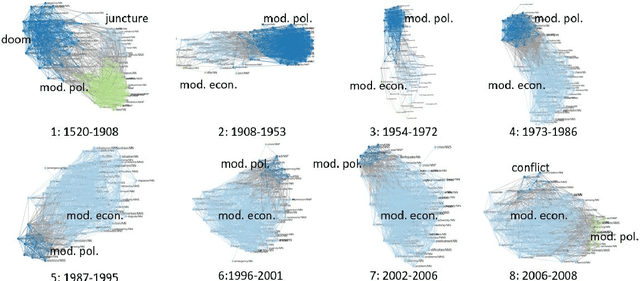

Abstract: We present Sense Clustering over Time (SCoT), a novel network-based tool for analysing lexical change. SCoT represents the meanings of a word as clusters of similar words and visualises their formation, change, and demise. There are two main approaches to the exploration of dynamic networks: the discrete approach compares a series of clustered graphs from separate points in time, whereas the continuous approach analyses the changes of one dynamic network over a time span. SCoT offers a new hybrid solution. First, it aggregates time-stamped documents into intervals and calculates one sense graph per discrete interval. Then, it merges the static graphs into a new type of dynamic semantic neighbourhood graph over time. The resulting sense clusters offer uniquely detailed insights into lexical change over continuous intervals with model transparency and provenance. SCoT has been successfully used in a European study on the changing meaning of 'crisis'.

* Update of https://aclanthology.org/2021.eacl-demos.23/
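
The two-step idea in the abstract (one graph per interval, then a merge that keeps provenance) can be illustrated with a minimal sketch. Everything here is an assumption for illustration only: the input format, the function names `merge_interval_graphs` and `cluster`, and the sample words are hypothetical, and connected components are used as a simple stand-in for whatever clustering SCoT actually applies.

```python
from collections import defaultdict


def merge_interval_graphs(interval_graphs):
    """Merge per-interval edge lists into one graph with per-interval provenance.

    interval_graphs maps an interval label to a list of
    (word_a, word_b, similarity) tuples (hypothetical input format).
    The result maps each undirected edge to {interval: similarity}.
    """
    merged = defaultdict(dict)
    for interval, edges in interval_graphs.items():
        for a, b, sim in edges:
            # Sort the endpoints so (a, b) and (b, a) are the same edge.
            merged[tuple(sorted((a, b)))][interval] = sim
    return dict(merged)


def cluster(merged):
    """Stand-in clustering: connected components of the merged graph."""
    neighbours = defaultdict(set)
    for a, b in merged:
        neighbours[a].add(b)
        neighbours[b].add(a)
    seen, clusters = set(), []
    for start in sorted(neighbours):
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbours[node] - component)
        seen |= component
        clusters.append(component)
    return clusters


# Made-up neighbourhood edges for two intervals.
graphs = {
    "1990-1999": [("emergency", "disaster", 0.8), ("recession", "downturn", 0.7)],
    "2000-2009": [("recession", "downturn", 0.9), ("recession", "crash", 0.6)],
}
merged = merge_interval_graphs(graphs)
clusters = cluster(merged)
```

Because every merged edge records the intervals in which it occurred, a cluster can be traced back to the time slices that support it, which is one way to read the abstract's claim about provenance.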

Embodied Neuromorphic Vision with Event-Driven Random Backpropagation

May 06, 2019

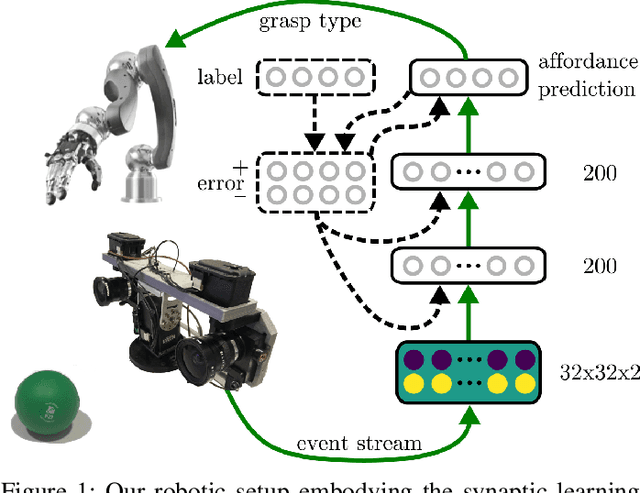

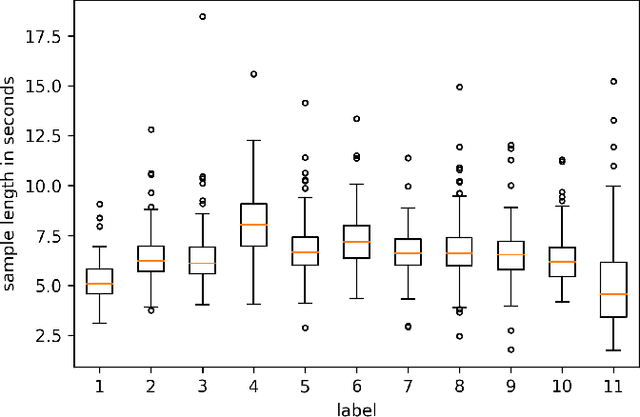

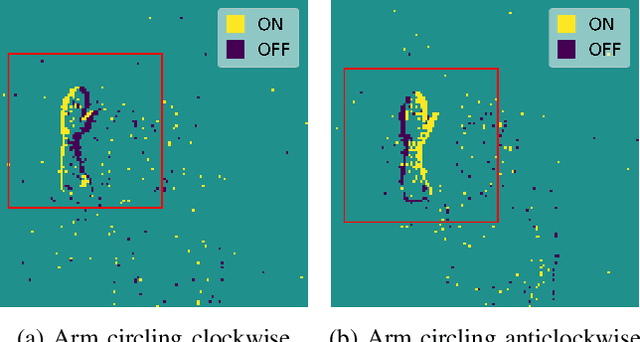

Abstract: Spike-based communication between biological neurons is sparse and unreliable. This enables the brain to process visual information from the eyes efficiently. Taking inspiration from biology, artificial spiking neural networks coupled with silicon retinas attempt to model these computations. Recent findings in machine learning allowed the derivation of a family of powerful synaptic plasticity rules approximating backpropagation for spiking networks. Are these rules capable of processing real-world visual sensory data? In this paper, we evaluate the performance of Event-Driven Random Back-Propagation (eRBP) at learning representations from event streams provided by a Dynamic Vision Sensor (DVS). First, we show that eRBP matches state-of-the-art performance on the DvsGesture dataset with the addition of a simple covert attention mechanism. By remapping visual receptive fields relative to the center of the motion, this attention mechanism provides translation invariance at low computational cost compared to convolutions. Second, we successfully integrate eRBP into a real robotic setup, where a robotic arm grasps objects according to detected visual affordances. In this setup, visual information is actively sensed by a DVS mounted on a robotic head performing microsaccadic eye movements. We show that our method classifies affordances within 100 ms after microsaccade onset, which is comparable to human performance reported in behavioral studies. Our results suggest that advances in neuromorphic technology and plasticity rules enable the development of autonomous robots operating at high speed and low energy consumption.
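
The covert attention idea, remapping coordinates relative to the center of the motion, can be sketched as follows. This is a hedged illustration, not the paper's implementation: the function name `recenter_events`, the use of the mean event position as the motion center, and the fixed square window are all assumptions.

```python
def recenter_events(events, window=32):
    """Remap event pixel addresses into a window centered on the motion.

    events is a list of (x, y) pixel addresses (hypothetical format).
    The motion center is assumed to be the mean event position.
    Events that fall outside the window after remapping are dropped.
    """
    if not events:
        return []
    cx = sum(x for x, _ in events) / len(events)
    cy = sum(y for _, y in events) / len(events)
    half = window // 2
    remapped = []
    for x, y in events:
        rx = int(round(x - cx)) + half
        ry = int(round(y - cy)) + half
        if 0 <= rx < window and 0 <= ry < window:
            remapped.append((rx, ry))
    return remapped


# The same motion pattern at two different sensor positions.
pattern = [(10, 10), (12, 10), (14, 14), (12, 14)]
shifted = [(x + 50, y + 30) for x, y in pattern]

centered_a = recenter_events(pattern, window=8)
centered_b = recenter_events(shifted, window=8)
```

In this sketch both inputs map to the same window coordinates, which is the translation invariance the abstract describes, obtained with one pass over the events rather than a convolution.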
