Alessio Montuoro

Data Distribution Shifts in (Industrial) Federated Learning as a Privacy Issue

Sep 20, 2024

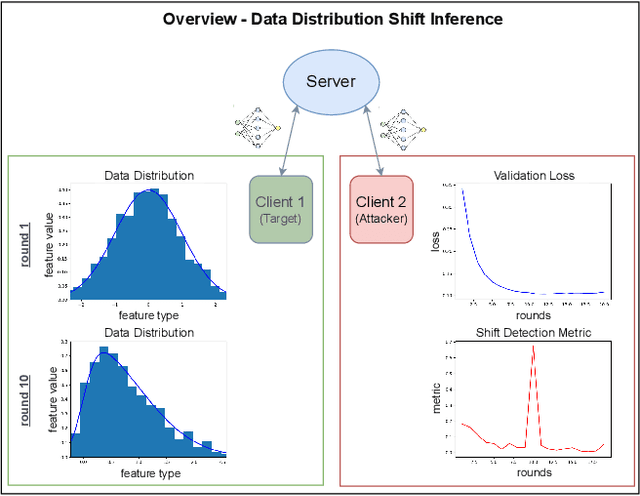

Abstract:We consider industrial federated learning, a collaboration between a small number of powerful, potentially competing industrial players, mediated by a third party aspiring to improve the service it provides to its customers. We argue that this configuration harbours covert privacy risks that do not arise in e.g. cross-device settings. Companies are very protective of their intellectual property and production processes. Information about changes to their production and the timing of which is to be kept private. We study a scenario in which one of the collaborators infers changes to their competitors' production by detecting potentially subtle temporal data distribution shifts. In this framing, a data distribution shift is always problematic, even if it has no negative effect on training convergence. Thus, our goal is to find means that allow the detection of distributional shifts better than customary evaluation metrics. Based on the assumption that even minor shifts translate into the collaboratively learned machine learning model, the attacker tracks the shared models' internal state with a selection of metrics from literature in order to pick up on relevant changes. In an empirical study on benchmark datasets, we show an honest-but-curious attacker to be capable of detecting subtle distributional shifts on other clients, in some cases long before they become obvious in evaluation.

HE-MAN -- Homomorphically Encrypted MAchine learning with oNnx models

Feb 16, 2023

Abstract:Machine learning (ML) algorithms are increasingly important for the success of products and services, especially considering the growing amount and availability of data. This also holds for areas handling sensitive data, e.g. applications processing medical data or facial images. However, people are reluctant to pass their personal sensitive data to a ML service provider. At the same time, service providers have a strong interest in protecting their intellectual property and therefore refrain from publicly sharing their ML model. Fully homomorphic encryption (FHE) is a promising technique to enable individuals using ML services without giving up privacy and protecting the ML model of service providers at the same time. Despite steady improvements, FHE is still hardly integrated in today's ML applications. We introduce HE-MAN, an open-source two-party machine learning toolset for privacy preserving inference with ONNX models and homomorphically encrypted data. Both the model and the input data do not have to be disclosed. HE-MAN abstracts cryptographic details away from the users, thus expertise in FHE is not required for either party. HE-MAN 's security relies on its underlying FHE schemes. For now, we integrate two different homomorphic encryption schemes, namely Concrete and TenSEAL. Compared to prior work, HE-MAN supports a broad range of ML models in ONNX format out of the box without sacrificing accuracy. We evaluate the performance of our implementation on different network architectures classifying handwritten digits and performing face recognition and report accuracy and latency of the homomorphically encrypted inference. Cryptographic parameters are automatically derived by the tools. We show that the accuracy of HE-MAN is on par with models using plaintext input while inference latency is several orders of magnitude higher compared to the plaintext case.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge