Data Distribution Shifts in (Industrial) Federated Learning as a Privacy Issue


Sep 20, 2024

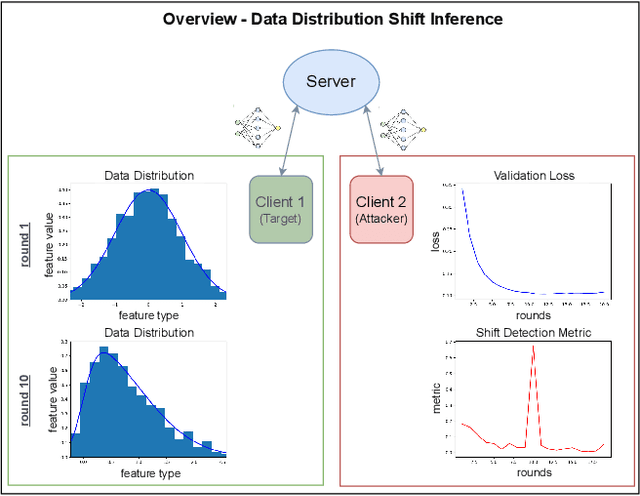

We consider industrial federated learning, a collaboration between a small number of powerful, potentially competing industrial players, mediated by a third party aspiring to improve the service it provides to its customers. We argue that this configuration harbours covert privacy risks that do not arise in, e.g., cross-device settings. Companies are very protective of their intellectual property and production processes. Information about changes to their production, and about the timing of those changes, is to be kept private. We study a scenario in which one of the collaborators infers changes to their competitors' production by detecting potentially subtle temporal data distribution shifts. In this framing, a data distribution shift is always problematic, even if it has no negative effect on training convergence. Thus, our goal is to find means of detecting distributional shifts better than customary evaluation metrics do. Based on the assumption that even minor shifts translate into the collaboratively learned machine learning model, the attacker tracks the shared models' internal state with a selection of metrics from the literature in order to pick up on relevant changes. In an empirical study on benchmark datasets, we show that an honest-but-curious attacker is capable of detecting subtle distributional shifts on other clients, in some cases long before they become obvious in evaluation.
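The idea of tracking the shared model's internal state across rounds can be illustrated with a minimal sketch. It is not the method or the metric selection used in the paper: the L2 distance between consecutive global model snapshots and the trailing-window z-score test are assumptions chosen for illustration, as are the names `update_norms` and `flag_shifts` and the parameters `window` and `threshold`.

```python
import numpy as np


def update_norms(snapshots):
    """L2 distance between consecutive model snapshots.

    Each snapshot is a list of NumPy arrays (one per parameter tensor),
    as an honest-but-curious client might record after every round.
    """
    flat = [np.concatenate([np.ravel(p) for p in s]) for s in snapshots]
    return np.array([np.linalg.norm(b - a) for a, b in zip(flat, flat[1:])])


def flag_shifts(series, window=5, threshold=3.0):
    """Indices where a value deviates from the mean of the preceding
    `window` values by more than `threshold` standard deviations."""
    series = np.asarray(series, dtype=float)
    flagged = []
    for t in range(window, len(series)):
        ref = series[t - window:t]
        mu, sigma = ref.mean(), ref.std()
        if abs(series[t] - mu) > threshold * max(sigma, 1e-12):
            flagged.append(t)
    return flagged


# Synthetic demo (hypothetical data): small, regular updates with one
# unusually large update injected at transition index 10.
step_sizes = [0.10, 0.11, 0.10, 0.09, 0.10, 0.11, 0.10, 0.09, 0.10, 0.11,
              0.50, 0.10, 0.09, 0.10]
weights, bias = np.zeros((3, 3)), np.zeros(3)
snapshots = [[weights.copy(), bias.copy()]]
for s in step_sizes:
    weights[0, 0] += s  # move the model by exactly `s` in L2 distance
    snapshots.append([weights.copy(), bias.copy()])

flagged = flag_shifts(update_norms(snapshots))
print(flagged)
```

On this synthetic trace only the injected jump stands out against its trailing window. In a real setting the attacker would compare several such internal-state metrics against the usual evaluation metrics, which is the comparison the abstract describes.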
