Aina Ferrà

A topological classifier to characterize brain states: When shape matters more than variance

Mar 07, 2023

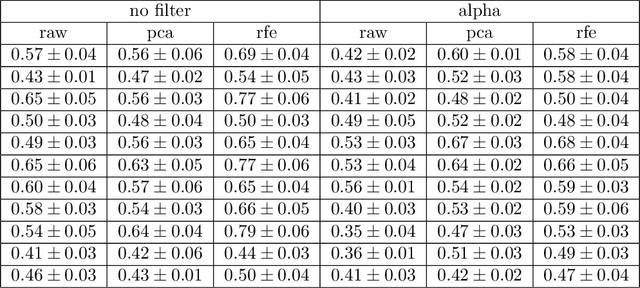

Abstract: Despite the remarkable accuracies attained by machine learning classifiers in separating complex datasets in a supervised fashion, the way most of them operate falls short of providing an informed intuition about the structure of the data and, more importantly, about the phenomena characterized by the given datasets. By contrast, topological data analysis (TDA) is devoted to studying the shape of data clouds by means of persistence descriptors and provides a quantitative characterization of specific topological features of the dataset under scrutiny. In this article we introduce a novel TDA-based classifier that works on the principle of assessing quantifiable changes in topological metrics caused by the addition of new input to a subset of data. We applied this classifier to a high-dimensional electroencephalographic (EEG) dataset recorded from eleven participants during a decision-making experiment in which three motivational states were induced through a manipulation of social pressure. After processing a band-pass filtered version of the EEG signals, we calculated silhouettes from the persistence diagrams associated with each motivational state, and classified unlabeled signals according to their impact on each reference silhouette. Our results show that, in addition to providing accuracies within the range of those of a nearest neighbour classifier, the TDA classifier provides formal intuition about the structure of the dataset as well as an estimate of its intrinsic dimension. Towards this end, we incorporated dimensionality reduction methods into our procedure and found that the accuracy of our TDA classifier is generally sensitive not to explained variance but rather to shape, contrary to what happens with most machine learning classifiers.
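The abstract describes the classifier at the level of its principle: build a persistence-based summary for each class, add the unlabeled sample to each class in turn, and pick the class whose summary changes least. The sketch below illustrates that principle under several assumptions that the abstract does not state: it uses only 0-dimensional homology of a Vietoris-Rips filtration (computed via single linkage), power-weighted silhouettes with p = 1, an L2 distance evaluated on a fixed grid, and a "smallest change wins" decision rule. The helper names and the toy 2-D point clouds are hypothetical stand-ins for band-pass filtered EEG samples.

```python
import math
from itertools import combinations


def h0_persistence(points):
    """Finite H0 bars of the Vietoris-Rips filtration of a point cloud.

    Every point is born at scale 0; a component dies at the length of the
    edge that merges it into another one (Kruskal / single linkage). The
    single essential bar that never dies is left out.
    """
    parent = list(range(len(points)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    edges = sorted(
        (math.dist(points[i], points[j]), i, j)
        for i, j in combinations(range(len(points)), 2)
    )
    bars = []
    for length, i, j in edges:
        ri, rj = find(i), find(j)
        if ri != rj:
            parent[ri] = rj
            bars.append((0.0, length))
    return bars


def silhouette(bars, grid, p=1.0):
    """Power-weighted silhouette: weighted mean of tent functions, weight (d - b)**p."""
    weights = [(d - b) ** p for b, d in bars]
    total = sum(weights)
    if total == 0:
        return [0.0] * len(grid)
    return [
        sum(w * max(0.0, min(t - b, d - t)) for w, (b, d) in zip(weights, bars)) / total
        for t in grid
    ]


def classify(sample, references, grid):
    """Return the label whose reference silhouette moves least (L2 norm on the grid)
    when `sample` is appended to that label's point cloud."""
    dt = grid[1] - grid[0]
    scores = {}
    for label, cloud in references.items():
        before = silhouette(h0_persistence(cloud), grid)
        after = silhouette(h0_persistence(cloud + [sample]), grid)
        scores[label] = math.sqrt(dt * sum((a - b) ** 2 for a, b in zip(before, after)))
    return min(scores, key=scores.get)


# Hypothetical toy data: two well-separated 2-D clouds standing in for two states.
grid = [0.1 * k for k in range(200)]
references = {
    "state_a": [(0, 0), (1, 0), (0, 1), (1, 1)],
    "state_b": [(10, 10), (11, 10), (10, 11), (11, 11)],
}
print(classify((0.5, 0.5), references, grid))  # state_a
```

A point lying far from a class creates a long-lived H0 bar when added, which drags that class's silhouette much further than a point lying inside the cloud; this is the intuition the decision rule relies on. Loops and higher-dimensional features would require a dedicated TDA library rather than the union-find shortcut used here.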

Importance attribution in neural networks by means of persistence landscapes of time series

Feb 06, 2023

Abstract: We propose and implement a method to analyze time series with a neural network using a matrix of area-normalized persistence landscapes obtained through topological data analysis. We include a gating layer in the network's architecture that is able to identify the most relevant landscape levels for the classification task, thus working as an importance attribution system. Next, we perform a matching between the selected landscape functions and the corresponding critical points of the original time series. From this matching we are able to reconstruct an approximate shape of the time series that gives insight into the classification decision. We test this technique with input data from a dataset of electrocardiographic signals.
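The abstract does not spell out how the landscape matrix is built or normalized, so the sketch below fills those steps with assumptions: sublevel-set H0 persistence of the raw series (a union-find on the path graph with the elder rule), landscape levels evaluated on a uniform grid, normalization of the whole matrix to unit total area, and a gating layer modeled as one sigmoid weight per landscape level. All function names, the toy series, and the gate logits are hypothetical; the rest of the network is omitted.

```python
import math


def sublevel_persistence(series):
    """Finite H0 pairs (birth, death) of the sublevel-set filtration of a time
    series viewed as a function on a path graph. The essential class born at
    the global minimum is omitted."""
    n = len(series)
    parent = [None] * n  # None = vertex not yet in the filtration
    birth = [0.0] * n    # birth value, meaningful for component roots

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    pairs = []
    for i in sorted(range(n), key=lambda k: series[k]):
        parent[i] = i
        birth[i] = series[i]
        for j in (i - 1, i + 1):
            if 0 <= j < n and parent[j] is not None:
                young, old = find(i), find(j)
                if young == old:
                    continue
                if birth[young] < birth[old]:
                    young, old = old, young  # elder rule: younger component dies
                if birth[young] < series[i]:  # skip zero-length pairs
                    pairs.append((birth[young], series[i]))
                parent[young] = old
    return pairs


def landscape(pairs, grid, levels):
    """Persistence landscape: row k holds the (k+1)-th largest tent value at each t."""
    rows = [[0.0] * len(grid) for _ in range(levels)]
    for col, t in enumerate(grid):
        tents = sorted((max(0.0, min(t - b, d - t)) for b, d in pairs), reverse=True)
        for k in range(min(levels, len(tents))):
            rows[k][col] = tents[k]
    return rows


def area_normalize(rows, dt):
    """Scale the whole landscape matrix so its total area is 1 (assumed scheme)."""
    total = dt * sum(sum(row) for row in rows)
    return rows if total == 0 else [[v / total for v in row] for row in rows]


def _sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def gate(rows, logits):
    """Hypothetical gating layer: multiply landscape level k by sigmoid(logits[k]).
    After training, levels with gate values near 1 would be the ones deemed important."""
    gates = [_sigmoid(z) for z in logits]
    return [[g * v for v in row] for g, row in zip(gates, rows)], gates


series = [0, 3, 1, 4, 2, 5]
pairs = sublevel_persistence(series)  # [(1, 3), (2, 4)]
grid = [0.5 * k for k in range(11)]
matrix = area_normalize(landscape(pairs, grid, levels=2), dt=0.5)
gated, importance = gate(matrix, logits=[2.0, -2.0])
```

Each finite pair records a local minimum (birth) and the local maximum at which its basin merges into an older one (death). That pairing is what makes it possible, in principle, to map selected landscape levels back to critical points of the original series.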

Reconstruction of univariate functions from directional persistence diagrams

Mar 03, 2022

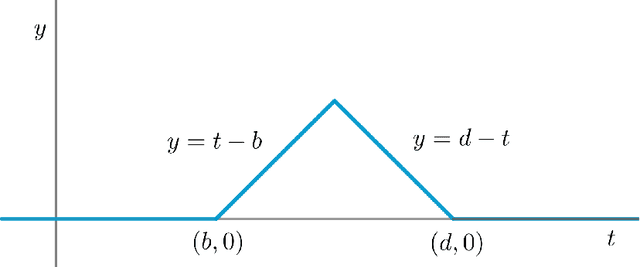

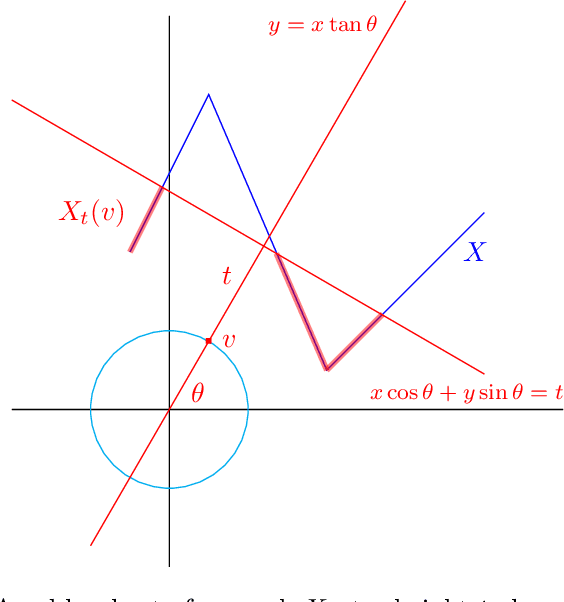

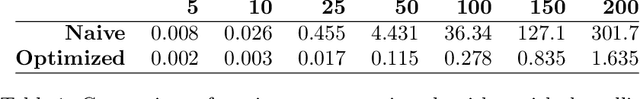

Abstract: We describe a method for approximating a single-variable function $f$ using persistence diagrams of sublevel sets of $f$ from height functions in different directions. We provide algorithms for the piecewise linear case and for the smooth case. Three directions suffice to locate all local maxima and minima of a continuous piecewise linear function from its collection of directional persistence diagrams, while five directions are needed in the case of smooth functions with non-degenerate critical points. Our approximation of functions by means of persistence diagrams is motivated by a study of importance attribution in machine learning, where one seeks to reduce the number of critical points of signal functions without a significant loss of information for a neural network classifier.
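The abstract does not give the reconstruction algorithms themselves, so the sketch below only shows the input they work from: the persistence diagram of the sublevel sets of the height function in direction $(\cos\theta, \sin\theta)$ restricted to the graph of a piecewise linear $f$. It assumes the graph is given by its vertices $(x_i, f(x_i))$ and computes 0-dimensional persistence with a union-find on the path graph. The function names are hypothetical, and the angles in the usage loop are arbitrary illustrations, not the directions used in the paper.

```python
import math


def sublevel_diagram(values):
    """H0 persistence diagram of the sublevel-set filtration of `values` on a
    path graph, including the essential class (min(values), inf)."""
    n = len(values)
    parent = [None] * n  # None = vertex not yet in the filtration
    birth = [0.0] * n    # birth value, meaningful for component roots

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    diagram = []
    for i in sorted(range(n), key=lambda k: values[k]):
        parent[i], birth[i] = i, values[i]
        for j in (i - 1, i + 1):
            if 0 <= j < n and parent[j] is not None:
                young, old = find(i), find(j)
                if young == old:
                    continue
                if birth[young] < birth[old]:
                    young, old = old, young  # elder rule: younger component dies
                if birth[young] < values[i]:  # skip zero-length pairs
                    diagram.append((birth[young], values[i]))
                parent[young] = old
    diagram.append((min(values), math.inf))
    return diagram


def directional_diagram(xs, ys, theta):
    """Diagram of the height function in direction (cos theta, sin theta) on the
    polyline graph of a piecewise linear function. The height is linear on each
    segment, so sublevel topology only changes at vertices and evaluating it at
    the vertices (x_i, y_i) is enough for H0."""
    heights = [x * math.cos(theta) + y * math.sin(theta) for x, y in zip(xs, ys)]
    return sublevel_diagram(heights)


xs = [0, 1, 2, 3, 4]
ys = [0, 2, 1, 3, 0]
for theta in (math.pi / 2, math.pi / 4, 3 * math.pi / 4):
    print(round(theta, 3), directional_diagram(xs, ys, theta))
```

With $\theta = \pi/2$ the height function is $f$ itself (up to rounding), so the diagram reduces to the ordinary sublevel-set diagram of $f$. Tilting the direction shears the graph, which can move, create, or cancel critical points; according to the abstract, combining the diagrams from enough directions is what allows all local extrema of $f$ to be located.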

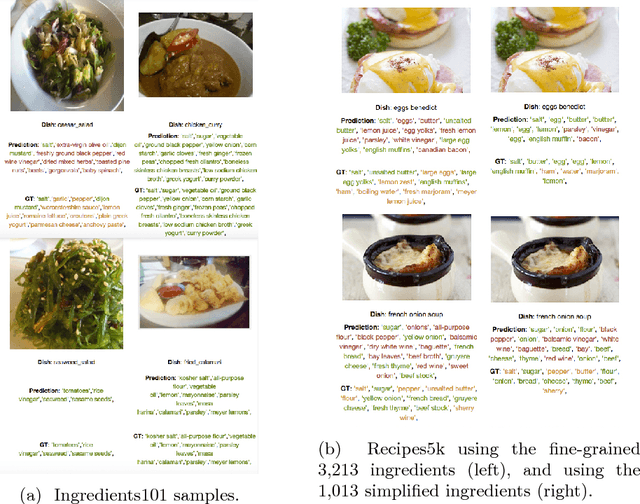

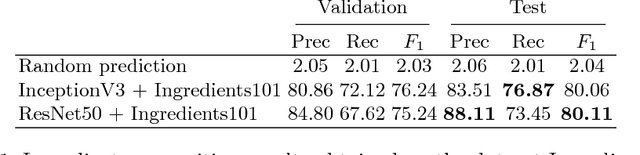

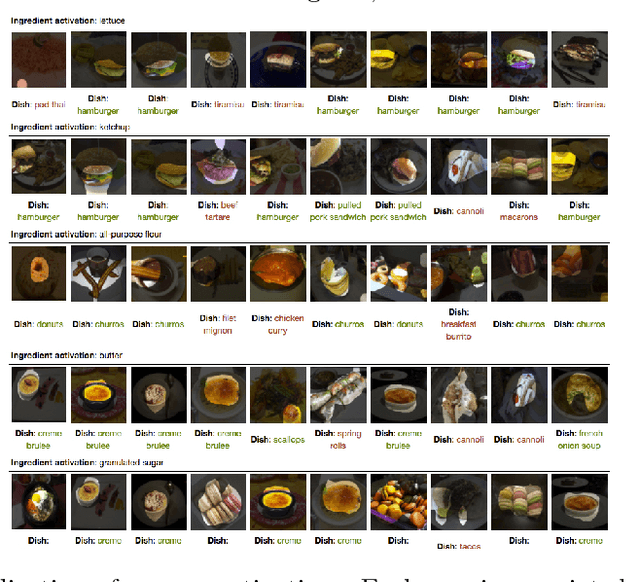

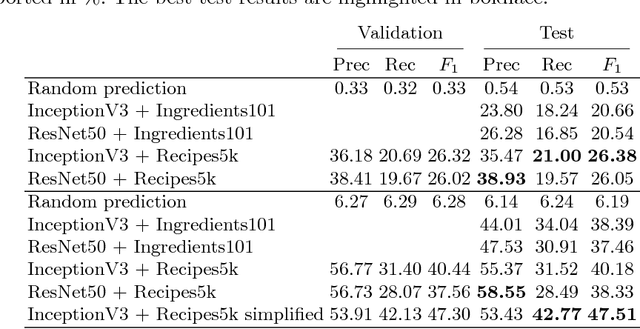

Food Ingredients Recognition through Multi-label Learning

Jul 27, 2017

Abstract: Automatically constructing a food diary that tracks the ingredients consumed can help people follow a healthy diet. We tackle the problem of food ingredients recognition as a multi-label learning problem. We propose a method for adapting a high-performing state-of-the-art CNN to act as a multi-label predictor for learning recipes in terms of their list of ingredients. We show that, given a picture, our model is able to predict its list of ingredients, even if the recipe corresponding to the picture has never been seen by the model. We make two new datasets suitable for this purpose publicly available. Furthermore, we show that a model trained with a high variability of recipes and ingredients generalizes better on new data, and we visualize how it specializes each of its neurons to different ingredients.
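The abstract does not specify the output layer or loss used to turn the CNN into a multi-label predictor. A common way to do this, assumed here rather than taken from the paper, is to replace the softmax with one independent sigmoid per ingredient and train with binary cross-entropy, so that a single image can activate any number of ingredients at once. The sketch below shows only that output head in plain Python; the ingredient vocabulary and logits are made up, and the CNN backbone is left out.

```python
import math

# Hypothetical ingredient vocabulary; a real model would use the dataset's list.
INGREDIENTS = ["tomato", "cheese", "basil", "egg"]


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_ingredients(logits, vocabulary=INGREDIENTS, threshold=0.5):
    """Independent sigmoid per ingredient: keep every label whose probability
    reaches the threshold (a multi-label decision, not a softmax argmax)."""
    return [name for name, z in zip(vocabulary, logits) if sigmoid(z) >= threshold]


def multilabel_bce(logits, targets, eps=1e-12):
    """Mean binary cross-entropy over ingredients; targets are 0/1 per ingredient."""
    loss = 0.0
    for z, y in zip(logits, targets):
        p = sigmoid(z)
        loss -= y * math.log(p + eps) + (1 - y) * math.log(1.0 - p + eps)
    return loss / len(logits)


# Stand-in for the CNN's final-layer outputs for one image.
logits = [2.0, -1.0, 0.0, -3.0]
print(predict_ingredients(logits))  # ['tomato', 'basil']
```

The 0.5 threshold is an illustrative default; in practice it could be tuned per ingredient on validation data.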
