Zero-shot object goal visual navigation

Paper and Code

Jun 15, 2022

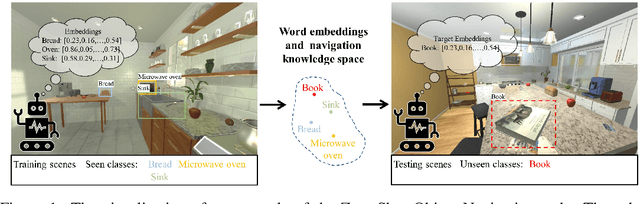

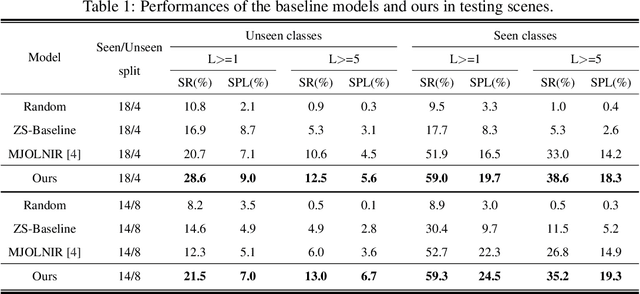

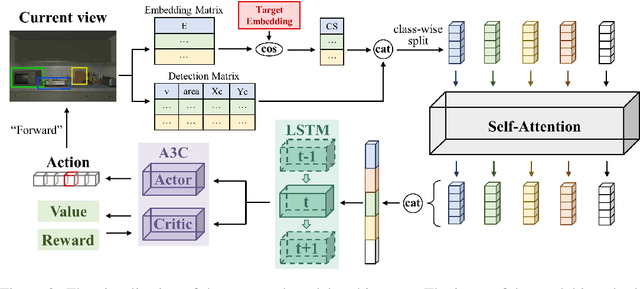

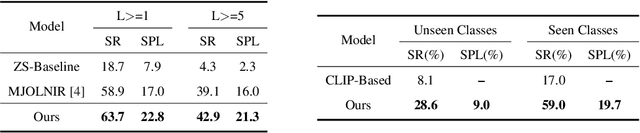

Object goal visual navigation is a challenging task that aims to guide a robot to a target object based only on its visual observations, where the target is limited to the classes specified in the training stage. In real households, however, a robot may need to deal with numerous object classes, and it is hard to cover all of them during training. To address this challenge, we propose a zero-shot object navigation task that combines zero-shot learning with object goal visual navigation and aims to guide robots to objects belonging to novel classes without any training samples. This task requires generalizing the learned policy to novel classes, an issue that has received little attention in object navigation with deep reinforcement learning. To address it, we use "class-unrelated" data as input to alleviate overfitting to the classes specified in the training stage. The class-unrelated input consists of detection results and the cosine similarity of word embeddings, and does not contain any class-related visual features or knowledge graphs. Extensive experiments on the AI2-THOR platform show that our model outperforms the baseline models on both seen and unseen classes, which indicates that our model is less class-sensitive and generalizes better. Our code is available at https://github.com/pioneer-innovation/Zero-Shot-Object-Navigation
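As a rough illustration of what a class-unrelated input could look like, the sketch below pairs each detection (bounding box and confidence) with the cosine similarity between the word embedding of the detected class and that of the target class. It is not taken from the linked repository: the function names, the six-value row layout, and the toy three-dimensional embeddings are all assumptions made for this example.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine similarity of two vectors; returns 0.0 if either has zero norm."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom > 0 else 0.0


def class_unrelated_input(detections, embeddings, target):
    """Build one row per detection: [x1, y1, x2, y2, confidence, similarity].

    The row layout is hypothetical. No class identity or class-specific
    visual feature is included, only geometry, confidence, and how close
    the detected class is to the target in word-embedding space.
    """
    rows = []
    for label, box, score in detections:
        sim = cosine_similarity(embeddings[label], embeddings[target])
        rows.append([*box, score, sim])
    # reshape keeps a (0, 6) array when nothing was detected
    return np.array(rows, dtype=float).reshape(-1, 6)


# Toy embeddings (assumed values, standing in for real word vectors).
embeddings = {
    "cup": [1.0, 0.0, 0.0],
    "mug": [0.6, 0.8, 0.0],   # target class, treated as unseen in training
    "sofa": [0.0, 0.0, 1.0],
}

# Each detection: (class label, normalized box, detector confidence).
detections = [
    ("cup", (0.1, 0.2, 0.3, 0.4), 0.9),
    ("sofa", (0.5, 0.5, 0.9, 0.9), 0.8),
]

features = class_unrelated_input(detections, embeddings, target="mug")
```

Because the policy would see only these rows, a detected "cup" can still signal proximity to an unseen "mug" through its high similarity score, which is the intuition behind using such input for novel classes.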
