What should I Ask: A Knowledge-driven Approach for Follow-up Questions Generation in Conversational Surveys

May 23, 2022

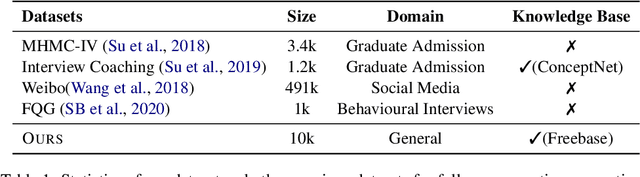

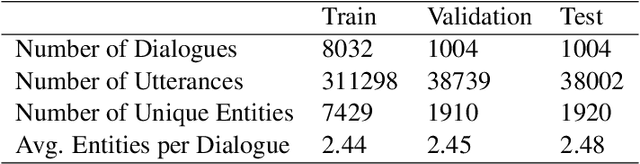

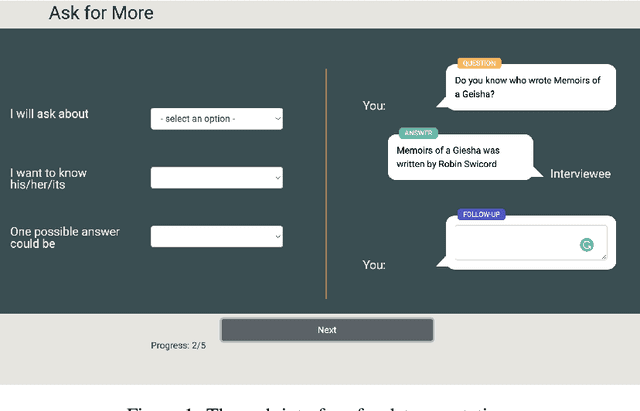

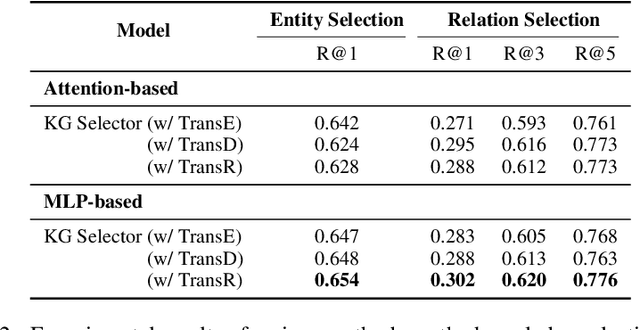

Conversational surveys, in which an agent asks open-ended questions through a natural language interface, offer a new way to collect information from people. A good follow-up question in a conversational survey elicits high-quality information and delivers an engaging experience. However, generating high-quality follow-up questions on the fly is a non-trivial task: the agent needs to understand diverse and complex participant responses, adhere to the survey goal, and generate clear and coherent questions. In this study, we propose a knowledge-driven follow-up question generation framework. The framework combines a knowledge selection module, which identifies salient topics in participants' responses, with a generative model guided by the selected knowledge entity-relation pairs. To investigate the effectiveness of the proposed framework, we build a new dataset for open-domain follow-up question generation and present a new set of reference-free evaluation metrics based on the Gricean Maxims. Our experiments demonstrate that our framework outperforms a GPT-based baseline in both objective and human-expert evaluation.
