What Do We Really Need? Degenerating U-Net on Retinal Vessel Segmentation

Paper and Code

Nov 06, 2019

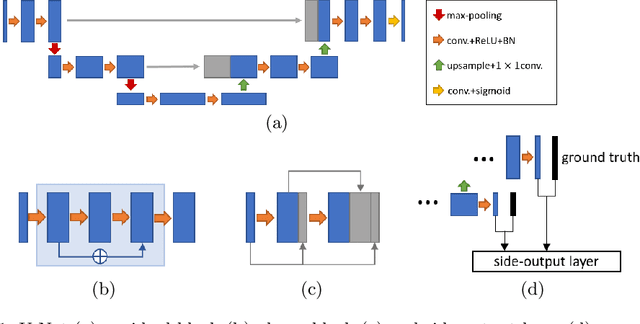

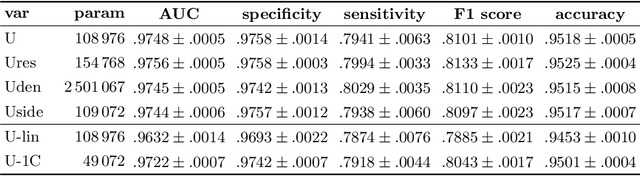

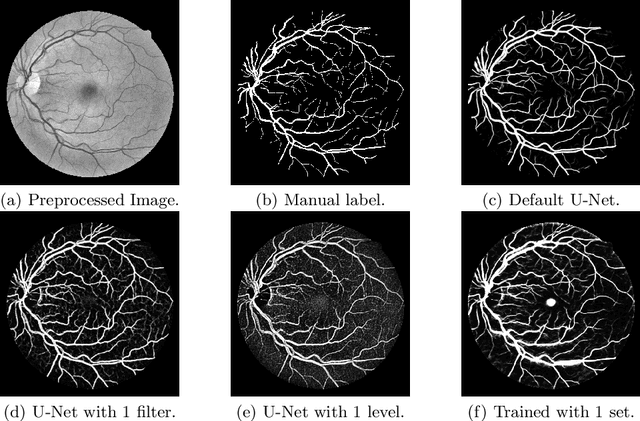

Retinal vessel segmentation is an essential step for fundus image analysis. With the recent advances of deep learning technologies, many convolutional neural networks have been applied in this field, including the successful U-Net. In this work, we firstly modify the U-Net with functional blocks aiming to pursue higher performance. The absence of the expected performance boost then lead us to dig into the opposite direction of shrinking the U-Net and exploring the extreme conditions such that its segmentation performance is maintained. Experiment series to simplify the network structure, reduce the network size and restrict the training conditions are designed. Results show that for retinal vessel segmentation on DRIVE database, U-Net does not degenerate until surprisingly acute conditions: one level, one filter in convolutional layers, and one training sample. This experimental discovery is both counter-intuitive and worthwhile. Not only are the extremes of the U-Net explored on a well-studied application, but also one intriguing warning is raised for the research methodology which seeks for marginal performance enhancement regardless of the resource cost.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge