VIPriors 1: Visual Inductive Priors for Data-Efficient Deep Learning Challenges

Paper and Code

Mar 05, 2021

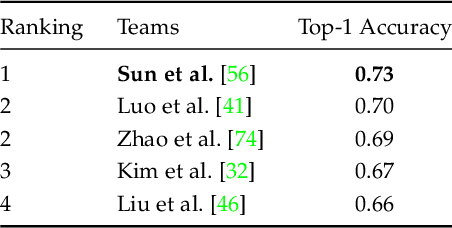

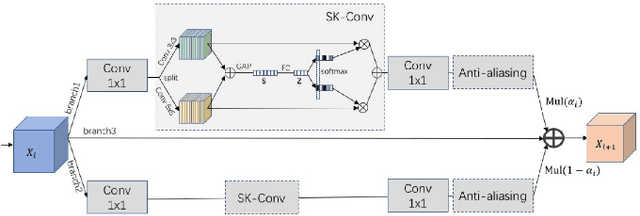

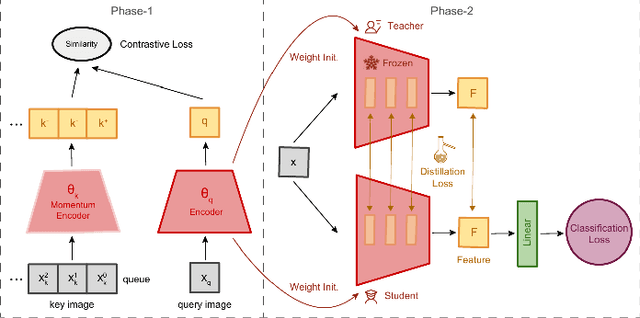

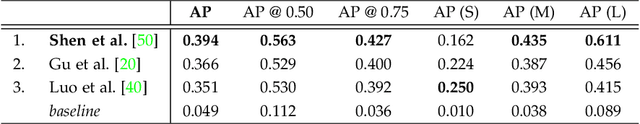

We present the first edition of "VIPriors: Visual Inductive Priors for Data-Efficient Deep Learning" challenges. We offer four data-impaired challenges, where models are trained from scratch, and we reduce the number of training samples to a fraction of the full set. Furthermore, to encourage data efficient solutions, we prohibited the use of pre-trained models and other transfer learning techniques. The majority of top ranking solutions make heavy use of data augmentation, model ensembling, and novel and efficient network architectures to achieve significant performance increases compared to the provided baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge