Video-Based Action Recognition Using Rate-Invariant Analysis of Covariance Trajectories

Paper and Code

Apr 09, 2015

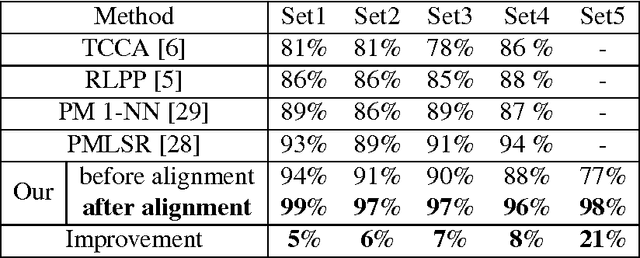

Statistical classification of actions in videos is mostly performed by extracting relevant features, particularly covariance features, from image frames and studying the time series associated with the temporal evolution of these features. A natural mathematical representation of activity videos is in the form of parameterized trajectories on the covariance manifold, i.e., the set of symmetric, positive-definite matrices (SPDMs). The variable execution rates of actions imply variable parameterizations of the resulting trajectories and complicate their classification. Since action classes are invariant to execution rates, one requires rate-invariant metrics for comparing trajectories. A recent paper represented trajectories using their transported square-root vector fields (TSRVFs), defined by parallel translating scaled-velocity vectors of trajectories to a reference tangent space on the manifold. To avoid the arbitrariness of selecting the reference and to reduce the distortion introduced during this mapping, we develop a purely intrinsic approach where SPDM trajectories are represented by redefining their TSRVFs at the starting points of the trajectories, and analyzed as elements of a vector bundle on the manifold. Using a natural Riemannian metric on vector bundles of SPDMs, we compute geodesic paths and geodesic distances between trajectories in the quotient space of this vector bundle, with respect to the re-parameterization group. This makes the resulting comparison of trajectories invariant to their re-parameterization. We demonstrate this framework on two applications involving video classification: visual speech recognition (lip-reading) and hand-gesture recognition. In both cases we achieve results either comparable to or better than the current literature.
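To make the notion of covariance features on the SPDM manifold concrete, the following minimal Python sketch builds a covariance descriptor from per-frame feature vectors and compares two such descriptors with the affine-invariant Riemannian distance. This is an illustration under stated assumptions, not the method of the paper: the abstract does not name the specific metric or feature set, so the choice of the affine-invariant distance, the regularization constant `eps`, the synthetic features, and the helper names `covariance_descriptor`, `spd_inv_sqrt`, and `affine_invariant_distance` are all hypothetical. It covers only the pointwise comparison of two SPDMs, not the TSRVF representation or the rate-invariant comparison of whole trajectories.

```python
import numpy as np


def covariance_descriptor(features, eps=1e-6):
    """Covariance matrix of per-pixel feature vectors from one frame.

    features: array of shape (n_samples, d).
    eps: small ridge term (an assumption) that keeps the result strictly
         positive-definite.
    """
    centered = features - features.mean(axis=0, keepdims=True)
    cov = centered.T @ centered / (features.shape[0] - 1)
    return cov + eps * np.eye(features.shape[1])


def spd_inv_sqrt(P):
    """Inverse matrix square root of an SPD matrix via eigendecomposition."""
    w, V = np.linalg.eigh(P)
    return (V / np.sqrt(w)) @ V.T


def affine_invariant_distance(P, Q):
    """Affine-invariant Riemannian distance between SPD matrices P and Q.

    d(P, Q) = || log(P^{-1/2} Q P^{-1/2}) ||_F
    """
    S = spd_inv_sqrt(P)
    M = S @ Q @ S
    M = (M + M.T) / 2.0  # symmetrize against round-off
    w = np.linalg.eigvalsh(M)
    return float(np.sqrt(np.sum(np.log(w) ** 2)))


# Synthetic stand-ins for per-frame features (hypothetical data).
rng = np.random.default_rng(0)
frame_a = rng.normal(size=(500, 4))
frame_b = rng.normal(size=(500, 4)) * np.array([1.0, 2.0, 0.5, 1.5])

P = covariance_descriptor(frame_a)
Q = covariance_descriptor(frame_b)
d_pq = affine_invariant_distance(P, Q)
```

A video would then correspond to a sequence of such matrices, one per frame or window, which is the kind of trajectory the abstract refers to.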
