Variational Inference of overparameterized Bayesian Neural Networks: a theoretical and empirical study

Paper and Code

Jul 08, 2022

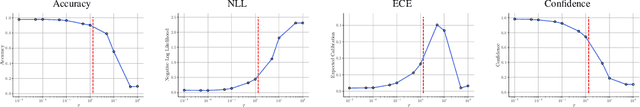

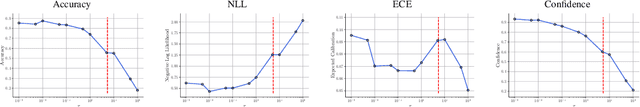

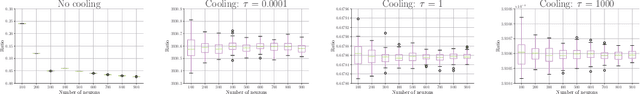

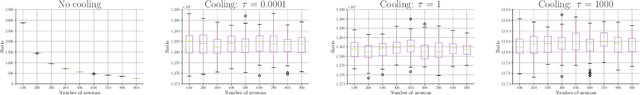

This paper studies the Variational Inference (VI) used for training Bayesian Neural Networks (BNN) in the overparameterized regime, i.e., when the number of neurons tends to infinity. More specifically, we consider overparameterized two-layer BNN and point out a critical issue in the mean-field VI training. This problem arises from the decomposition of the lower bound on the evidence (ELBO) into two terms: one corresponding to the likelihood function of the model and the second to the Kullback-Leibler (KL) divergence between the prior distribution and the variational posterior. In particular, we show both theoretically and empirically that there is a trade-off between these two terms in the overparameterized regime only when the KL is appropriately re-scaled with respect to the ratio between the the number of observations and neurons. We also illustrate our theoretical results with numerical experiments that highlight the critical choice of this ratio.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge