Value Activation for Bias Alleviation: Generalized-activated Deep Double Deterministic Policy Gradients

Paper and Code

Dec 21, 2021

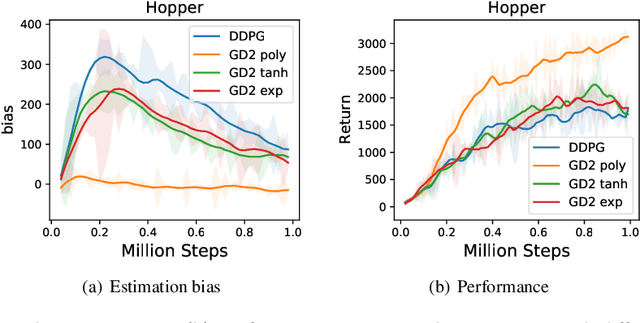

It is vital to accurately estimate the value function in Deep Reinforcement Learning (DRL) such that the agent could execute proper actions instead of suboptimal ones. However, existing actor-critic methods suffer more or less from underestimation bias or overestimation bias, which negatively affect their performance. In this paper, we reveal a simple but effective principle: proper value correction benefits bias alleviation, where we propose the generalized-activated weighting operator that uses any non-decreasing function, namely activation function, as weights for better value estimation. Particularly, we integrate the generalized-activated weighting operator into value estimation and introduce a novel algorithm, Generalized-activated Deep Double Deterministic Policy Gradients (GD3). We theoretically show that GD3 is capable of alleviating the potential estimation bias. We interestingly find that simple activation functions lead to satisfying performance with no additional tricks, and could contribute to faster convergence. Experimental results on numerous challenging continuous control tasks show that GD3 with task-specific activation outperforms the common baseline methods. We also uncover a fact that fine-tuning the polynomial activation function achieves superior results on most of the tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge