VACL: Variance-Aware Cross-Layer Regularization for Pruning Deep Residual Networks


Sep 10, 2019

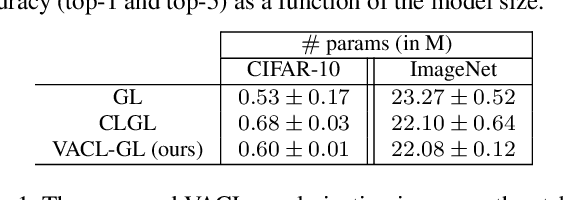

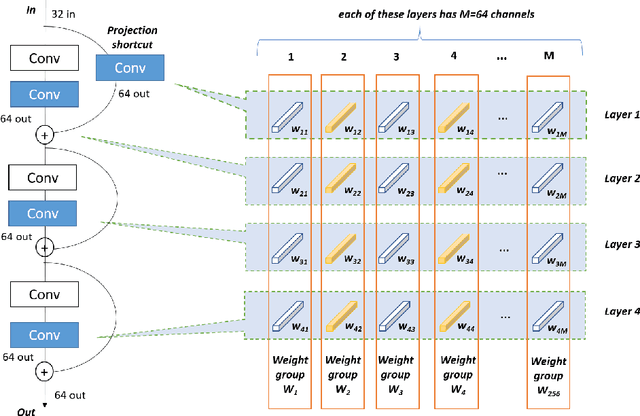

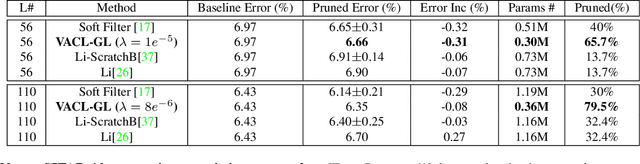

Improving weight sparsity is a common strategy for producing lightweight deep neural networks. However, pruning models that use residual learning is more challenging. In this paper, we introduce Variance-Aware Cross-Layer (VACL) regularization, a novel approach to this problem. VACL consists of two parts: a Cross-Layer grouping and a Variance-Aware regularization. In Cross-Layer grouping, the $i$-th filters of the layers connected by skip connections are placed in a single regularization group. The Variance-Aware regularization term then takes into account both the first- and second-order statistics of the connected layers to constrain the variance within a group. Our approach effectively improves the structural sparsity of residual models. On CIFAR10, the proposed method reduces a ResNet model by up to 79.5% with no accuracy drop and reduces a ResNeXt model by up to 82% with less than 1% accuracy drop. On ImageNet, it yields a pruning ratio of up to 63.3% with less than 1% top-5 accuracy drop. Our experimental results show that the proposed approach significantly outperforms other state-of-the-art methods in terms of overall model size and accuracy.
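The two parts described in the abstract can be illustrated with a small sketch. This is not the paper's formulation: the abstract does not give the regularization term, so the penalty below (mean of the filter norms in a group plus `gamma` times their standard deviation) is an assumed stand-in, and the names `filter_norm`, `cross_layer_groups`, `vacl_penalty`, and `gamma` are hypothetical. Each layer is represented as a list of flattened filters, and all layers are assumed to be joined by skip connections and to have the same number of filters.

```python
import math


def filter_norm(filt):
    """L2 norm of one flattened filter (a list of floats)."""
    return math.sqrt(sum(w * w for w in filt))


def cross_layer_groups(layers):
    """Group the i-th filter of every skip-connected layer together.

    `layers` is a list of layers; each layer is a list of flattened filters.
    Returns one group per filter index.
    """
    num_filters = len(layers[0])
    if any(len(layer) != num_filters for layer in layers):
        raise ValueError("skip-connected layers must have equal filter counts")
    return [[layer[i] for layer in layers] for i in range(num_filters)]


def vacl_penalty(layers, gamma=1.0):
    """Assumed penalty: per-group mean of filter norms (first-order term)
    plus gamma times their standard deviation (second-order term).
    This form is an illustration only, not taken from the paper.
    """
    total = 0.0
    for group in cross_layer_groups(layers):
        norms = [filter_norm(f) for f in group]
        mean = sum(norms) / len(norms)
        var = sum((n - mean) ** 2 for n in norms) / len(norms)
        total += mean + gamma * math.sqrt(var)
    return total


# Two skip-connected layers with one filter each.
balanced = [[[2.5, 0.0]], [[2.5, 0.0]]]    # equal norms within the group
unbalanced = [[[3.0, 4.0]], [[0.0, 0.0]]]  # same mean norm, high variance
print(vacl_penalty(balanced), vacl_penalty(unbalanced))
```

Under this assumed form, a group whose filters have very different norms across layers is penalized more than a group with the same mean norm and no spread. This pushes all filters in a group toward zero together, which is what allows the whole index $i$ to be removed across the skip-connected layers.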
