V2W-BERT: A Framework for Effective Hierarchical Multiclass Classification of Software Vulnerabilities

Paper and Code

Feb 23, 2021

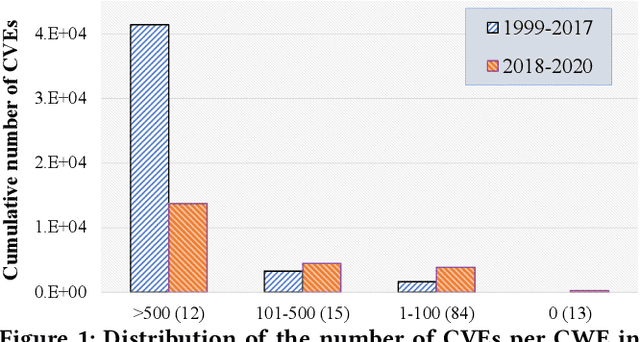

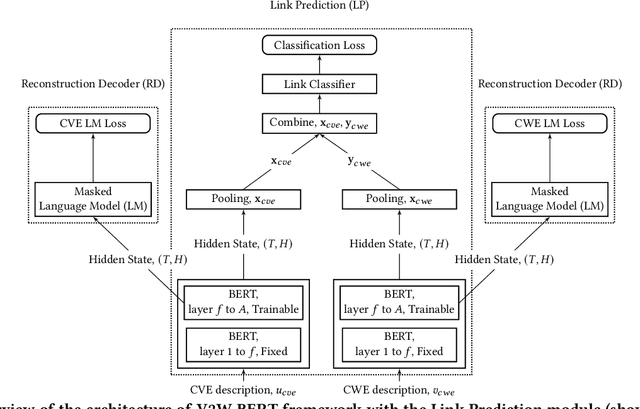

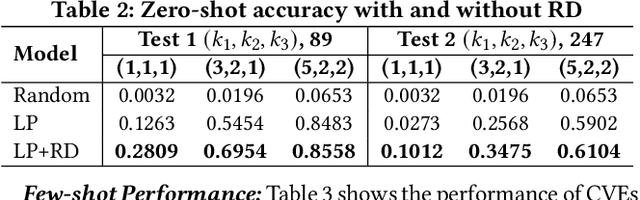

Weaknesses in computer systems, such as faults, bugs, and errors in the architecture, design, or implementation of software, create vulnerabilities that attackers can exploit to compromise the security of a system. The Common Weakness Enumeration (CWE) is a hierarchically organized dictionary of software weaknesses that provides a means to understand software flaws, the potential impact of their exploitation, and ways to mitigate them. Common Vulnerabilities and Exposures (CVE) entries are brief, low-level descriptions that uniquely identify vulnerabilities in a specific product or protocol. Mapping CVEs to CWEs provides a means to understand the impact of vulnerabilities and to mitigate them. Since manual mapping of CVEs is not a viable option, automated approaches are desirable but challenging. In this paper, we present V2W-BERT, a novel Transformer-based learning framework. By using ideas from natural language processing, link prediction, and transfer learning, our method outperforms previous approaches not only for CWE classes with abundant training data, but also for rare CWE classes with little or no training data. Our approach also shows significant improvements in using historical data to predict links for future CVE instances, and therefore provides a viable approach for practical applications. Using data from MITRE and the National Vulnerability Database, we achieve up to 97% prediction accuracy on randomly partitioned data and up to 94% prediction accuracy on temporally partitioned data. We believe that our work will influence the design of better methods and training models, as well as applications that solve increasingly hard problems in cybersecurity.
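The difference between the two evaluation settings named in the abstract, randomly partitioned and temporally partitioned data, can be illustrated with a minimal sketch. This is not the authors' code: the record layout, the placeholder CVE identifiers, the publication dates, the cutoff date, and the helper names `random_split`, `temporal_split`, and `unseen_classes` are all assumptions made for illustration. A temporal split trains only on entries published before a cutoff and tests on later ones, which is where CWE classes with no training data can appear.

```python
import random
from datetime import date

# Hypothetical toy records: (placeholder CVE id, publication date, CWE label).
# The ids and dates are invented for illustration, not taken from NVD.
records = [
    ("CVE-EXAMPLE-1", date(2017, 3, 1), "CWE-79"),
    ("CVE-EXAMPLE-2", date(2018, 6, 15), "CWE-89"),
    ("CVE-EXAMPLE-3", date(2018, 11, 2), "CWE-79"),
    ("CVE-EXAMPLE-4", date(2019, 4, 20), "CWE-119"),
    ("CVE-EXAMPLE-5", date(2020, 1, 9), "CWE-89"),
]


def random_split(records, test_fraction, seed=0):
    """Shuffle the records and hold out a fraction, ignoring publication dates."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def temporal_split(records, cutoff):
    """Train on records published before the cutoff, test on the rest."""
    train = [r for r in records if r[1] < cutoff]
    test = [r for r in records if r[1] >= cutoff]
    return train, test


def unseen_classes(train, test):
    """CWE labels that occur in the test set but never in the training set."""
    return {r[2] for r in test} - {r[2] for r in train}


train, test = temporal_split(records, date(2019, 1, 1))
print("train:", [r[0] for r in train])
print("test:", [r[0] for r in test])
print("CWE classes unseen during training:", unseen_classes(train, test))
```

In this toy data the temporal split leaves one CWE label in the test set that never occurs in training, which mirrors the "little or no training data" case the abstract describes. A random split can hide that case, because later entries may land in the training set.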
