Utterance Rewriting with Contrastive Learning in Multi-turn Dialogue


Mar 22, 2022

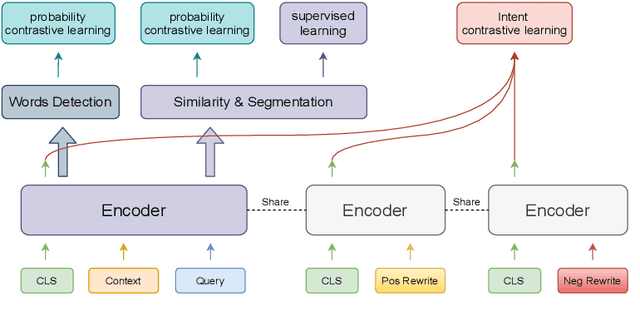

Context modeling plays a significant role in building multi-turn dialogue systems. To make full use of context information, systems can use Incomplete Utterance Rewriting (IUR) methods, which reduce a multi-turn dialogue to a single turn by merging the current utterance and its context into one self-contained utterance. However, previous approaches ignore the intent consistency between the original query and the rewritten query, and the detection of omitted or coreferred locations in the original query can be further improved. In this paper, we introduce contrastive learning and multi-task learning to jointly model the problem. Our method benefits from carefully designed self-supervised objectives, which act as auxiliary tasks to capture semantics at both the sentence level and the token level. Experiments show that the proposed model achieves state-of-the-art performance on several public datasets.
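To make the idea of a sentence-level contrastive objective used as an auxiliary task more concrete, here is a minimal sketch in plain Python. It is not the paper's implementation: the abstract does not specify the loss, so the InfoNCE form, the cosine similarity, the `temperature` value, the `weight` on the auxiliary term, and all function names are assumptions chosen for illustration. The anchor stands for an embedding of the original query, the positive for the embedding of its rewrite, and the negatives for embeddings of unrelated utterances.

```python
import math


def cosine(u, v):
    """Cosine similarity of two non-zero vectors given as lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss (assumed form): negative log-probability of the positive
    among the positive and all negatives, scored by scaled cosine similarity."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, neg) / temperature for neg in negatives]
    # Log-sum-exp with max subtraction for numerical stability.
    top = max(logits)
    log_denominator = top + math.log(sum(math.exp(x - top) for x in logits))
    return log_denominator - logits[0]


def multitask_loss(rewrite_loss, contrastive_loss, weight=0.5):
    """Hypothetical multi-task combination: main rewriting loss plus a
    weighted auxiliary contrastive term."""
    return rewrite_loss + weight * contrastive_loss


# Toy embeddings: the rewrite points the same way as the original query,
# while the negative is orthogonal to it.
original_query = [1.0, 0.0]
rewritten_query = [2.0, 0.0]
unrelated_utterance = [0.0, 1.0]

aligned = info_nce(original_query, rewritten_query, [unrelated_utterance])
misaligned = info_nce(original_query, unrelated_utterance, [rewritten_query])
total = multitask_loss(rewrite_loss=1.0, contrastive_loss=aligned)
```

In this toy setup the loss is close to zero when the rewrite embedding aligns with the original query and large when it does not, which is the behavior an intent-consistency objective of this kind would be expected to reward.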
