Unsupervised Multimodal 3D Medical Image Registration with Multilevel Correlation Balanced Optimization

Paper and Code

Sep 08, 2024

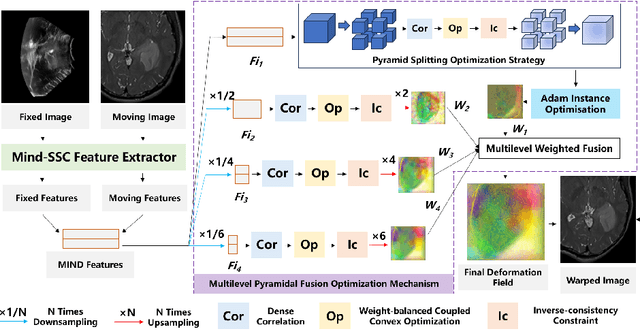

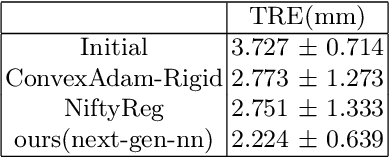

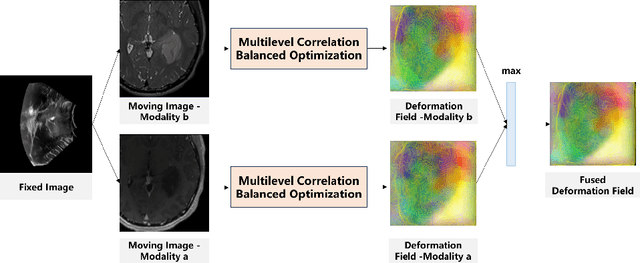

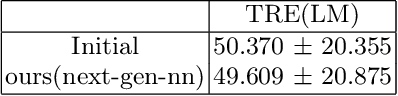

Surgical navigation based on multimodal image registration has played a significant role in providing intraoperative guidance to surgeons by showing the relative position of the target area to critical anatomical structures during surgery. However, due to the differences between multimodal images and intraoperative image deformation caused by tissue displacement and removal during the surgery, effective registration of preoperative and intraoperative multimodal images faces significant challenges. To address the multimodal image registration challenges in Learn2Reg 2024, an unsupervised multimodal medical image registration method based on multilevel correlation balanced optimization (MCBO) is designed to solve these problems. First, the features of each modality are extracted based on the modality independent neighborhood descriptor, and the multimodal images is mapped to the feature space. Second, a multilevel pyramidal fusion optimization mechanism is designed to achieve global optimization and local detail complementation of the deformation field through dense correlation analysis and weight-balanced coupled convex optimization for input features at different scales. For preoperative medical images in different modalities, the alignment and stacking of valid information between different modalities is achieved by the maximum fusion between deformation fields. Our method focuses on the ReMIND2Reg task in Learn2Reg 2024, and to verify the generality of the method, we also tested it on the COMULIS3DCLEM task. Based on the results, our method achieved second place in the validation of both two tasks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge