Unitary Approximate Message Passing for Sparse Bayesian Learning


Jan 25, 2021

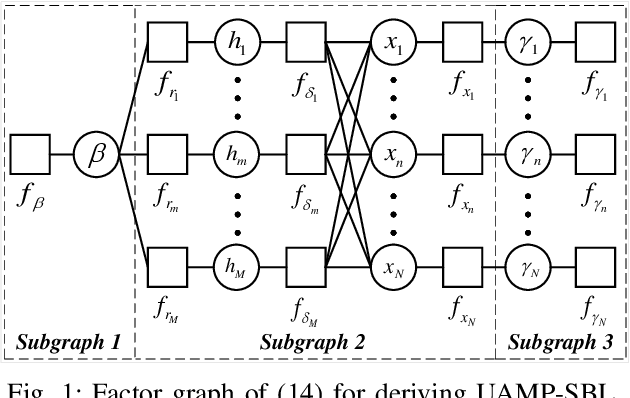

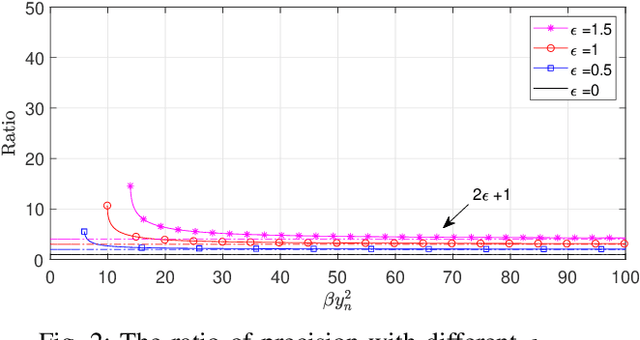

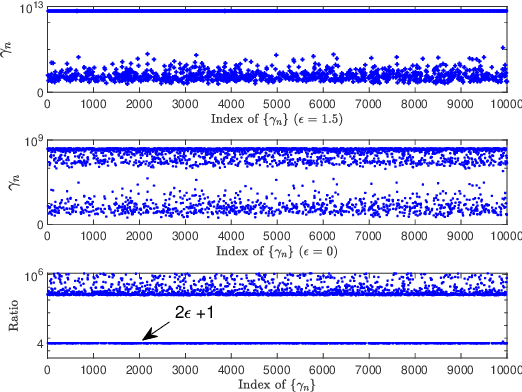

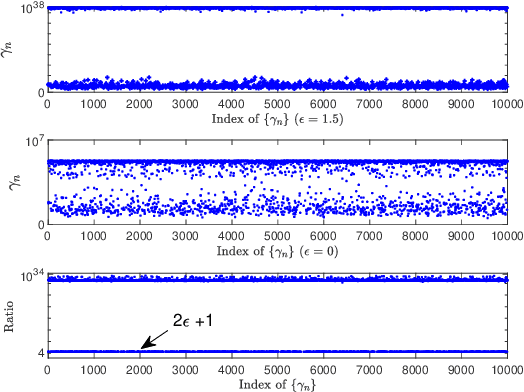

Sparse Bayesian learning (SBL) can be implemented with low complexity based on the approximate message passing (AMP) algorithm. However, AMP-based SBL is vulnerable to 'difficult' measurement matrices, which may cause AMP to diverge. Damped AMP has been used for SBL to alleviate the problem, at the cost of slower convergence. In this work, we propose a new SBL algorithm based on structured variational inference, leveraging AMP with a unitary transformation (UAMP). Both single measurement vector and multiple measurement vector problems are investigated. It is shown that, compared to state-of-the-art AMP-based SBL algorithms, the proposed UAMP-SBL is more robust and efficient, leading to remarkably better performance.
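To make the "unitary transformation" concrete, the following is a minimal sketch of the preprocessing step that UAMP-style methods are commonly described as using: the linear model y = A x + w is left-multiplied by U^H, where A = U S V^H is the singular value decomposition. This is not the paper's UAMP-SBL algorithm (no message passing or hyperparameter learning is shown). The function name `unitary_transform`, the problem sizes, and the noise level are assumptions chosen for illustration.

```python
import numpy as np

def unitary_transform(A, y):
    """Hypothetical helper: return (Phi, r) with Phi = U^H A and r = U^H y,
    where A = U S V^H is the SVD of A. U is a square unitary matrix."""
    U, _, _ = np.linalg.svd(A, full_matrices=True)
    UH = U.conj().T
    return UH @ A, UH @ y

# Illustrative sparse-recovery setup (all values are assumptions).
rng = np.random.default_rng(0)
M, N, K = 20, 50, 5                      # measurements, unknowns, nonzeros
A = rng.standard_normal((M, N))
x = np.zeros(N)
x[rng.choice(N, K, replace=False)] = rng.standard_normal(K)
y = A @ x + 0.01 * rng.standard_normal(M)

Phi, r = unitary_transform(A, y)

# The transformed model is r = Phi x + U^H w. Because U is unitary,
# the norm of the observation is preserved.
norm_preserved = np.allclose(np.linalg.norm(r), np.linalg.norm(y))

# For M <= N the rows of Phi are mutually orthogonal, so Phi Phi^H is diagonal.
G = Phi @ Phi.conj().T
rows_orthogonal = np.allclose(G, np.diag(np.diag(G)))
```

Because U is unitary, white Gaussian noise keeps the same statistics after the transform, so an AMP-type algorithm can be run on (Phi, r) in place of (A, y).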
