UniGAP: A Universal and Adaptive Graph Upsampling Approach to Mitigate Over-Smoothing in Node Classification Tasks


Jul 28, 2024

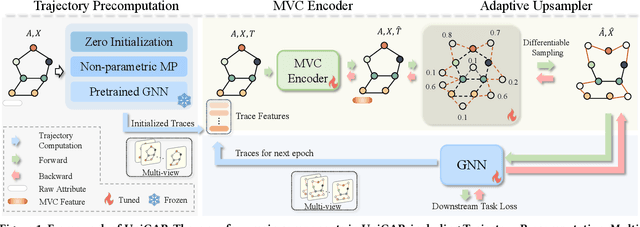

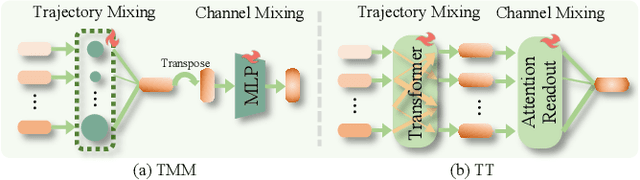

In the graph domain, deep graph networks based on Message Passing Neural Networks (MPNNs) or Graph Transformers often over-smooth node features, which limits their expressive capacity. Many upsampling techniques that manipulate nodes and edges have been proposed to mitigate this issue. However, these methods often require extensive manual effort, yield suboptimal performance, and lack a universal integration strategy. In this study, we introduce UniGAP, a universal and adaptive upsampling technique for graph data. It provides a universal framework for graph upsampling that encompasses most current methods as variants. Moreover, UniGAP serves as a plug-in component that can be integrated seamlessly and adaptively with existing GNNs to enhance performance and mitigate over-smoothing. In extensive experiments, UniGAP shows significant improvements over heuristic data augmentation methods across various datasets and metrics. We analyze how graph structure evolves with UniGAP, identify key bottlenecks where over-smoothing occurs, and provide insights into how UniGAP addresses this issue. Lastly, we show the potential of combining UniGAP with large language models (LLMs) to further improve downstream performance. Our code is available at: https://github.com/wangxiaotang0906/UniGAP
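To make the over-smoothing problem concrete, the following toy sketch repeatedly applies mean aggregation on a four-node path graph and then repeats the experiment after inserting one intermediate node on every edge. It is not UniGAP's adaptive method: the midpoint-insertion rule is a fixed heuristic chosen purely for illustration, and the names `mean_aggregate`, `upsample_edges`, and `spread` are hypothetical helpers, not part of the released code.

```python
def mean_aggregate(features, edges, layers):
    """Apply `layers` rounds of mean aggregation over each node and its neighbors."""
    n = len(features)
    neighbors = [[i] for i in range(n)]  # each node includes itself (self-loop)
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    x = list(features)
    for _ in range(layers):
        x = [sum(x[j] for j in neighbors[i]) / len(neighbors[i]) for i in range(n)]
    return x


def upsample_edges(features, edges):
    """Assumed heuristic: insert one node on every edge, initialized to the
    mean of the two endpoint features. Original nodes keep their indices."""
    new_features = list(features)
    new_edges = []
    for u, v in edges:
        mid = len(new_features)  # index of the newly inserted node
        new_features.append((features[u] + features[v]) / 2)
        new_edges += [(u, mid), (mid, v)]
    return new_features, new_edges


def spread(values):
    """Gap between the largest and smallest feature value (0 means fully smoothed)."""
    return max(values) - min(values)


# Path graph 0 - 1 - 2 - 3 with two feature "classes" (scalar features).
features = [0.0, 0.0, 1.0, 1.0]
edges = [(0, 1), (1, 2), (2, 3)]
layers = 3

smoothed = mean_aggregate(features, edges, layers)

up_features, up_edges = upsample_edges(features, edges)
# Compare only the original nodes, which occupy the first len(features) indices.
up_smoothed = mean_aggregate(up_features, up_edges, layers)[: len(features)]

print("spread without upsampling:", spread(smoothed))
print("spread with upsampling:   ", spread(up_smoothed))
```

In this toy setting the original nodes stay further apart in feature space after the same number of layers once intermediate nodes are inserted, because messages need more hops to travel between them. An adaptive approach would instead learn where to insert nodes rather than upsampling every edge.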
