Understanding the Impact of Data Distribution on Q-learning with Function Approximation


Nov 23, 2021

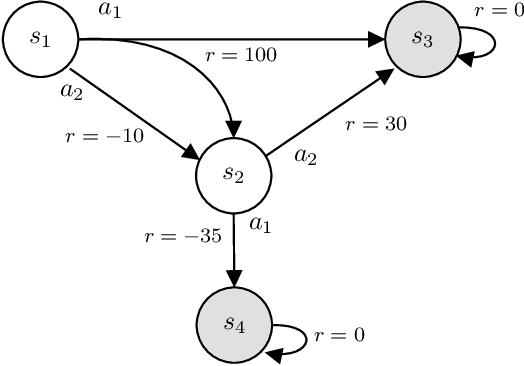

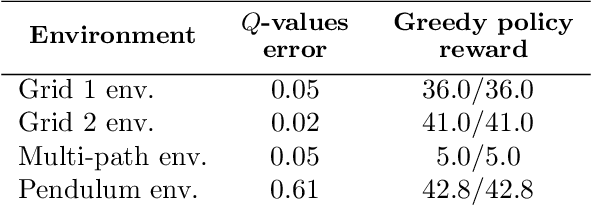

In this work, we study the interplay between the data distribution and Q-learning-based algorithms with function approximation. We provide a theoretical and empirical analysis of why different properties of the data distribution can help regulate sources of algorithmic instability. First, we revisit theoretical bounds on the performance of approximate dynamic programming algorithms. Second, we present a novel four-state MDP that highlights the impact of the data distribution on the performance of a Q-learning algorithm with function approximation, in both online and offline settings. Finally, we experimentally assess how properties of the data distribution affect the performance of an offline deep Q-network algorithm. Our results show that: (i) to learn robustly in an offline setting, the data distribution needs certain properties, namely a low distance to the distributions induced by the optimal policies of the MDP and high coverage of the state-action space; and (ii) high-entropy data distributions can help mitigate sources of algorithmic instability.
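The data-distribution properties named above (entropy, coverage of the state-action space, and distance to an optimal policy's distribution) can be made concrete for a small tabular dataset. The sketch below is illustrative only and is not the paper's code: it assumes an offline dataset given as a list of `(state, action)` pairs, and it uses total variation as the distance measure, which is an assumption since the abstract does not name the metric. All function names are hypothetical.

```python
import math
from collections import Counter


def empirical_distribution(dataset):
    """Normalized visitation frequencies of (state, action) pairs in an offline dataset."""
    counts = Counter(dataset)
    total = sum(counts.values())
    return {sa: c / total for sa, c in counts.items()}


def entropy(dist):
    """Shannon entropy (in nats) of a distribution given as {item: probability}."""
    return -sum(p * math.log(p) for p in dist.values() if p > 0)


def coverage(dist, num_states, num_actions):
    """Fraction of the state-action space that receives nonzero probability."""
    return sum(1 for p in dist.values() if p > 0) / (num_states * num_actions)


def total_variation(p, q):
    """Total variation distance between two distributions over (state, action) pairs.

    Assumption: TV is used here only as one possible distance measure.
    """
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


if __name__ == "__main__":
    # Hypothetical toy setting: 4 states, 2 actions (not the paper's MDP).
    data = [(0, 0), (0, 1), (1, 0), (1, 0)]
    mu = empirical_distribution(data)
    # Hypothetical distribution induced by an optimal policy.
    d_star = {(0, 0): 0.5, (1, 0): 0.5}
    print("entropy:", entropy(mu))
    print("coverage:", coverage(mu, num_states=4, num_actions=2))
    print("TV to optimal:", total_variation(mu, d_star))
```

Under this reading, a dataset that spreads its samples uniformly over all eight state-action pairs would reach the maximum entropy (ln 8) and full coverage (1.0). Its distance to a deterministic optimal policy's distribution could still be large, which illustrates why the abstract treats these properties separately.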
