Understanding the Effect of Data Augmentation on Knowledge Distillation

May 21, 2023

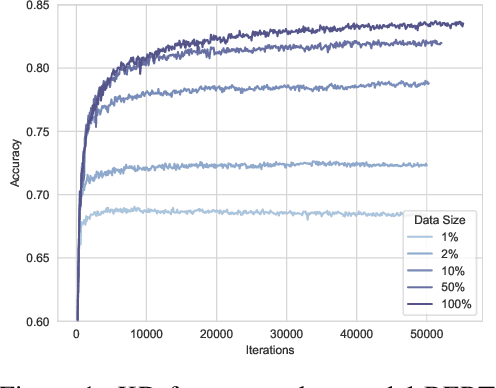

Knowledge distillation (KD) requires sufficient data to transfer knowledge from large-scale teacher models to small-scale student models. Data augmentation has therefore been widely used to mitigate data shortages in specific scenarios. Classic data augmentation techniques, such as synonym replacement and k-nearest-neighbors, were originally designed for fine-tuning. To avoid severe semantic shifts and preserve task-specific labels, these methods change only a small proportion of tokens (e.g., changing 10% of tokens is generally the best option for fine-tuning). However, such methods are sub-optimal for knowledge distillation, because the teacher model can provide label distributions for augmented inputs and is therefore more tolerant of semantic shifts. We first observe that KD prefers as much data as possible, unlike fine-tuning, where adding more data eventually stops improving performance. Since changing more tokens leads to larger semantic shifts, we use the proportion of changed tokens as a measure of the semantic shift degree. We then find that KD prefers augmented data with a larger semantic shift degree (e.g., changing 30% of tokens is generally the best option for KD) than fine-tuning does (changing 10% of tokens). In addition, our findings show that smaller datasets prefer larger degrees until the out-of-distribution problem occurs (e.g., datasets with fewer than 10k inputs may prefer the 50% degree, while datasets with more than 100k inputs may prefer the 10% degree). Our work sheds light on the difference in data augmentation preferences between fine-tuning and knowledge distillation, and encourages the community to explore KD-specific data augmentation methods.
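The two ideas in the abstract, a semantic shift degree defined as the proportion of changed tokens and a teacher that labels augmented inputs with a distribution rather than a hard label, can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function names, the `candidates` substitute pool (a stand-in for synonym or k-nearest-neighbor lookup), and the choice of a temperature-softened KL divergence as the distillation loss are all assumptions made for illustration.

```python
import math
import random


def replace_tokens(tokens, ratio, candidates, rng):
    """Replace roughly `ratio` of the tokens with substitutes.

    `candidates` is a hypothetical substitute pool standing in for a
    synonym or nearest-neighbor lookup; the source does not specify one.
    """
    n_change = round(ratio * len(tokens))
    positions = rng.sample(range(len(tokens)), n_change)
    out = list(tokens)
    for i in positions:
        # Only consider substitutes that differ from the original token.
        options = [c for c in candidates if c != tokens[i]]
        out[i] = rng.choice(options)
    return out


def changed_fraction(original, augmented):
    """Proportion of positions whose token differs (the shift degree)."""
    return sum(a != b for a, b in zip(original, augmented)) / len(original)


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kd_loss(student_logits, teacher_logits, temperature=1.0):
    """KL(teacher || student) on softened distributions (assumed loss)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


if __name__ == "__main__":
    rng = random.Random(0)
    sentence = "the movie was slow but the ending felt earned overall".split()
    pool = ["film", "dull", "finale", "deserved"]  # illustrative only
    for ratio in (0.1, 0.3, 0.5):
        augmented = replace_tokens(sentence, ratio, pool, rng)
        print(ratio, changed_fraction(sentence, augmented), augmented)
```

Under these assumptions, a fine-tuning pipeline would have to keep the original hard label for every augmented sentence, which is why a small `ratio` is safer there. In a distillation pipeline the teacher's logits for the augmented sentence feed `kd_loss` directly, so a larger `ratio` does not require the original label to remain valid.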
