Understanding Adversarial Imitation Learning in Small Sample Regime: A Stage-coupled Analysis

Aug 03, 2022

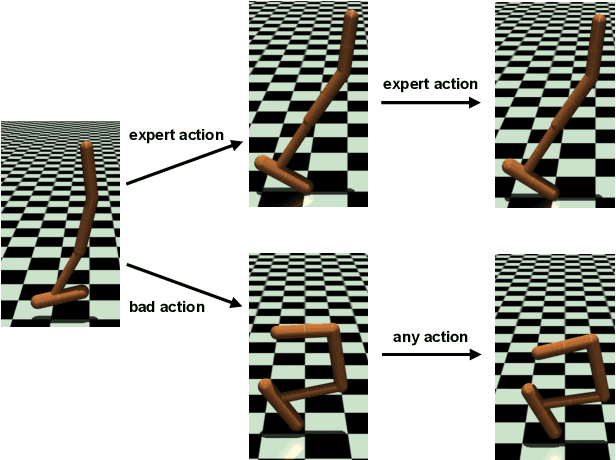

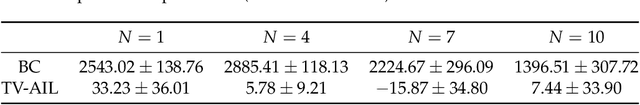

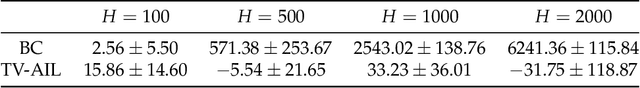

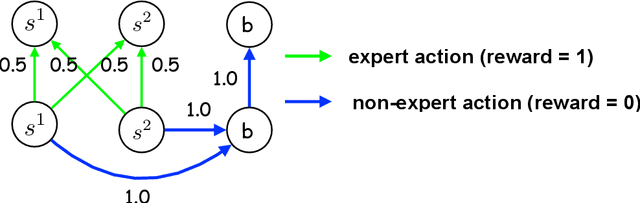

Imitation learning learns a policy from expert trajectories. While expert data is believed to be crucial for imitation quality, it has been found that one class of imitation learning methods, adversarial imitation learning (AIL), can perform exceptionally well. With as little as one expert trajectory, AIL can match expert performance even over long horizons, on tasks such as locomotion control. Two aspects of this phenomenon are puzzling. First, why can AIL perform well with only a few expert trajectories? Second, why does AIL maintain good performance regardless of the length of the planning horizon? In this paper, we explore these two questions theoretically. For a total-variation-distance-based AIL (called TV-AIL), our analysis shows a horizon-free imitation gap of $\mathcal O(\min\{1, \sqrt{|\mathcal S|/N}\})$ on a class of instances abstracted from locomotion control tasks. Here $|\mathcal S|$ is the state space size of a tabular Markov decision process, and $N$ is the number of expert trajectories. We emphasize two important features of our bound. First, the bound is meaningful in both the small and large sample regimes. Second, the bound suggests that the imitation gap of TV-AIL is at most 1 regardless of the planning horizon. Therefore, the bound can explain the empirical observation. Technically, we leverage the structure of multi-stage policy optimization in TV-AIL and present a new stage-coupled analysis via dynamic programming.
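To make the two regimes of the bound concrete, the following minimal Python sketch evaluates the rate $\min\{1, \sqrt{|\mathcal S|/N}\}$ for a few sample sizes. The function name `tv_ail_gap_rate` and the example values are hypothetical, and the constant hidden by the $\mathcal O(\cdot)$ notation is dropped, so the numbers show only how the rate scales, not the actual imitation gap.

```python
import math


def tv_ail_gap_rate(num_states: int, num_trajectories: int) -> float:
    """Rate min{1, sqrt(|S| / N)} from the abstract, up to an unknown constant.

    Hypothetical helper for illustration only. The planning horizon is
    deliberately not an argument, because the stated bound is horizon-free.
    """
    if num_states <= 0 or num_trajectories <= 0:
        raise ValueError("num_states and num_trajectories must be positive")
    return min(1.0, math.sqrt(num_states / num_trajectories))


if __name__ == "__main__":
    num_states = 100  # assumed |S|, chosen only for this example
    for n in (1, 10, 100, 400, 10_000):
        rate = tv_ail_gap_rate(num_states, n)
        print(f"N = {n:>6}: rate = {rate:.3f}")
```

When $N < |\mathcal S|$ (the small sample regime), the rate is capped at 1; once $N$ exceeds $|\mathcal S|$, it decays as $\sqrt{|\mathcal S|/N}$.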
