Uncertainty-Guided Mutual Consistency Learning for Semi-Supervised Medical Image Segmentation


Dec 05, 2021

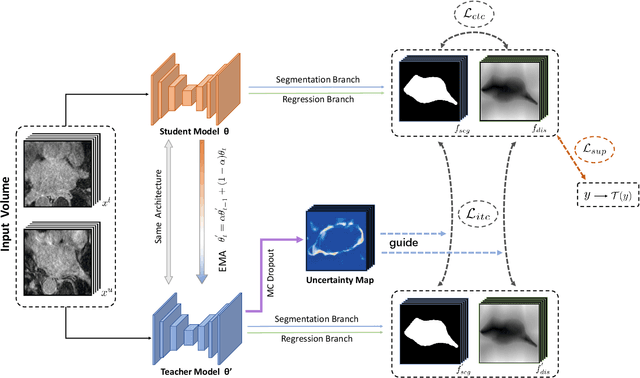

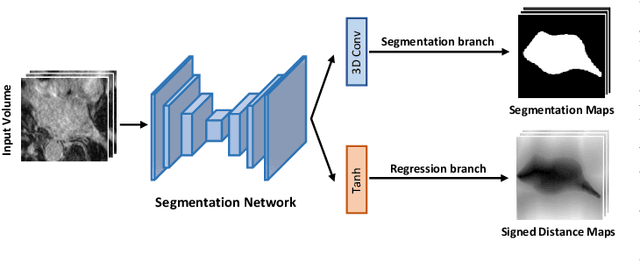

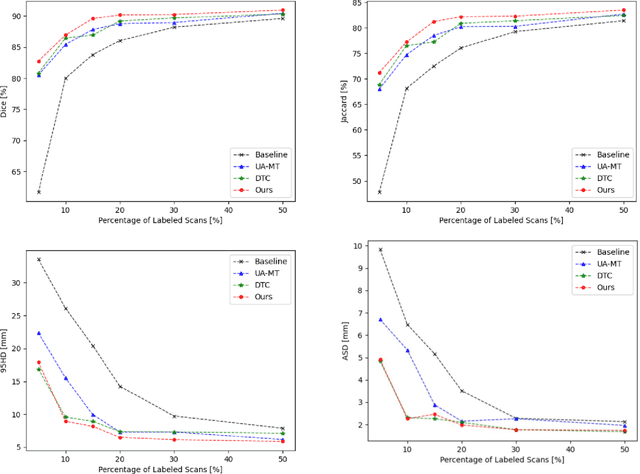

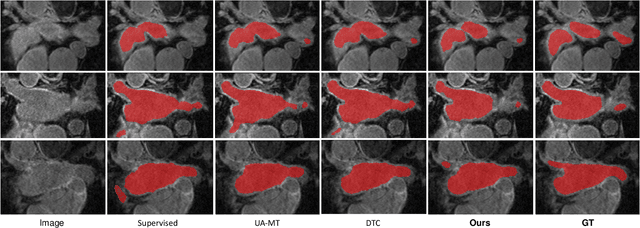

Medical image segmentation is a fundamental and critical step in many clinical approaches. Semi-supervised learning has been widely applied to medical image segmentation because it alleviates the heavy burden of acquiring expert-examined annotations and takes advantage of unlabeled data, which are much easier to acquire. Although consistency learning has proven effective by enforcing invariance of predictions under different distributions, existing approaches cannot make full use of region-level shape constraints and boundary-level distance information from unlabeled data. In this paper, we propose a novel uncertainty-guided mutual consistency learning framework that exploits unlabeled data by integrating two components: intra-task consistency learning from up-to-date predictions for self-ensembling, and cross-task consistency learning from task-level regularization to exploit geometric shape information. The framework is guided by the models' estimated segmentation uncertainty to select relatively certain predictions for consistency learning, so that more reliable information is drawn from unlabeled data. We extensively validate the proposed method on two publicly available benchmark datasets: the Left Atrium Segmentation (LA) dataset and the Brain Tumor Segmentation (BraTS) dataset. Experimental results demonstrate that our method achieves performance gains by leveraging unlabeled data and outperforms existing semi-supervised segmentation methods.
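The idea of selecting relatively certain predictions for consistency learning can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: the abstract does not specify the uncertainty estimator or the loss, so the use of predictive entropy, a fixed `threshold`, a squared-error consistency term, and the function names `predictive_entropy` and `masked_consistency_loss` are all assumptions made here for illustration.

```python
import numpy as np


def predictive_entropy(probs, eps=1e-8):
    """Per-voxel entropy of class probabilities with shape (C, ...).

    Assumption: entropy stands in for the uncertainty estimate;
    the abstract does not say which estimator is used.
    """
    return -np.sum(probs * np.log(probs + eps), axis=0)


def masked_consistency_loss(probs_a, probs_b, threshold):
    """Squared-error consistency between two predictions, averaged
    only over voxels where probs_b is relatively certain.

    probs_a, probs_b: arrays of shape (C, ...) holding class probabilities.
    threshold: hypothetical entropy cutoff; voxels above it are ignored.
    """
    uncertainty = predictive_entropy(probs_b)
    mask = (uncertainty < threshold).astype(probs_a.dtype)
    # Sum the squared difference over the class axis, leaving one value per voxel.
    sq_diff = np.sum((probs_a - probs_b) ** 2, axis=0)
    # The small constant avoids division by zero when no voxel passes the mask.
    return float(np.sum(mask * sq_diff) / (np.sum(mask) + 1e-8))
```

In this sketch, a voxel where the reference prediction is close to uniform contributes nothing to the loss, so the consistency signal comes only from voxels the reference model is confident about.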
