Transfer Learning based Search Space Design for Hyperparameter Tuning

Jun 06, 2022

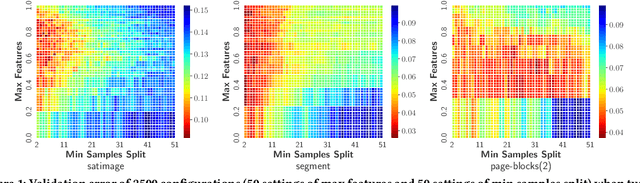

Hyperparameter tuning has become increasingly important as machine learning (ML) models are applied extensively in data mining applications. Among the various approaches, Bayesian optimization (BO) is a successful methodology for tuning hyperparameters automatically. While traditional methods optimize each tuning task in isolation, there has been recent interest in speeding up BO by transferring knowledge from previous tasks. In this work, we introduce an automatic method that designs the BO search space with the aid of tuning history from past tasks. This simple yet effective approach can endow many existing BO methods with transfer learning capabilities. In addition, it offers three advantages: universality, generality, and safeness. Extensive experiments show that our approach considerably boosts BO by designing a promising and compact search space instead of using the entire space, and that it outperforms state-of-the-art methods on a wide range of benchmarks, including machine learning and deep learning tuning tasks and neural architecture search.
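To make the idea of a "compact search space" concrete, the following is a minimal sketch and not the method proposed in the paper. It assumes a much simpler baseline: take the best few configurations from each past task and use their bounding box as the reduced space. The function name `compact_search_space`, the `top_k` parameter, and the convention that lower objective values are better are all assumptions made for this illustration.

```python
import numpy as np

def compact_search_space(histories, full_low, full_high, top_k=2):
    """Return (low, high) bounds of a reduced box-shaped search space.

    histories: list of (X, y) pairs, one per past task, where X has shape
               (n_trials, n_dims) and y has shape (n_trials,).
               Assumption: lower y is better.
    full_low, full_high: bounds of the original search space.
    top_k: hypothetical knob, number of best trials kept per past task.
    """
    full_low = np.asarray(full_low, dtype=float)
    full_high = np.asarray(full_high, dtype=float)

    best_configs = []
    for X, y in histories:
        X = np.asarray(X, dtype=float)
        y = np.asarray(y, dtype=float)
        k = min(top_k, len(y))
        if k == 0:
            continue
        # Indices of the k lowest objective values in this task.
        idx = np.argsort(y)[:k]
        best_configs.append(X[idx])

    # With no usable history, fall back to the entire space.
    if not best_configs:
        return full_low, full_high

    stacked = np.vstack(best_configs)
    # Bounding box of the good configurations, clipped to the full space.
    low = np.maximum(stacked.min(axis=0), full_low)
    high = np.minimum(stacked.max(axis=0), full_high)
    return low, high
```

The returned bounds can be handed to any BO routine that accepts box constraints, which is one way an existing optimizer could benefit from past tuning history without changes to its surrogate model or acquisition function. A plain bounding box can exclude the optimum of a dissimilar new task, so this sketch should be read only as an illustration of the general idea.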
