Timely Communication from Sensors for Wireless Networked Control in Cloud-Based Digital Twins


Aug 05, 2024

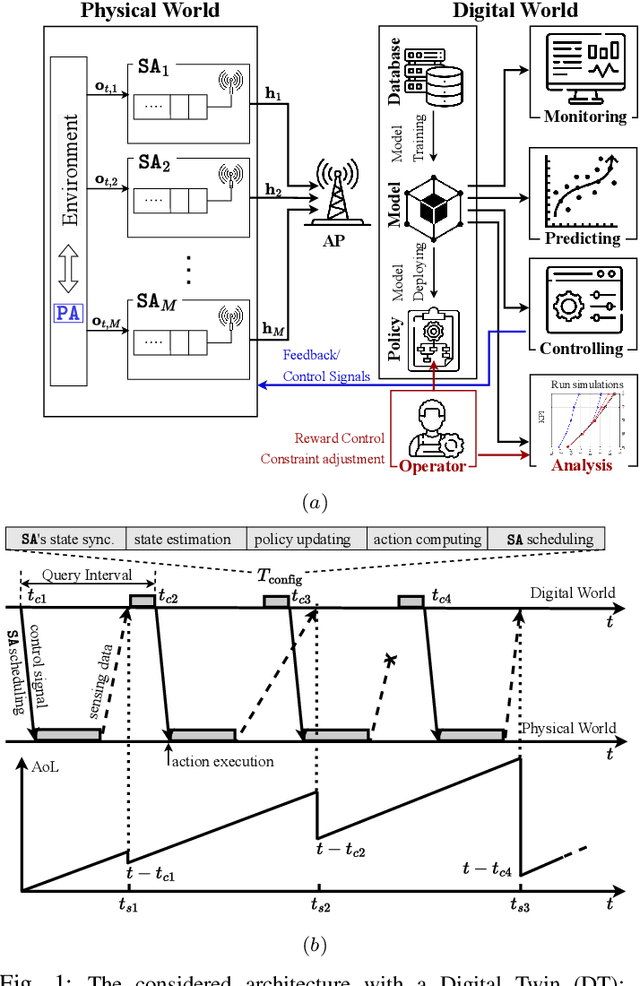

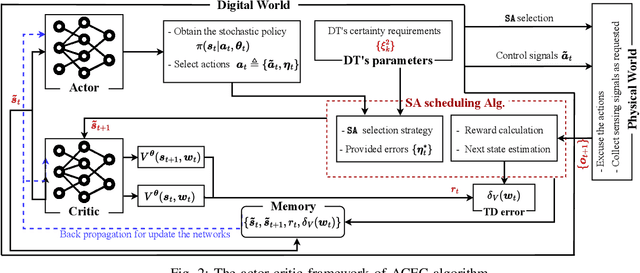

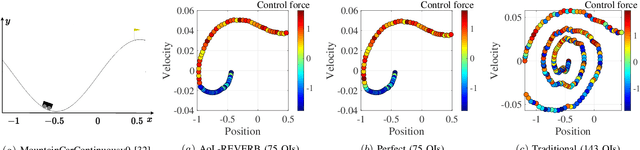

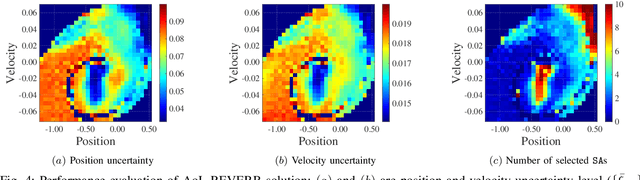

We consider a Wireless Networked Control System (WNCS) in which sensors provide observations used to build a Digital Twin (DT) model of the underlying system dynamics. The focus is on control, scheduling, and resource allocation for sensory observations so that they are delivered in a timely manner to the DT model deployed in the cloud. Timely and relevant information, as characterized by an optimized data acquisition policy and low latency, is instrumental in ensuring that the DT model can accurately estimate and predict system states. However, optimizing closed-loop control with the DT while acquiring data for efficient state estimation and control computation is a non-trivial problem, given the limited network resources, partial state vector information, and measurement errors encountered at distributed sensing agents. To address this, we propose the *Age-of-Loop REinforcement learning and Variational Extended Kalman filter with Robust Belief (AoL-REVERB)*, which combines an uncertainty-control reinforcement learning solution with an algorithm based on Value of Information (VoI) to perform optimal control and to select the most informative sensors so that the prediction accuracy requirement of the DT is satisfied. Numerical results demonstrate that the DT platform can offer satisfactory performance while halving the communication overhead.
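To make the idea of selecting the most informative sensors concrete, the following is a minimal sketch and not the AoL-REVERB algorithm itself. It assumes a simplified setting that the abstract does not specify: each sensor measures a single state component with a known noise variance, and the value of a sensor is approximated by how much it reduces the trace of a Kalman filter covariance. The function names `update_covariance`, `trace`, and `select_sensors`, and the `budget` parameter, are hypothetical.

```python
# Illustrative sketch only: greedy sensor selection using the reduction in
# Kalman covariance trace as a stand-in for Value of Information (VoI).
# Assumption: each sensor observes one state component with scalar noise.

def update_covariance(P, idx, r):
    """Kalman covariance update for a scalar measurement of state
    component `idx` with measurement noise variance `r`."""
    n = len(P)
    s = P[idx][idx] + r  # innovation variance
    return [[P[a][b] - P[a][idx] * P[idx][b] / s for b in range(n)]
            for a in range(n)]


def trace(P):
    """Sum of the diagonal entries (total state uncertainty)."""
    return sum(P[i][i] for i in range(len(P)))


def select_sensors(P, sensors, budget):
    """Greedily pick up to `budget` sensors.

    `sensors` is a list of (state_index, noise_variance) pairs.
    Returns the chosen sensor indices and the posterior covariance.
    """
    chosen = []
    remaining = list(range(len(sensors)))
    for _ in range(min(budget, len(sensors))):
        # Pick the sensor whose update removes the most uncertainty.
        best = max(
            remaining,
            key=lambda j: trace(P) - trace(update_covariance(P, *sensors[j])),
        )
        P = update_covariance(P, *sensors[best])
        chosen.append(best)
        remaining.remove(best)
    return chosen, P


if __name__ == "__main__":
    prior = [[4.0, 0.0], [0.0, 1.0]]
    sensors = [(0, 1.0), (1, 0.1), (0, 4.0)]
    picked, posterior = select_sensors(prior, sensors, budget=2)
    print("picked sensors:", picked)
    print("posterior trace:", trace(posterior))
```

In this toy setup, limiting `budget` to a subset of the available sensors plays the role of reducing communication overhead: only the sensors expected to shrink the estimator's uncertainty the most are scheduled to transmit.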
