The trade-off between long-term memory and smoothness for recurrent networks

Jul 01, 2019

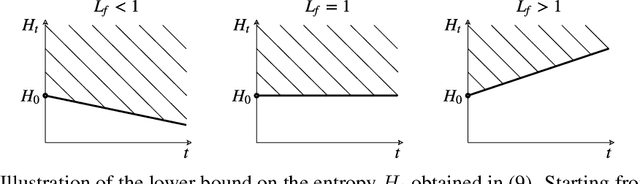

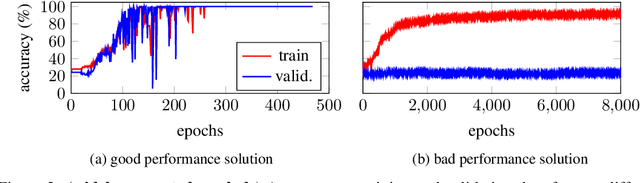

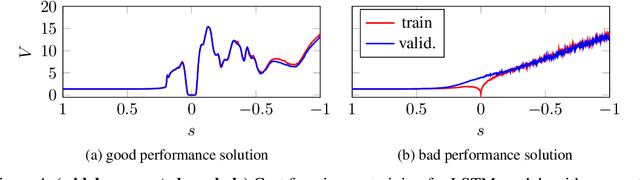

Training recurrent neural networks (RNNs) that possess long-term memory is challenging. We provide insight into the trade-off between the smoothness of the cost function and the memory retention capabilities of the network. We express both aspects in terms of the Lipschitz constant of the dynamics modeled by the network. This allows us to distinguish three types of regions in the parameter space. In the first region, the network has difficulty retaining long-term information, while the cost function is smooth and easy for gradient descent to navigate. In the second region, the amount of stored information increases with time, and the cost function is intricate and full of local minima. The third region lies between the other two; here the RNN is able to retain long-term information. Based on these theoretical findings, we present the hypothesis that good parameter choices for the RNN are located between the well-behaved and the ill-behaved cost function regions. The concepts presented in the paper are illustrated with artificially generated and real examples.
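
To make the three regions concrete, here is a minimal sketch that is not taken from the paper. It assumes a scalar linear recurrence `h_{t+1} = a * h_t + x_t`, whose Lipschitz constant is `|a|`, and measures how strongly the final state depends on the initial one. The function names and the thresholds `low` and `high` are hypothetical choices made for illustration only.

```python
def state_sensitivity(a: float, steps: int) -> float:
    """Return |d h_T / d h_0| for the toy recurrence h_{t+1} = a * h_t + x_t.

    Each step multiplies the derivative by a, so the inputs x_t do not
    affect the result and are left out.
    """
    sensitivity = 1.0
    for _ in range(steps):
        sensitivity *= abs(a)  # chain rule: one factor of |a| per time step
    return sensitivity


def classify_region(a: float, steps: int,
                    low: float = 1e-3, high: float = 1e3) -> str:
    """Label the parameter a by how the sensitivity behaves over `steps` steps.

    The thresholds are arbitrary illustration values (an assumption).
    """
    s = state_sensitivity(a, steps)
    if s < low:
        return "forgetting"   # Lipschitz constant below 1: memory decays
    if s > high:
        return "expanding"    # Lipschitz constant above 1: sensitivity blows up
    return "boundary"         # Lipschitz constant near 1: information is retained


if __name__ == "__main__":
    for a in (0.5, 1.0, 1.5):
        print(a, state_sensitivity(a, 50), classify_region(a, 50))
```

In this toy setting the same quantity also scales the gradient of a loss on the final state with respect to the initial state, which is one way to see why the region with good memory sits next to the region where the cost function becomes hard to optimize.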
