The signature of robot action success in EEG signals of a human observer: Decoding and visualization using deep convolutional neural networks


Nov 16, 2017

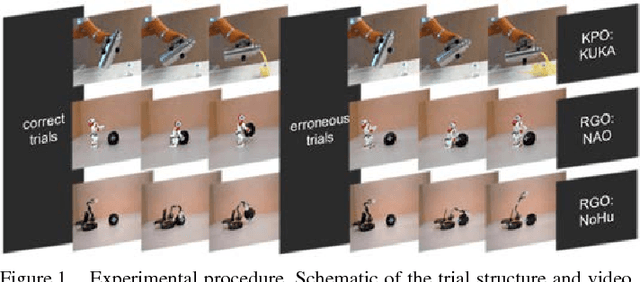

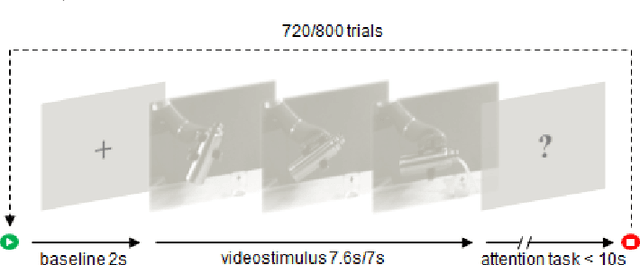

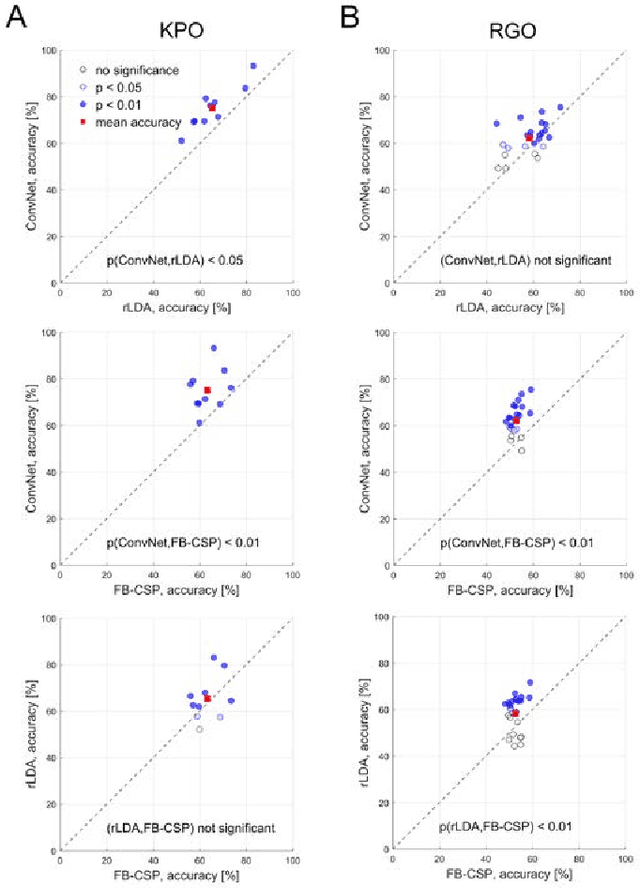

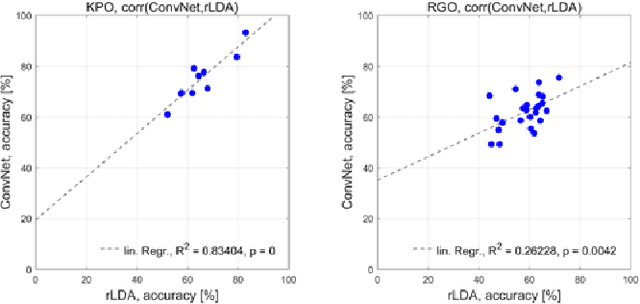

The importance of robotic assistive devices is growing in both work and everyday life. Cooperative scenarios involving both robots and humans require safe human-robot interaction. One important aspect is the management of robot errors, including fast and accurate online detection and correction of those errors. Analysis of brain signals from a human interacting with a robot may help identify robot errors, but the accuracy of such analyses still leaves substantial room for improvement. In this paper we evaluate whether a novel framework based on deep convolutional neural networks (deep ConvNets) could improve the accuracy of decoding robot errors from the EEG of a human observer, during both an object-grasping task and a pouring task. We show that deep ConvNets reached significantly higher accuracies than both regularized Linear Discriminant Analysis (rLDA) and filter bank common spatial patterns (FB-CSP) combined with rLDA, two widely used EEG classifiers. For decoding erroneous vs. correct trials, deep ConvNets reached mean accuracies of 75% ± 9%, rLDA 65% ± 10%, and FB-CSP + rLDA 63% ± 6%. Visualization of the time-domain EEG features that the ConvNets learned for error decoding revealed spatiotemporal patterns that reflected differences between the two experimental paradigms. Across subjects, ConvNet decoding accuracies were significantly correlated with those obtained with rLDA, but not with those obtained with CSP. This indicates that in the present context ConvNets behaved more 'rLDA-like' (but consistently better), whereas in a previous decoding study with a different task but the same ConvNet architecture, the ConvNet was found to behave more 'CSP-like'. Our findings thus provide further support for the assumption that deep ConvNets are a versatile addition to the existing toolbox of EEG decoding techniques, and we discuss how ConvNet EEG decoding performance could be further optimized.
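The across-subject comparison described in the abstract can be illustrated with a minimal sketch. It assumes a Pearson correlation, which the abstract does not confirm, and the per-subject accuracies below are hypothetical placeholders rather than values from the paper. The helper name `pearson_r` is likewise an illustrative choice.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of products of deviations (unnormalized covariance).
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Sums of squared deviations (unnormalized variances).
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical per-subject accuracies (placeholders, NOT values from the paper).
convnet_acc = [0.70, 0.82, 0.66, 0.79, 0.74]
rlda_acc = [0.61, 0.72, 0.55, 0.70, 0.66]

r = pearson_r(convnet_acc, rlda_acc)
print(f"Pearson r across subjects: {r:.2f}")
```

A high positive coefficient here would mean that subjects who are decoded well by one classifier also tend to be decoded well by the other, which is the sense in which the abstract calls the ConvNets 'rLDA-like'. A significance test on the coefficient would be a separate step that this sketch omits.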
