The impact of tissue detection on diagnostic artificial intelligence algorithms in digital pathology

Paper and Code

Mar 29, 2025

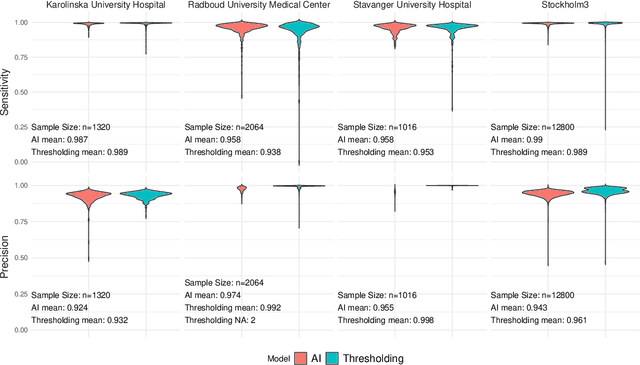

Tissue detection is a crucial first step in most digital pathology applications. Details of the segmentation algorithm are rarely reported, and there is a lack of studies investigating the downstream effects of a poor segmentation algorithm. Disregarding tissue detection quality could create a bottleneck for downstream performance and jeopardize patient safety if diagnostically relevant parts of the specimen are excluded from analysis in clinical applications. This study aims to determine whether performance of downstream tasks is sensitive to the tissue detection method, and to compare performance of classical and AI-based tissue detection. To this end, we trained an AI model for Gleason grading of prostate cancer in whole slide images (WSIs) using two different tissue detection algorithms: thresholding (classical) and UNet++ (AI). A total of 33,823 WSIs scanned on five digital pathology scanners were used to train the tissue detection AI model. The downstream Gleason grading algorithm was trained and tested using 70,524 WSIs from 13 clinical sites scanned on 13 different scanners. There was a decrease from 116 (0.43%) to 22 (0.08%) fully undetected tissue samples when switching from thresholding-based tissue detection to AI-based, suggesting an AI model may be more reliable than a classical model for avoiding total failures on slides with unusual appearance. On the slides where tissue could be detected by both algorithms, no significant difference in overall Gleason grading performance was observed. However, tissue detection dependent clinically significant variations in AI grading were observed in 3.5% of malignant slides, highlighting the importance of robust tissue detection for optimal clinical performance of diagnostic AI.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge