The Expressive Power of Word Embeddings

Paper and Code

May 29, 2013

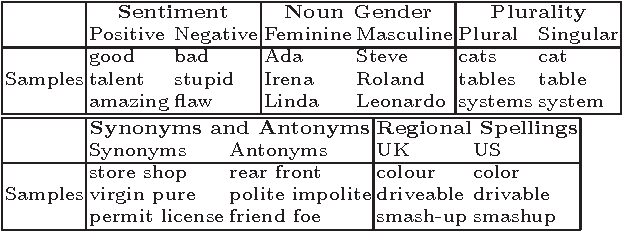

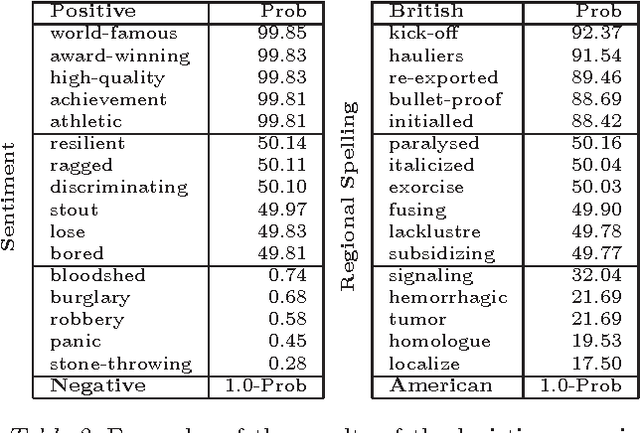

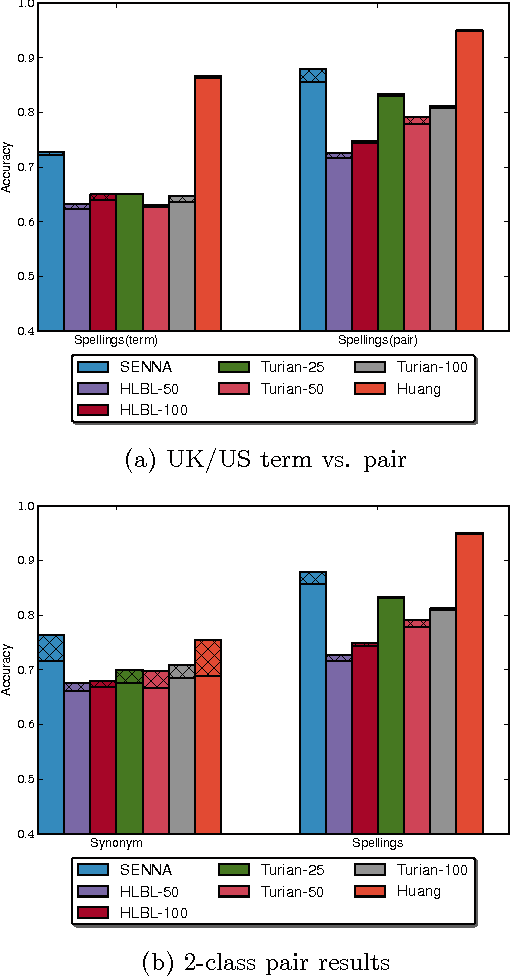

We seek to better understand the difference in quality of the several publicly released embeddings. We propose several tasks that help to distinguish the characteristics of different embeddings. Our evaluation of sentiment polarity and synonym/antonym relations shows that embeddings are able to capture surprisingly nuanced semantics even in the absence of sentence structure. Moreover, benchmarking the embeddings shows great variance in quality and characteristics of the semantics captured by the tested embeddings. Finally, we show the impact of varying the number of dimensions and the resolution of each dimension on the effective useful features captured by the embedding space. Our contributions highlight the importance of embeddings for NLP tasks and the effect of their quality on the final results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge