Tencent AVS: A Holistic Ads Video Dataset for Multi-modal Scene Segmentation

Dec 09, 2022

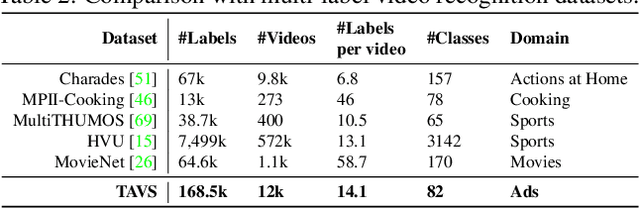

Public benchmarks have greatly advanced temporal video segmentation and classification in recent years. However, this research still focuses mainly on human actions and fails to describe videos holistically. In addition, previous work tends to concentrate on visual information while ignoring the multi-modal nature of videos. To fill this gap, we construct the Tencent 'Ads Video Segmentation' (TAVS) dataset in the ads domain to advance multi-modal video analysis. TAVS describes videos from three independent perspectives, 'presentation form', 'place', and 'style', and contains rich multi-modal information such as video, audio, and text. TAVS is organized hierarchically by semantics for comprehensive temporal video segmentation, with three levels of categories for multi-label classification, e.g., 'place' - 'working place' - 'office'. TAVS is therefore distinguished from previous temporal segmentation datasets by its multi-modal information, holistic view of categories, and hierarchical granularities. It includes 12,000 videos, 82 classes, 33,900 segments, 121,100 shots, and 168,500 labels. Alongside TAVS, we also present a strong multi-modal video segmentation baseline coupled with multi-label class prediction. Extensive experiments evaluate our proposed method as well as existing representative methods to reveal the key challenges of TAVS.
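
The three-level category hierarchy can be illustrated with a minimal Python sketch. It is not the dataset's actual annotation format: the `Segment` class, its field names, the `expand_labels` helper, and the timestamps are all hypothetical, and the only label path used is the 'place' - 'working place' - 'office' example from the abstract. The sketch shows how one leaf-level annotation might imply a label at every level of the hierarchy, which is one way to read "three levels of categories for multi-label classification".

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple


@dataclass
class Segment:
    """Hypothetical record for one temporal segment of an ad video."""
    start_sec: float
    end_sec: float
    # Each path runs from the top-level perspective down to the finest category.
    label_paths: List[Tuple[str, ...]] = field(default_factory=list)


def expand_labels(segment: Segment) -> Set[str]:
    """Collect the label implied at every hierarchy level of every path."""
    labels: Set[str] = set()
    for path in segment.label_paths:
        for depth in range(1, len(path) + 1):
            labels.add(" / ".join(path[:depth]))
    return labels


# Illustrative segment; the timestamps are made up for the example.
segment = Segment(
    start_sec=0.0,
    end_sec=4.5,
    label_paths=[("place", "working place", "office")],
)

for label in sorted(expand_labels(segment)):
    print(label)
```

Under this assumed representation, a segment annotated with several paths (for instance one per perspective) would simply carry the union of their expanded labels as its multi-label target.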
