Temporal Pyramid Pooling Based Convolutional Neural Networks for Action Recognition

Paper and Code

Apr 16, 2015

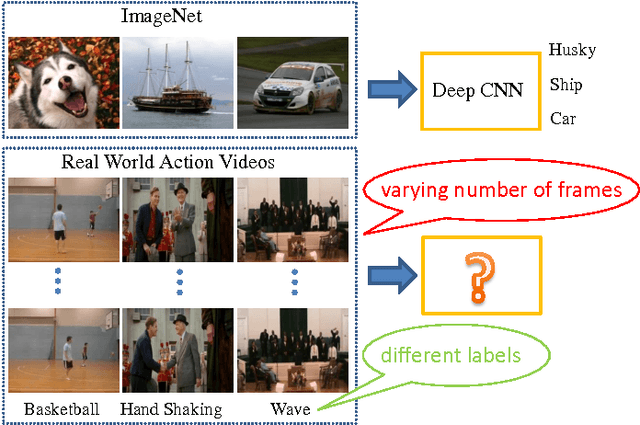

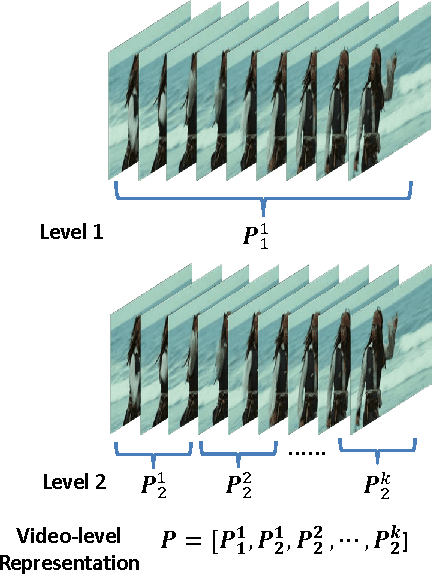

Encouraged by the success of Convolutional Neural Networks (CNNs) in image classification, recently much effort is spent on applying CNNs to video based action recognition problems. One challenge is that video contains a varying number of frames which is incompatible to the standard input format of CNNs. Existing methods handle this issue either by directly sampling a fixed number of frames or bypassing this issue by introducing a 3D convolutional layer which conducts convolution in spatial-temporal domain. To solve this issue, here we propose a novel network structure which allows an arbitrary number of frames as the network input. The key of our solution is to introduce a module consisting of an encoding layer and a temporal pyramid pooling layer. The encoding layer maps the activation from previous layers to a feature vector suitable for pooling while the temporal pyramid pooling layer converts multiple frame-level activations into a fixed-length video-level representation. In addition, we adopt a feature concatenation layer which combines appearance information and motion information. Compared with the frame sampling strategy, our method avoids the risk of missing any important frames. Compared with the 3D convolutional method which requires a huge video dataset for network training, our model can be learned on a small target dataset because we can leverage the off-the-shelf image-level CNN for model parameter initialization. Experiments on two challenging datasets, Hollywood2 and HMDB51, demonstrate that our method achieves superior performance over state-of-the-art methods while requiring much fewer training data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge