Sympathy Begins with a Smile, Intelligence Begins with a Word: Use of Multimodal Features in Spoken Human-Robot Interaction

Paper and Code

Jun 08, 2017

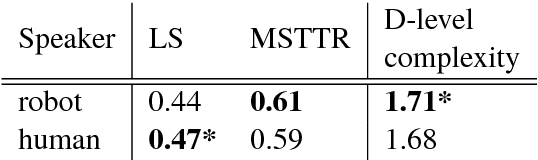

Recognition of social signals, from human facial expressions or prosody of speech, is a popular research topic in human-robot interaction studies. There is also a long line of research in the spoken dialogue community that investigates user satisfaction in relation to dialogue characteristics. However, very little research relates a combination of multimodal social signals and language features detected during spoken face-to-face human-robot interaction to the resulting user perception of a robot. In this paper we show how different emotional facial expressions of human users, in combination with prosodic characteristics of human speech and features of human-robot dialogue, correlate with users' impressions of the robot after a conversation. We find that happiness in the user's recognised facial expression strongly correlates with likeability of a robot, while dialogue-related features (such as number of human turns or number of sentences per robot utterance) correlate with perceiving a robot as intelligent. In addition, we show that facial expression, emotional features, and prosody are better predictors of human ratings related to perceived robot likeability and anthropomorphism, while linguistic and non-linguistic features more often predict perceived robot intelligence and interpretability. As such, these characteristics may in future be used as an online reward signal for in-situ Reinforcement Learning based adaptive human-robot dialogue systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge