Supporting Video Queries on Zero-Streaming Cameras


Apr 30, 2019

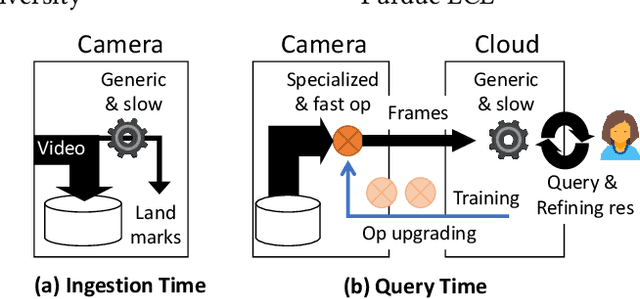

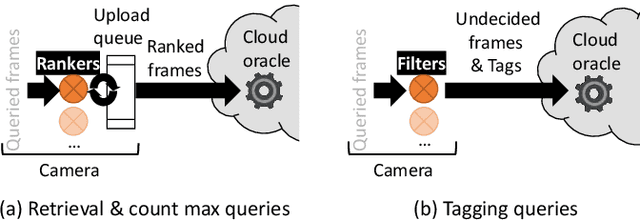

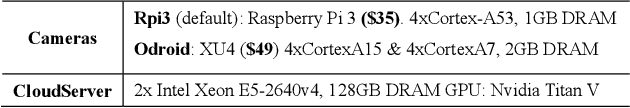

As low-cost surveillance cameras proliferate, we advocate for these cameras to be zero-streaming: they ingest video directly into local storage and communicate with the cloud only in response to queries. To support queries over videos stored on zero-streaming cameras, we describe a system that spans the cloud and the cameras. The system builds on two unconventional ideas. First, when ingesting video frames, a camera learns accurate knowledge on a sparse sample of frames, rather than inaccurate knowledge on all frames. Second, when executing a query, a camera processes frames in multiple passes with multiple operators that the cloud trains and picks during the query, rather than in a single pass with operator(s) decided ahead of the query. On diverse queries over 720-hour videos, with typical wireless network bandwidth and low-cost camera hardware, our system runs at more than 100x video realtime. It outperforms competitive alternative designs by at least 4x and by up to two orders of magnitude.
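The two ideas can be illustrated with a toy sketch. This is not the paper's implementation: the function names `ingest_sparse` and `multipass_query`, the fixed sampling interval, and the score thresholds are all hypothetical, and real operators would be neural networks trained by the cloud rather than plain Python functions. The sketch only shows the control flow: accurate labels on a sparse sample at ingestion, then several query-time passes in which each operator settles the frames it is confident about and hands the rest to the next operator.

```python
from typing import Callable, Dict, List, Set, Tuple

# Hypothetical operator shape: (score_fn, low, high).
# score_fn maps a frame index to a score in [0, 1].
Operator = Tuple[Callable[[int], float], float, float]


def ingest_sparse(num_frames: int, interval: int,
                  accurate_label: Callable[[int], bool]) -> Dict[int, bool]:
    """Ingestion: label only every `interval`-th frame, using an accurate
    (assumed expensive) detector, instead of labeling all frames cheaply."""
    return {i: accurate_label(i) for i in range(0, num_frames, interval)}


def multipass_query(num_frames: int, landmarks: Dict[int, bool],
                    operators: List[Operator]) -> Set[int]:
    """Query: run operators in order, one pass each. A frame scoring at or
    above `high` is accepted, at or below `low` is rejected, and anything in
    between is deferred to the next pass. Frames still undecided after the
    last pass are treated as negative."""
    # Frames labeled at ingestion need no further processing.
    positives = {i for i, hit in landmarks.items() if hit}
    undecided = [i for i in range(num_frames) if i not in landmarks]
    for score_fn, low, high in operators:
        deferred = []
        for i in undecided:
            score = score_fn(i)
            if score >= high:
                positives.add(i)
            elif score > low:
                deferred.append(i)
        undecided = deferred
    return positives
```

In this sketch a cheap operator with wide thresholds would typically run first and a slower, more accurate operator with `low == high` would run last, so that most frames are settled before the expensive pass. How operators are actually trained and ordered is decided by the cloud during the query, which the sketch does not model.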
