Strategic Evaluation: Subjects, Evaluators, and Society

Paper and Code

Oct 05, 2023

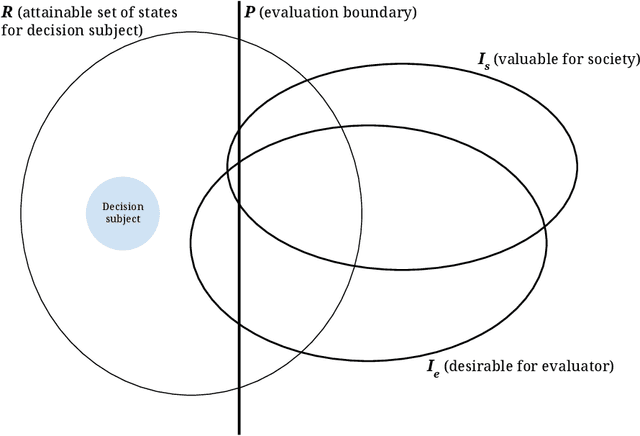

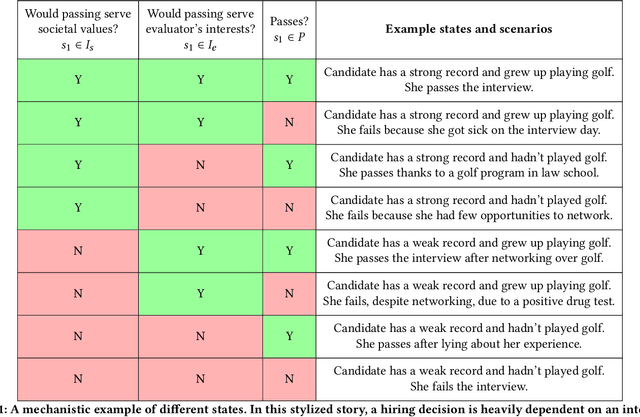

A broad current application of algorithms is in formal and quantitative measures of murky concepts -- like merit -- to make decisions. When people strategically respond to these sorts of evaluations in order to gain favorable decision outcomes, their behavior can be subjected to moral judgments. They may be described as 'gaming the system' or 'cheating,' or (in other cases) investing 'honest effort' or 'improving.' Machine learning literature on strategic behavior has tried to describe these dynamics by emphasizing the efforts expended by decision subjects hoping to obtain a more favorable assessment -- some works offer ways to preempt or prevent such manipulations, some differentiate 'gaming' from 'improvement' behavior, while others aim to measure the effort burden or disparate effects of classification systems. We begin from a different starting point: that the design of an evaluation itself can be understood as furthering goals held by the evaluator, which may be misaligned with broader societal goals. To develop the idea that evaluation represents a strategic interaction in which both the evaluator and the subject of their evaluation are operating out of self-interest, we put forward a model that represents the process of evaluation using three interacting agents: a decision subject, an evaluator, and society, representing a bundle of values and oversight mechanisms. We highlight our model's applicability to a number of social systems where one or two players strategically undermine the others' interests to advance their own. Treating evaluators as themselves strategic allows us to re-cast the scrutiny directed at decision subjects towards the incentives that underpin institutional designs of evaluations. The moral standing of strategic behaviors often depends on the moral standing of the evaluations and incentives that provoke such behaviors.
