Split Time Series into Patches: Rethinking Long-term Series Forecasting with Dateformer


Jul 12, 2022

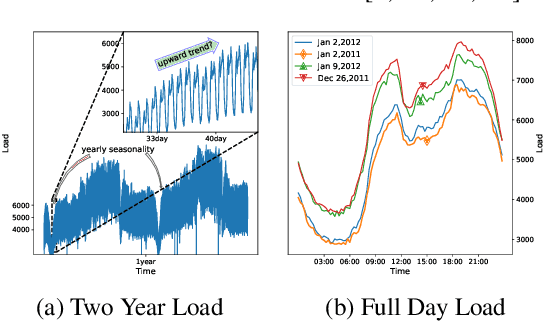

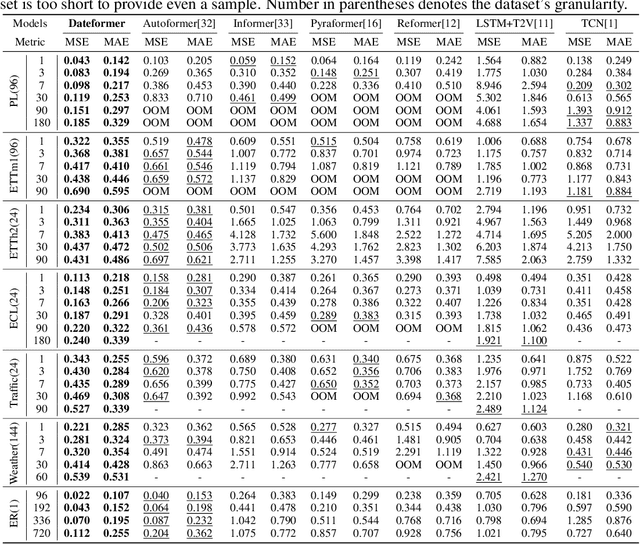

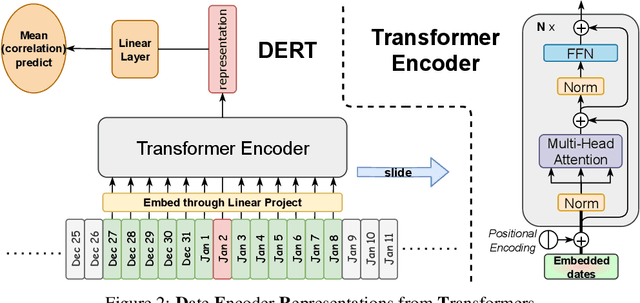

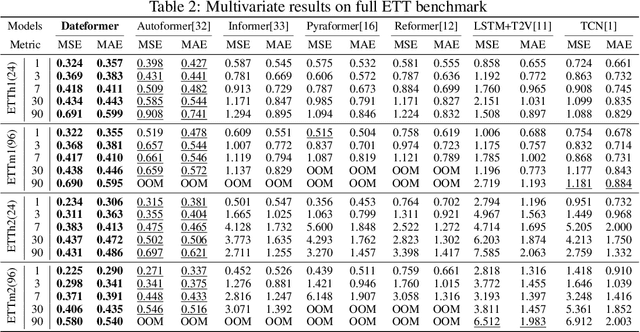

Time is one of the most significant characteristics of time series, yet it has received insufficient attention. Prior time-series forecasting research has mainly focused on mapping a past subseries (the lookback window) to a future series (the forecast window), and the time information of a series often plays only an auxiliary role or is ignored entirely. Because these windows are processed point-wise, extrapolating a series further into the future is difficult under this paradigm. To overcome this barrier, we propose a brand-new time-series forecasting framework named Dateformer, which turns its attention to modeling time instead of following the above practice. Specifically, time series are first split into patches by day to supervise the learning of dynamic date-representations with Date Encoder Representations from Transformers (DERT). These representations are then fed into a simple decoder to produce a coarser (or global) prediction, and are also used to help the model seek valuable information from the lookback window to learn a refined (or local) prediction. Dateformer obtains the final result by summing these two parts. Our empirical studies on seven benchmarks show that the time-modeling method is more efficient for long-term series forecasting than sequence-modeling methods. Dateformer yields state-of-the-art accuracy with a remarkable 40% relative improvement, and broadens the maximum credible forecasting range to a half-yearly level.
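The two mechanical steps the abstract names, splitting a series into per-day patches and summing a global and a local prediction, can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function names `split_into_day_patches` and `combine_predictions`, the `steps_per_day` parameter, and the choice to drop an incomplete trailing day are all assumptions made for illustration, and the learned components (DERT and the decoder) are not modeled at all.

```python
import numpy as np


def split_into_day_patches(series, steps_per_day):
    """Reshape a 1-D series into an array of shape (num_days, steps_per_day).

    Hypothetical helper: any incomplete trailing day is dropped, which is
    an assumption of this sketch, not something the abstract specifies.
    """
    series = np.asarray(series, dtype=float)
    num_days = len(series) // steps_per_day
    return series[: num_days * steps_per_day].reshape(num_days, steps_per_day)


def combine_predictions(global_pred, local_pred):
    """Sum a coarse (global) and a refined (local) prediction element-wise."""
    return np.asarray(global_pred, dtype=float) + np.asarray(local_pred, dtype=float)


# Toy hourly series covering two full days plus two extra hours.
hourly = np.arange(50, dtype=float)
patches = split_into_day_patches(hourly, steps_per_day=24)

# Placeholder predictions standing in for the outputs of the learned parts.
global_pred = np.full(24, 10.0)
local_pred = np.linspace(-1.0, 1.0, 24)
final_pred = combine_predictions(global_pred, local_pred)
```

Here `patches` has one row per complete day, so each row could serve as the supervision target for one date's representation, and `final_pred` is simply the element-wise sum of the two placeholder forecasts.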
