SpikeSEE: An Energy-Efficient Dynamic Scenes Processing Framework for Retinal Prostheses

Paper and Code

Sep 16, 2022

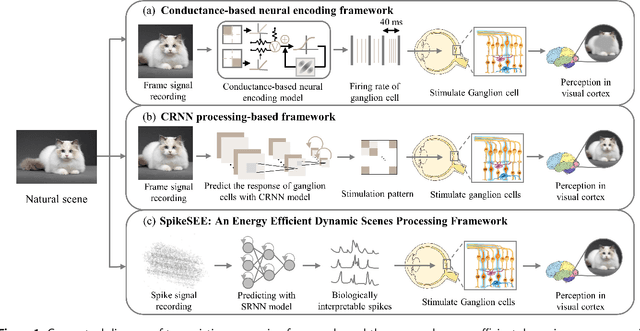

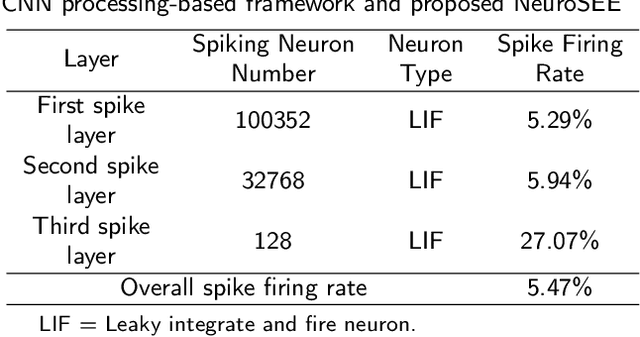

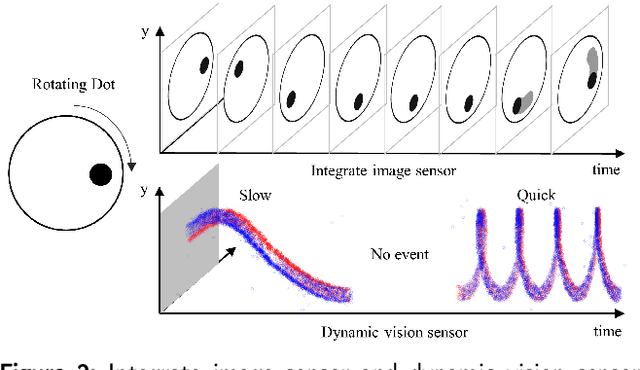

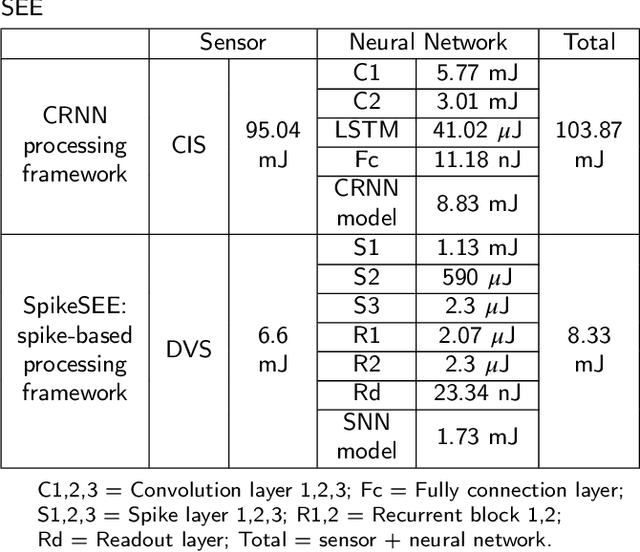

Intelligent and low-power retinal prostheses are highly demanded in this era, where wearable and implantable devices are used for numerous healthcare applications. In this paper, we propose an energy-efficient dynamic scenes processing framework (SpikeSEE) that combines a spike representation encoding technique and a bio-inspired spiking recurrent neural network (SRNN) model to achieve intelligent processing and extreme low-power computation for retinal prostheses. The spike representation encoding technique could interpret dynamic scenes with sparse spike trains, decreasing the data volume. The SRNN model, inspired by the human retina special structure and spike processing method, is adopted to predict the response of ganglion cells to dynamic scenes. Experimental results show that the Pearson correlation coefficient of the proposed SRNN model achieves 0.93, which outperforms the state of the art processing framework for retinal prostheses. Thanks to the spike representation and SRNN processing, the model can extract visual features in a multiplication-free fashion. The framework achieves 12 times power reduction compared with the convolutional recurrent neural network (CRNN) processing-based framework. Our proposed SpikeSEE predicts the response of ganglion cells more accurately with lower energy consumption, which alleviates the precision and power issues of retinal prostheses and provides a potential solution for wearable or implantable prostheses.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge