SpectralDefense: Detecting Adversarial Attacks on CNNs in the Fourier Domain

Paper and Code

Mar 04, 2021

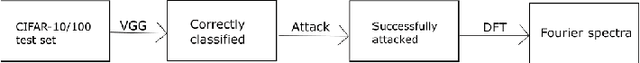

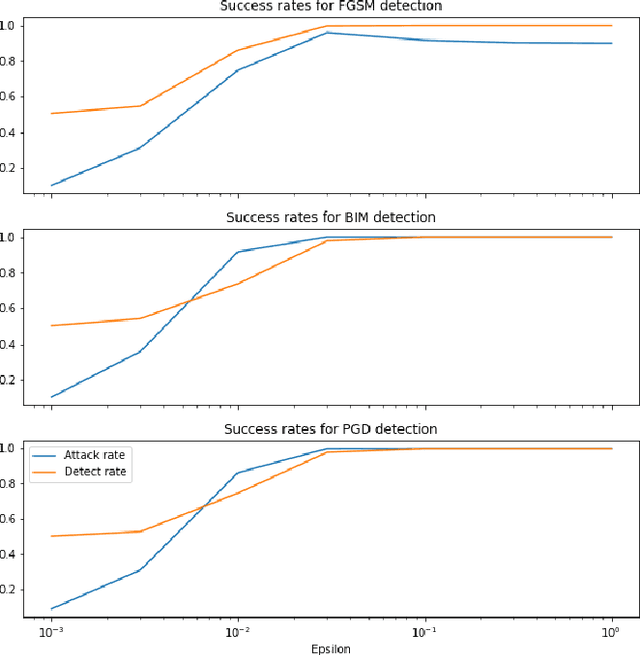

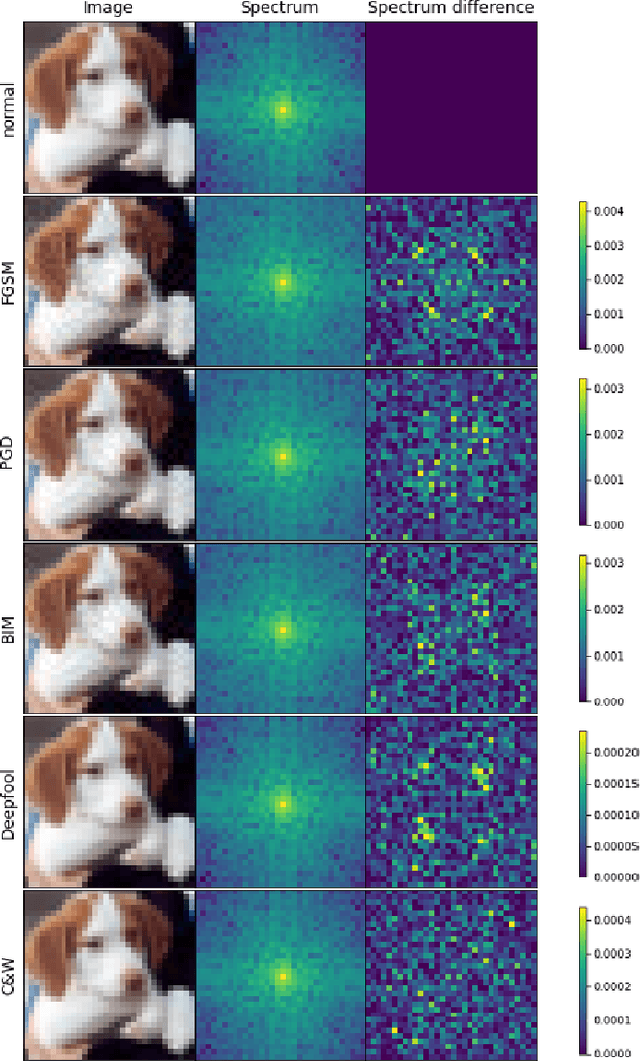

Despite the success of convolutional neural networks (CNNs) in many computer vision and image analysis tasks, they remain vulnerable against so-called adversarial attacks: Small, crafted perturbations in the input images can lead to false predictions. A possible defense is to detect adversarial examples. In this work, we show how analysis in the Fourier domain of input images and feature maps can be used to distinguish benign test samples from adversarial images. We propose two novel detection methods: Our first method employs the magnitude spectrum of the input images to detect an adversarial attack. This simple and robust classifier can successfully detect adversarial perturbations of three commonly used attack methods. The second method builds upon the first and additionally extracts the phase of Fourier coefficients of feature-maps at different layers of the network. With this extension, we are able to improve adversarial detection rates compared to state-of-the-art detectors on five different attack methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge