Sparse Over-complete Patch Matching


Jun 09, 2018

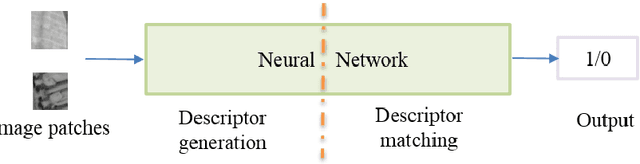

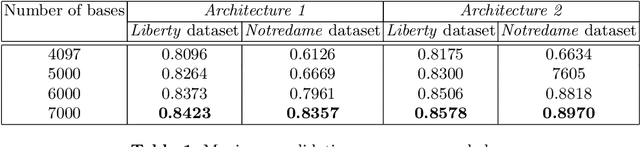

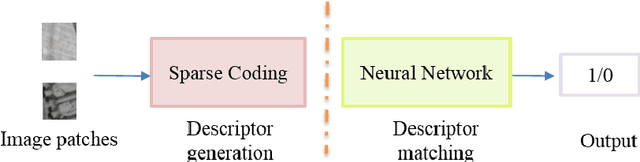

Image patch matching, the process of identifying corresponding patches across images, is used as a subroutine in many computer vision and image processing tasks. State-of-the-art patch matching techniques feed image patches to a convolutional neural network to extract patch features and evaluate their similarity. Our aim in this paper is to improve on state-of-the-art patch matching techniques by observing that a sparse over-complete representation of an image possesses statistical properties of natural visual scenes which can be exploited for patch matching. We propose a new paradigm which sparsely encodes each image patch and subsequently uses this sparse representation as input to a neural network to compare the patches. As sparse coding is based on a generative model of natural image patches, it can represent a patch in terms of the fundamental visual components of which it is composed, leading to similar sparse codes for patches which are built from similar components. Once the sparse coded features are extracted, we employ a fully-connected neural network, which captures the non-linear relationships between features, for comparison. We have evaluated our approach using the Liberty and Notredame subsets of the popular UBC patch dataset and set a new benchmark, outperforming all state-of-the-art patch matching techniques on these datasets.
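The two-stage idea described above (sparse-code each patch over an over-complete dictionary, then compare the codes with a fully-connected network) can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration and is not taken from the paper: the dictionary is random rather than learned, the sparse codes are computed with plain ISTA, the network is a single hidden layer with untrained random weights, and the names `sparse_code` and `match_score` are hypothetical.

```python
import numpy as np

def sparse_code(patch, dictionary, lam=0.1, n_iter=100):
    """Sparse-code a patch with ISTA (an assumed solver, not the paper's).

    Approximately minimizes 0.5 * ||x - D z||^2 + lam * ||z||_1 over z.
    """
    x = patch.ravel().astype(np.float64)
    # Step size 1/L, where L is the squared spectral norm of the dictionary.
    step = 1.0 / np.linalg.norm(dictionary, 2) ** 2
    z = np.zeros(dictionary.shape[1])
    for _ in range(n_iter):
        grad = dictionary.T @ (dictionary @ z - x)
        u = z - step * grad
        # Soft-thresholding sets small coefficients exactly to zero.
        z = np.sign(u) * np.maximum(np.abs(u) - step * lam, 0.0)
    return z

def match_score(z1, z2, w1, b1, w2, b2):
    """Score a pair of sparse codes with a one-hidden-layer network."""
    h = np.maximum(0.0, np.concatenate([z1, z2]) @ w1 + b1)  # ReLU layer
    logit = h @ w2 + b2
    return 1.0 / (1.0 + np.exp(-logit))  # sigmoid, value in (0, 1)

rng = np.random.default_rng(0)
patch_dim, n_atoms, hidden = 64, 256, 32  # 8x8 patches, 4x over-complete

# Hypothetical random dictionary with unit-norm atoms (columns).
D = rng.standard_normal((patch_dim, n_atoms))
D /= np.linalg.norm(D, axis=0)

# Untrained, randomly initialized weights; a real system would learn these.
w1 = 0.1 * rng.standard_normal((2 * n_atoms, hidden))
b1 = np.zeros(hidden)
w2 = 0.1 * rng.standard_normal(hidden)
b2 = 0.0

patch_a = rng.standard_normal((8, 8))
patch_b = patch_a + 0.01 * rng.standard_normal((8, 8))  # near-duplicate

z_a = sparse_code(patch_a, D)
z_b = sparse_code(patch_b, D)
score = match_score(z_a, z_b, w1, b1, w2, b2)
```

Because the weights are untrained, the value of `score` carries no meaning here; the sketch only shows how data would flow from patch to sparse code to similarity score. In practice the dictionary would be learned from natural image patches and the network trained on labeled matching and non-matching pairs.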
